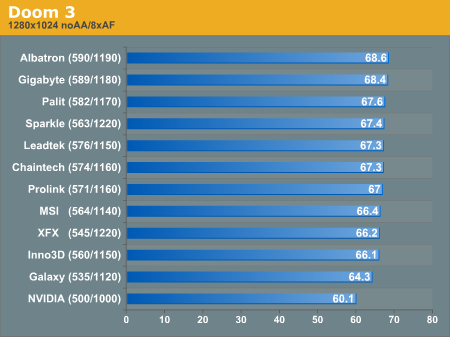

Overclocked Doom 3 Performance

The minimum overclock we achieved across the cards, 7% on the core and 12% on the memory, brought a 7% performance improvement in Doom 3. The XFX and Sparkle parts actually do better than cards with higher core overclocks because of their added memory bandwidth.

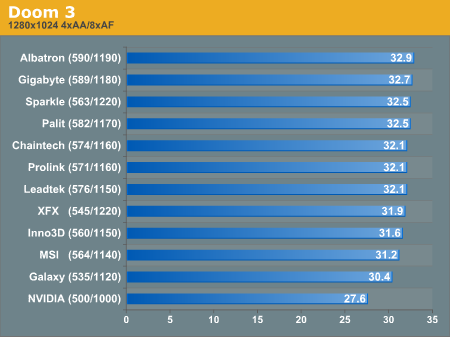

The need for memory bandwidth is even greater in Doom 3 with 4x AA enabled. Here, benchmark gains track the percentage increase in memory clock more closely than the increase in core clock.

If you don't tend to overclock, and you want to play with AA on, you will absolutely want the XFX part for its 1.6ns GDDR3.
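To show why the 1.6ns rating matters, here is a back-of-the-envelope estimate rather than a measured figure. It assumes the usual rule of thumb that a memory chip's rated clock is roughly the reciprocal of its access time, and it assumes the 6600 GT's 128-bit memory bus. Under those assumptions, the peak bandwidth at the chips' rated clock works out as:

$$
f_{\text{rated}} \approx \frac{1}{t_{\text{access}}} = \frac{1}{1.6\ \text{ns}} = 625\ \text{MHz}
$$

$$
B_{\text{peak}} = (2 \times 625\ \text{MHz}) \times \frac{128\ \text{bit}}{8\ \text{bit/byte}} = 1250\ \text{MT/s} \times 16\ \text{B} = 20\ \text{GB/s}
$$

By the same rule, 2.0ns chips are rated for 500 MHz, or 16 GB/s on the same bus. That gives the 1.6ns memory roughly 25% more clock headroom before it runs past its rating.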

84 Comments

Bonesdad - Wednesday, February 16, 2005

Yes, I too would like to see an update here... have any of the makers tackled the HSF mounting problems?

1q3er5 - Tuesday, February 15, 2005

Can we please get an update to this article with more cards, and replacements for the defective cards? I'm interested in the Gigabyte card.

Yush - Tuesday, February 8, 2005

Those temperature results are pretty dodgy. Surely no regular computer user would run a caseless computer. Those results are overly favourable: they only shed light on how cool the card CAN be, not how hot it actually runs in a regular scenario. The results would've been much more useful had the temperature been measured inside a case.

Andrewliu6294 - Saturday, January 29, 2005

I like the Albatron best. Exactly how loud is it? Like, how many decibels?

JClimbs - Thursday, January 27, 2005

Does anyone have any information on the Galaxy part? I can't find it in a Pricewatch or PriceGrabber search at all.

Abecedaria - Saturday, January 22, 2005

Hey there. I noticed that Gigabyte seems to have modified their HSI cooling solution. Has anyone had any experience with this? It looks much better. Comments?

http://www.giga-byte.com/VGA/Products/Products_GV-...

abc

levicki - Sunday, January 9, 2005

Derek, do you read your email at all? I got a Prolink 6600 GT card and would like to hear a suggestion for improving its cooling solution. I can confirm that the retail card reaches 95 C at full load and idles at 48 C. That is a really bad image for nVidia. They should be informed about vendors doing a poor job on cooling design. I mean, you would expect it to be way better, because those cards ain't cheap.

geogecko - Wednesday, January 5, 2005

Derek, could you speculate on what thermal compound is used between the HSF and the GPU on the XFX card? I e-mailed them, and they won't tell me what it is?! It would be great if it were paste or tape. I need to be able to remove it and then reinstall it later. I might be able to overlook not having the component video pod on the XFX card, as long as I get an HDTV that supports DVI.

Beatnik - Friday, December 31, 2004

I thought I would add something about the dual-DVI issue: the new NVIDIA drivers show that the second DVI port can be used for HDTV output. It appears that even the overscan adjustments are there.

So not having the component "pod" on the XFX card appears to be less of a concern than I thought it might be. It would be nice to hear if someone tried running 1600x1200 + 1600x1200 on the XFX, just to know if the DVI is up to snuff for dual LCD use.