EVE: Online Performance

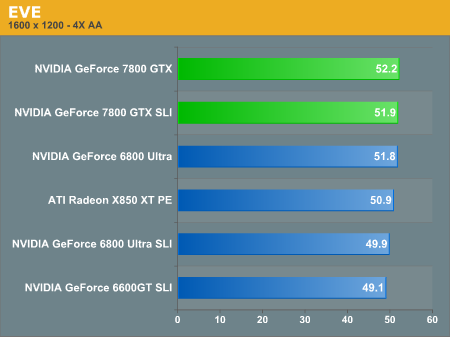

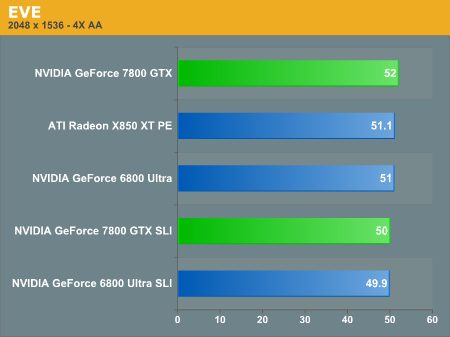

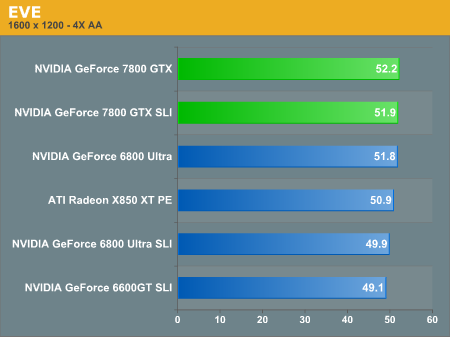

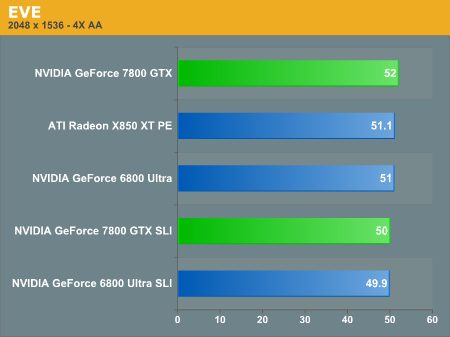

Eve is clearly not the most demanding of games when it comes to graphics cards. In fact, the difference between the various setups is at most 5%. There is also a problem with the SLI support, as it actually slows down performance in Eve. This could be due to the increased demands on the CPU required for dividing the workload between the cards, though we don't see this performance hit as much on the 6800 Ultra cards. Other than the problem with SLI decreasing performance, we're clearly CPU limited in Eve, so if this is your game du jour right now, you probably don't need to worry about a GPU upgrade for a while.

127 Comments

WaltC - Thursday, June 23, 2005 - link

I found this remark really strange and amusing: "It's taken three generations of revisions, augmentation, and massaging to get where we are, but the G70 is a testament to the potential the original NV30 design possessed. Using the knowledge gained from their experiences with NV3x and NV4x, the G70 is a very refined implementation of a well designed part."

Oh, please...nV30 was so poor that it couldn't even run at its factory speeds without problems of all kinds--which is why nVidia officially cancelled nV30 production after shipping a mere few thousand units. JHH, nVidia's CEO, went on record saying, "nV30 was a failure" [quote, unquote] at the time. nV30 was *not* the foundation for nV40, let alone the G70.

Indeed, if anything could be said to be foundational for both nV40 and G70, it would be ATi's R3x0 design of 2002. G70, imo, has far more in common with R300 than it does nV30. nV30, if you recall, was primarily a DX8 part with some hastily bolted on DX9-ish add-ons done in response to R300 (fully a DX9 part) which had been shipping for nine months prior to nV30 getting out of the door.

In fact, ATi owes its meteoric rise to #1 in the 3d markets over the last three years precisely to the R3x0 products which served as the basis for its later R4x0 architectures. Good riddance to nV3x, I say.

I'm always surprised at the short and selective memories displayed so often by tech writers--really makes me wonder, sometimes, whether they are writing tech copy for their readers or PR copy at the behest of specific companies, if you know what I mean.

JarredWalton - Thursday, June 23, 2005 - link

98 - As far as I know, the power was measured at the wall. We use a device called "Kill A Watt", and despite the rather lame name, it gives accurate results. It's almost impossible to measure the power draw of any single component without some very expensive equipment - you know, the stuff that AMD and Intel use for CPUs. So under load, the CPU and GPU (and RAM and chipset, probably) are using far more power than at idle.

PrinceGaz - Thursday, June 23, 2005 - link

I agree, starting at 1600x1200 for a card like this was a good idea. If your monitor can only do 1280x1024, you should consider getting a better one before buying a card like the 7800gtx. As a 2070/2141 owner myself, I know that a good monitor capable of high resolutions is a great investment that lasts a helluva lot longer than graphics cards, which are usually worthless after four or five years (along with most other components).

I'm surprised that no one has moaned about the current lack of an AGP version, to go with their Athlon XP 1700+ or whatever ;)

Johnmcl7 - Thursday, June 23, 2005 - link

I think it was spot on to have 1600x1200 as the minimum resolution. Given the power of these cards, I think the 1024x768, no AA/AF results for 3Dmark2003/2005 which have been thrown around are a complete waste of time.

John

Frallan - Thursday, June 23, 2005 - link

Good review... And re: the NDA deadlines and the sleepless nights - don't sweat it if a few mistakes are published. The readers here have their heads screwed on the right way and will find the issues soon enough. And for everyone that does not do 12*16 or 15*20 the answer is simple - U Don't Need The Power!! Save your hard earnt money and get a 6800gt instead.

Calin - Thursday, June 23, 2005 - link

Maybe if you could save the game, change the settings and reload it you could obtain images from exactly the same positions. In one of the fence images, the distance to the fence is quite a bit different in different screenshots.

Calin - Thursday, June 23, 2005 - link

You had a 7800 SLI? I hate you all :p

xtknight - Thursday, June 23, 2005 - link

Edit: last post correction: actually 21-page report!

xtknight - Thursday, June 23, 2005 - link

Jeez...a couple spelling errors here and there...who cares? I'd like to see you type up a 12-page report and get it out the door in a couple days with no grammatical or spelling errors, especially when your main editor is gone. Remember that English study that showed the human brain interpreted words based on patterns and not spelling?

I did read the whole review, word-for-word, with little to no trouble. There was not a SINGLE thing I had trouble comprehending. It's a better review than most sites have done which test lower resolutions. I love the non-CPU-limited benchmarks here.

One thing that made me chuckle was "There is clearly a problem with the SLI support in Wolfenstein 3D". That MS-DOS game is in dire need of SLI. (It's abbreviated Wolfenstein: ET. Wolf3D is an oooold Nazi game.)

SDA - Thursday, June 23, 2005 - link

Derek or Jarred or Wesley or someone:

Did you measure system power consumption as how much power the computer drew from the wall, or how much power the innards drew from the PSU?

#95, it's a good thing you know enough about running a major hardware site to help them out with your advice! :-)