ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST - Posted in GPUs

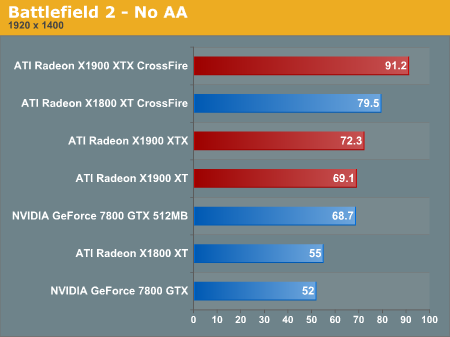

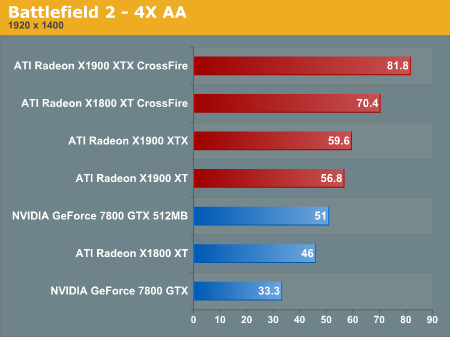

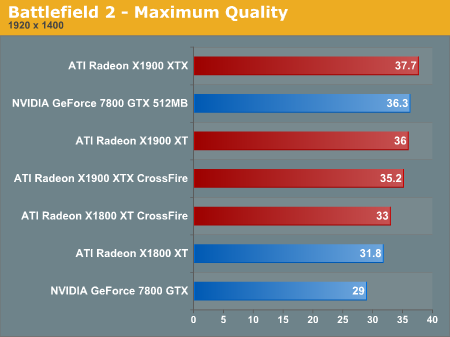

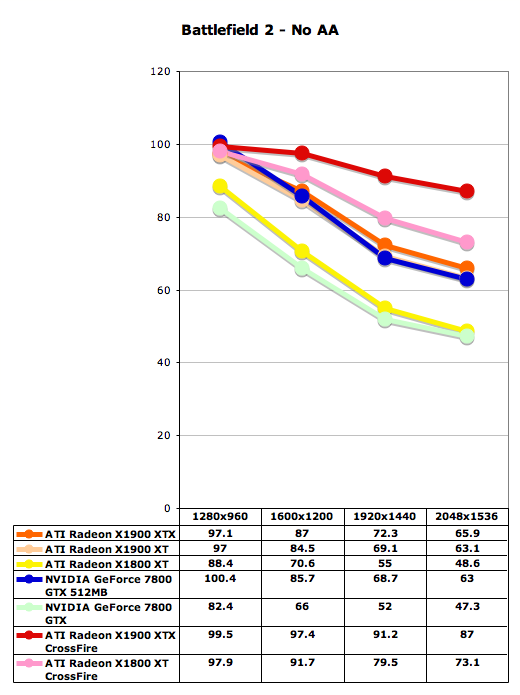

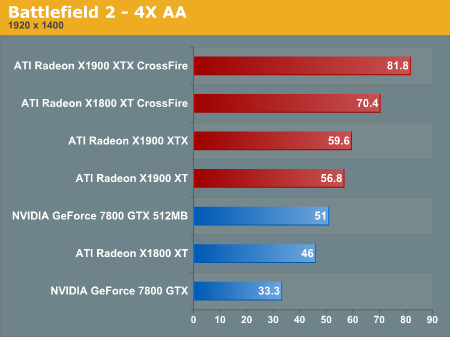

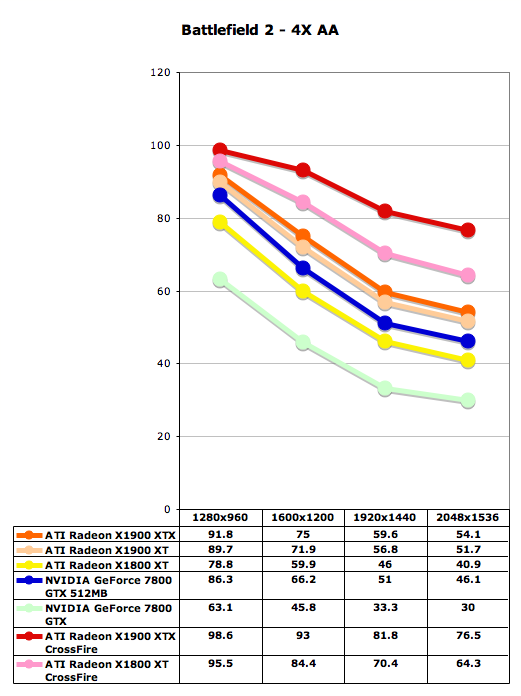

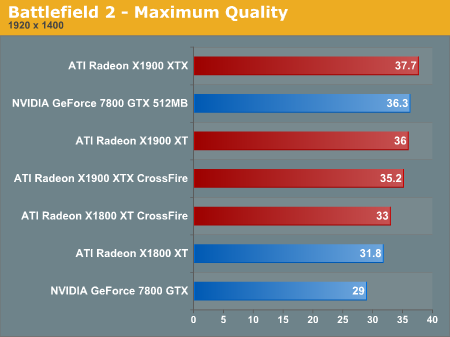

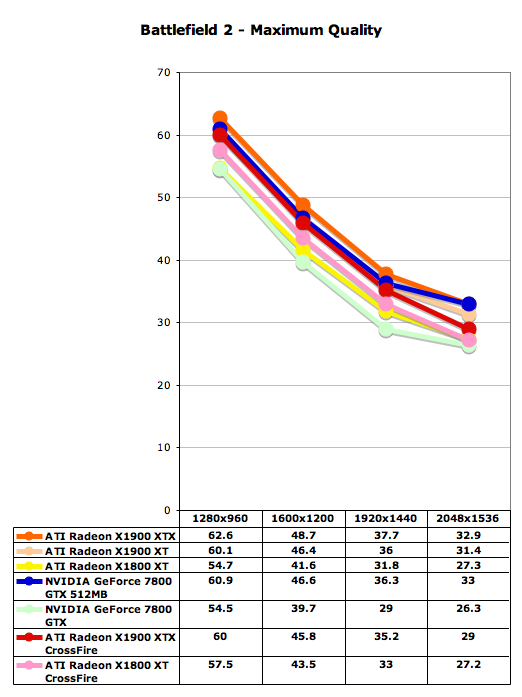

Battlefield 2 Performance

Battlefield 2 has long been a standard performance benchmark here, and it's probably one of our most important tests. Thanks to its impressive engine, it still stands out as one of the games that makes the best use of the latest graphics hardware available right now.

One of the first things to note is a theme that runs through all of the performance tests in this review: the X1900 XTX and X1900 XT perform very similarly, in some places differing by only a couple of frames per second. This is significant considering that the X1900 XTX costs about $100 more than the X1900 XT.

Below we have two sets of graphs for three different settings: no AA, 4xAA/8xAF, and maximum quality (higher AA and AF settings in the driver). Note that our BF2 benchmark had problems with NVIDIA's SLI, so we were forced to omit those numbers. Both with and without AA, the ATI and NVIDIA cards perform very similarly to each other. In general, though, ATI tends to do a little better with AA than NVIDIA, so it holds a slight edge here. With the maximum quality settings, we see a large drop in performance, which is expected. Keep in mind that in the driver options NVIDIA can enable AA up to 8X, while ATI can only go up to 6X, so the maximum quality numbers aren't directly comparable.


120 Comments


photoguy99 - Tuesday, January 24, 2006

Why do the editors keep implying the power of cards is "getting ahead" of games when it's actually not even close?

- 1600x1200 monitors are pretty affordable

- 8xAA does look better than 4xAA

- It's nice to play games with a minimum frame rate of 50-60

Yes, these are high-end desires, but the X1900XT can't even meet these needs despite its great power.

Let's face it - the power of cards could double tomorrow and still be put to good use.

mi1stormilst - Tuesday, January 24, 2006

Well said, well said my friend... We need to stop being so impressed by so very little. When games look like REAL LIFE does, with lots of colors, shading, no jagged edges (unless it's from the knife I just plunged into your eye) lol, you get the picture.

poohbear - Tuesday, January 24, 2006

technology moves forward at a slower pace than that mates. U expect every vid card to be a 9700pro?! right. there has to be a pace the developers can follow.

photoguy99 - Wednesday, January 25, 2006

I think we are agreeing with you - the article authors keep implying they have to struggle to push these cards to their limit because they are getting so powerful so fast.

To your point, I do agree it's moving forward slowly - relative to what people can make use of.

For example 90% of Office users can not make use of a faster CPU.

However 90% of gamers could make use of a faster GPU.

So even though GPU performance is doubling faster than CPU performance they should keep it up because we can and will use every ounce of it.

Powermoloch - Tuesday, January 24, 2006

It is great to see that ATi is doing their part right ;)

photoguy99 - Tuesday, January 24, 2006

When DX10 is released with Vista it seems like this card would be like having SM2.0 - you're behind the curve again.

Yea, I know there is always something better around the corner - and I don't recommend waiting if you want a great card now.

But I'm sure some people would like to know.

Spoelie - Thursday, January 26, 2006

Not at all, I do not see DX10 arriving before Vista near the end of this year. If it does arrive earlier, it will not make any splash whatsoever on game development before that. Even so, you cannot be 'behind' if your only competitor is still at SM3.0 as well. As far as I can tell, there will be no HARD architectural changes in G71/7900 - they might improve tidbits here and there, like support for AA while doing HDR rendering, but that will be about the full extent of changes.

DigitalFreak - Tuesday, January 24, 2006

True, but I'm betting it will be quite a while before we see any DX10 games. I would suspect that the R620/G80 will be DX10 parts.

timmiser - Tuesday, January 24, 2006

I expect that Microsoft's Flight Simulator X will be the first DX10 game.

hwhacker - Tuesday, January 24, 2006

Question to Derek (or whomever):

Perhaps I interpreted something wrong, but is it correct that you're saying X1900 is more of a 12x4 technology (because of fetch4) than the 16x3 we always thought? If so, that would make it A LOT more like Xenos, and perhaps R600, which makes sense, if I recall their ALU setup correctly (Xenos is 16x4, one for stall, so effective 16x3). R520 was 16x1, so... I gotta ask... Does this mean a 16x4 is imminent, or am I just reading the information incorrectly?

If that's true, ATi really did mess with the definition of a pipeline.

I can hear the rumours now... R590 with 16 QUADS, 16 ROPs, 16 TMUs, and 64 pixel processors... Oh yeah, and GDDR4 (on an 80nm process.) You heard it here first. ;)