ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST

Posted in: GPUs

Day of Defeat Performance

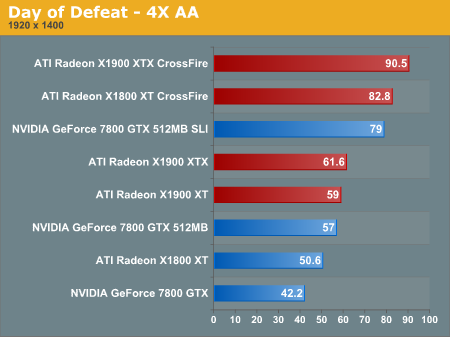

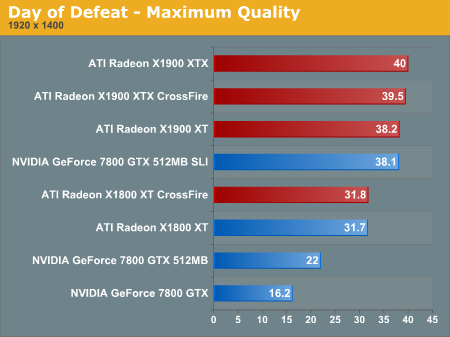

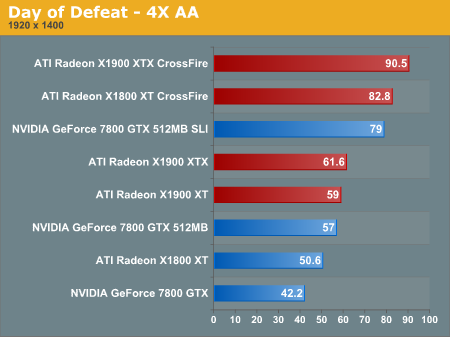

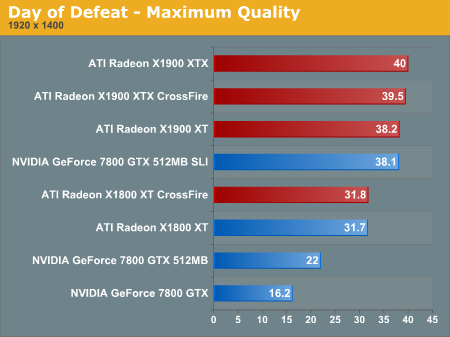

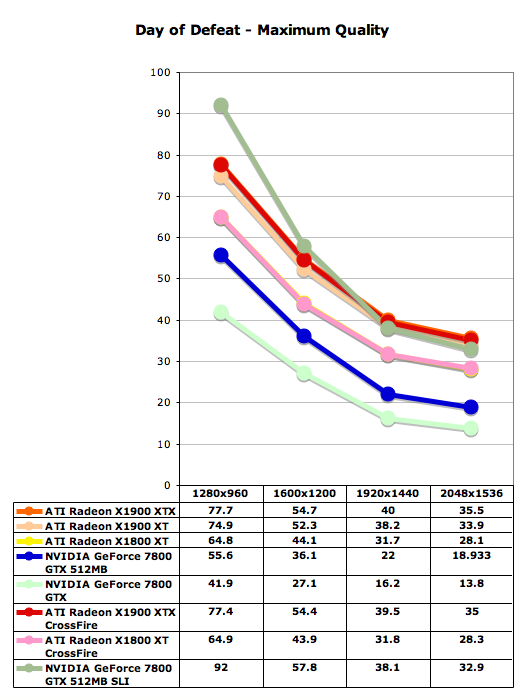

Day of Defeat uses Valve's new HDR technology on the Half-Life 2 engine, which makes this game a good performance benchmark. One of the most interesting things to note here is how large a performance hit NVIDIA takes when maximum quality settings are enabled in the control panel. Specifically, the 7800 GTX 512 gets roughly half the framerate with max quality enabled that it gets without it.

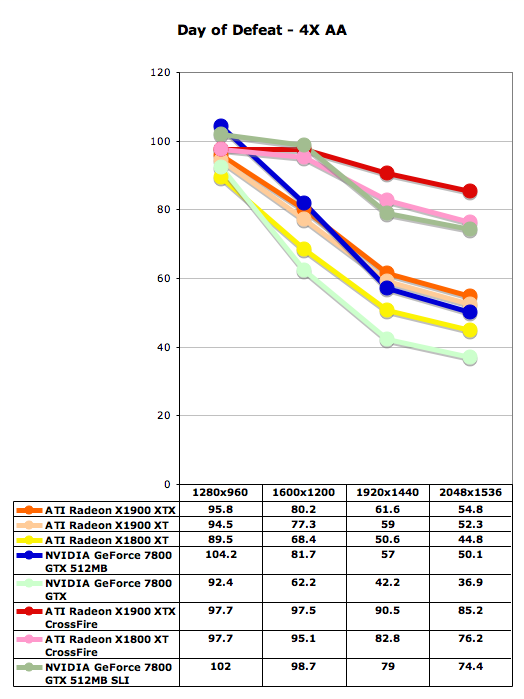

With this game, we've omitted tests without AA enabled because there tends to be a CPU limitation on higher-end cards. Notice that ATI scores only slightly better than NVIDIA with AA enabled, but when maximum quality is enabled in the driver, the gap widens considerably and ATI does a much better job across resolutions. ATI gets playable framerates at the highest resolutions with maximum quality enabled, but without an SLI setup, NVIDIA can't really manage similar settings (18.9 fps at 2048x1536 with max quality and 22 fps at 1920x1440 with max quality).

120 Comments

DerekWilson - Tuesday, January 24, 2006 - link

this is where things get a little fuzzy ... when we used to refer to an architecture as being -- for instance -- 16x1 or 8x2, we referred to the pixel shader's ability to texture a pixel. Thus, when an application wanted to perform multitexturing, the hardware would perform about the same -- single pass graphics cut the performance of the 8x2 architecture in half because half the texturing power was ... this was much more important for early dx, fixed pipe, or opengl based games. DX9 threw all that out the window, as it is now common to see many instructions and cycles spent on any given pixel.

in a way, since there are only 16 texture units you might be able to say it's something like 48x0.333 ... it really isn't possible to texture all 48 pixels every clock cycle ad infinitum. in an 8x2 architecture you really could texture each of 8 pixels with 2 textures every clock cycle forever.

to put it more plainly, we are now doing much more actual work with the textures we load, so the focus has shifted from "texturing" a pixel to "shading" a pixel ... or fragment ... or whatever you wanna call it.
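The "48x0.333" figure above is just the ratio of texture units to pixel shader processors. A minimal sketch of that arithmetic follows; the function name and its parameters are made up for illustration, and it assumes the texture units are shared evenly across all pixels, which is a simplification of how the hardware actually schedules work.

```python
from fractions import Fraction


def textures_per_pixel_per_clock(pixel_pipes: int, texture_units: int) -> Fraction:
    """Average texture fetches available to each pixel per clock.

    Hypothetical helper: assumes texture units are shared evenly.
    """
    return Fraction(texture_units, pixel_pipes)


# Classic 8x2 layout: 8 pixel pipes with 2 texture units each, 16 in total.
classic = textures_per_pixel_per_clock(pixel_pipes=8, texture_units=8 * 2)

# Layout described in the comment: 48 pixel shader processors, 16 texture units.
r580 = textures_per_pixel_per_clock(pixel_pipes=48, texture_units=16)

print(f"8x2: {classic} textures per pixel per clock")
print(f"48 shaders, 16 texture units: {float(r580):.3f} textures per pixel per clock")
```

Under that assumption, the 8x2 case can sustain 2 textures per pixel every clock, while the 48-shader case averages one third of a texture per pixel per clock.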

it's entirely different than xenos as xenos uses a unified shader architecture.

interestingly though, R580 supports a render to vertex buffer feature that allows you to turn your pixel shaders into vertex processors and spit the output straight back into the incoming vertex data.

but i digress ....

aschwabe - Tuesday, January 24, 2006 - link

I'm wondering how a dual 7800GT/7800GTX stacked up against this card.

i.e. Is the brand new system I bought literally 24 hours ago going to be able to compete?

Live - Tuesday, January 24, 2006 - link

SLI figures are all over the review. Go read and look at the graphs again.

aschwabe - Tuesday, January 24, 2006 - link

Ah, my bad, thanks.

DigitalFreak - Tuesday, January 24, 2006 - link

Go check out the review on hardocp.com. They have benchies for both the GTX 256 & GTX 512, SLI & non SLI.

Live - Tuesday, January 24, 2006 - link

No my bad. I'm a bit slow. Only the GTX 512 SLI are in there. sorry!

Viper4185 - Tuesday, January 24, 2006 - link

Just a few comments (some are being very picky I know)

1) Why are you using the latest hardware with an old Seagate 7200.7 drive when the 7200.9 series is available? Also no FX-60?

2) Disappointing to see no power consumption/noise levels in your testing...

3) You are like the first site to show Crossfire XTX benchmarks? I am very confused... I thought there was only an XT Crossfire card so how do you get Crossfire XTX benchmarks?

Otherwise good job :)

DerekWilson - Tuesday, January 24, 2006 - link

crossfire xtx indicates that we ran a 1900 crossfire edition card in conjunction with a 1900 xtx .... this is as opposed to running the crossfire edition card in conjunction with a 1900 xt.

crossfire does not synchronize GPU speed, so performance will be (slightly) better when pairing the faster card with the crossfire.

fx-60 is slower than fx-57 for single threaded apps

power consumption was supposed to be included, but we have had some power issues. We will be updating the article as soon as we can -- we didn't want to hold the entire piece in order to wait for power.

hard drive performance is not going to affect anything but load times in our benchmarks.

DigitalFreak - Tuesday, January 24, 2006 - link

See my comment above. They are probably running an XTX card with the Crossfire Edition master card.

OrSin - Tuesday, January 24, 2006 - link

Are gamers going insane? $500+ for a video card is not a good price. Maybe it's just me, but are bragging rights really worth that kind of money? Even if you played a game that needs it, you should be pissed at the game company that puts out a bloated mess that needs a $500 card.