DX10 for the Masses: NVIDIA 8600 and 8500 Series Launch

by Derek Wilson on April 17, 2007 9:00 AM EST - Posted in GPUs

The Cards and The Test

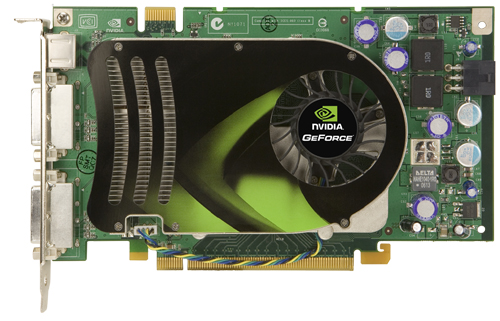

Both of our cards, the 8600 GT and the 8600 GTS, feature two DVI ports and a 7-pin video port. The GTS requires a 6-pin PCIe power connector, while the GT is capable of running using only the power provided by the PCIe slot. Each card is a single slot solution, and there isn't really anything surprising about the hardware. Here's a look at what we're working with:

In testing the 8600 cards, we used the 158.16 beta drivers. Because we tested under Windows XP, we had to use the 93 series driver for our 7 series parts, the 97 series driver for our 8800 parts, and the 158.16 beta driver for our new 8600 hardware. While Vista drivers are unified and the 8800 drivers were recently updated, GeForce 7 series owners running Windows XP (the vast majority of NVIDIA's customers) have been stuck with the same driver revision since early November of last year. We are certainly hoping that NVIDIA will release a new unified Windows XP driver soon. Testing with three different drivers from one hardware manufacturer is less than optimal.

We haven't done any Windows Vista testing this time around, as we still care about maximum performance and about testing in the environment in which most people will be using their hardware. This is not to say that we are ignoring Vista: we will be looking into DX10 benchmarks in the very near future. Right now, there is just no reason to move our testing to a new platform.

Here's our test setup:

| System Test Configuration | |
| --- | --- |
| CPU: | Intel Core 2 Extreme X6800 (2.93GHz/4MB) |
| Motherboard: | EVGA nForce 680i SLI |
| Chipset: | NVIDIA nForce 680i SLI |
| Chipset Drivers: | NVIDIA nForce 9.35 |
| Hard Disk: | Seagate 7200.7 160GB SATA |
| Memory: | Corsair XMS2 DDR2-800 4-4-4-12 (1GB x 2) |
| Video Card: | Various |
| Video Drivers: | ATI Catalyst 7.3; NVIDIA ForceWare 93.71 (G70); NVIDIA ForceWare 97.94 (G80); NVIDIA ForceWare 158.16 (8600) |
| Desktop Resolution: | 1280 x 800 - 32-bit @ 60Hz |
| OS: | Windows XP Professional SP2 |

The latest 100 series drivers do expose an issue with BF2 that enables 16xCSAA when 4xMSAA is selected in game. To combat this, we used the control panel to select 4xAA under the "enhance" application setting.

All of our games were tested using the highest selectable in-game quality options with the exception of Rainbow Six: Vegas. Our 8600 hardware had a hard time keeping up with hardware skinning enabled even at 1024x768. In light of this, we tested with hardware skinning off and medium blur. We will be doing a follow-up performance article that includes more games. We are looking at newer titles like Supreme Commander, S.T.A.L.K.E.R., and Command & Conquer 3. We will also follow up with video decode performance.

For the comparisons that follow, the 8600 GTS is priced similarly to AMD's X1950 Pro, while the 8600 GT competes with the X1950 GT.

60 Comments


shabby - Tuesday, April 17, 2007 - link

3dmark vista edition of course!

munky - Tuesday, April 17, 2007 - link

Why did you not include the x1950xt in the test lineup? It can also be had for about $200 now, like the 7950gt. You didn't want to make the 8600 series look worse than they already do, or what?

DerekWilson - Tuesday, April 17, 2007 - link

Wow, sorry for the omission -- I was trying to include specific comparison points -- 3 from AMD and 3 from NVIDIA, but this one just slipped through the cracks. Sorry. It will be included in my performance update.

Elwe - Tuesday, April 17, 2007 - link

Now, now guys. True that these cards are not going to be what many of you want (there are some good reasons to stay with what you have considering the performance differential of several of the last generation cards). And it is clear that these cards will not touch the 8800 cards (from what I can tell, the only thing these do better is 100% Pure HD on the card, which I guess is because these might be paired with not-so-great cpus).

But for some of us, they might work. I recently bought a Dell 390 workstation. I packed it with fast drives, QX6700 cpu, and 4gb ram. There were very few BTO graphics choices, and most centered around the Pro market (this is a "workstation" after all). This is a new machine, and quite powerful! I want to work and play on this box. Because of the relatively weak power supply (rated at 375 watts or something like that) and because I need both available non-graphics PCIe slots (if you put in an 8800 GTS, even if you changed the power supply, this type of dual slot card will cover one of those slots), I have been waiting for something reasonably powerful to come along (again, I am not going to just work on this box; I would like to play UT2k7, too:). Since I run Linux, I was trying to stick with the Nvidia line (my experience is that they have better drivers for this platform, but perhaps ATI has stepped up in the last half year or so). I could have gone with the 79xx line (single slot), but I wanted to see what the new generation would bring. Depending on what you need/want, I think either a slightly-used 7950GT OC or a 8600 GTS would work just fine for me. It does not seem unreasonable to me that in some things the older higher end card is faster than the newer mid range card, and vice versa. But I did not see any benchmark where the 79xx line whooped the 8600 GTS thoroughly (like what happened with several benchmarks comparing the 8800 and 8600).

I would say that the only immediate problem I might have with using the 8600 GTS is for gaming at high resolutions. I have a Dell 2407, and Anandtech's benchmarks make it clear I should not be gaming at that high a resolution. Bummer. The 7950 GT OC might very well be the better option here.

In an ideal world, I really would like the power of an 8800 (and, fortunately, I can pay for it). But I really need the PCIe slot more, and changing out the power supply would add even more cost. I could have gotten another Dell model (like the XPS 710 or the Precision 490)--and I am thinking about just that. But I got the 390 for what I considered good reasons (a damned sight cheaper than the 490, and I have no need of another cpu socket when I can have 4 cores in one socket), and the XPS 710 did not have BTO storage options that I wanted (not sure why they could not design that thing to have more than two internal drives--the thing is big enough; maybe most games do not need it, as that is what the machine was designed for). I bet I am not the only one.

GhandiInstinct - Tuesday, April 17, 2007 - link

Masses would include AGP cards...I see no AGP DX10 cards...

aka1nas - Tuesday, April 17, 2007 - link

The "masses" don't build their own computers, and thus have long since stopped purchasing machines with AGP slots.

GhandiInstinct - Tuesday, April 17, 2007 - link

The "masses" also don't go hunting for DX10 cards to add FPS to their hardcore Dell and Gateway gaming rigs.

Be honest with yourself, the people going for these cards are custom riggers.

AGP DX10 please, there's hundreds of thousands with Pentium 3.4 Northwoods that know their processors will run BioShock well, but they need DX10 without paying for a new motherboard, DDR2, and everything else, including Vista!!!

JarredWalton - Tuesday, April 17, 2007 - link

Actually, I don't think anyone "knows" whether or not any current system will run BioShock well or not. Let's wait for the game to appear at least. We're still at least four months away (assuming they hit the current release date).

While I can understand people complaining about the lack of AGP cards, let's be honest: why should either company invest a lot of money in an old platform? It takes time to make the AGP cards and more time to make sure the drivers all work right. At some point, the old tech has to be left behind. The cost to transition from an AGP setup to a PCIe setup is often under $100, so if the AGP cards had a $50 price premium you'd only save yourself $50 and still be stuck with the older platform.

I figure AMD/ATI and NVIDIA basically ignored the complaints with X1900/7900 class hardware (the best was several notches below what was available on PCIe), and at this point I think they're done. I'd even go so far as to say we're probably now at the point where an AGP platform would start to be a bottleneck with current hardware - maybe not midrange stuff, but certainly the high-end offerings.

Let's put it another way: why can't I get something faster than a single core 2.4GHz 1MB cache Athlon 64 3700+ for socket 754? Why can't I get Pentium D or Core 2 Duo for socket 478? Why do we need new motherboards for Core 2 Duo when socket 775 has been in use since 915/925? Intel and AMD have forced transitions on users for years, and after a long run it's tough to say that AGP hasn't fulfilled its purpose. Such is the life of PC hardware.

GhandiInstinct - Tuesday, April 17, 2007 - link

Good points, I agree with them all.

Basically, I feel that my 3.2 northwood, 2GB ram is worth salvaging for BioShock and Hellgate, obviously not Crysis, but it's convenient that it will be released in 08.

I figure I can hold out 8 more months, save up during this time, and switch to quad and DDR3.

I service hundreds of clients a week in tech support that have AGP setups, and I don't think Nvidia and ATi will abandon AGP with DX10, especially since there is speculation that they will be releasing these cards in the future: http://www.theinquirer.net/default.aspx?article=37...

:)

LoneWolf15 - Tuesday, April 17, 2007 - link

"While it would be nice to have this hardware in NVIDIA's higher end offerings, this technology arguably makes more sense in mainstream parts. High end, expensive graphics cards are usually paired with high end expensive CPUs and lots of RAM. The decode assistance that these higher end cards offer is more than enough to enable a high end CPU to handle the hardest hitting HD videos. With mainstream graphics hardware providing a huge amount of decode assistance, the lower end CPUs that people pair with this hardware will benefit greatly."

IMO, this is absolute bollocks.

If I'm paying for nVidia's high-end stuff, I expect high-end everything. And this is at least the second time nVidia has only improved video on their second-round or midrange parts (anybody remember NV40/45?).

I game some, and I want good performance for that. But, I also have a 1920x1200 display, and I want the best video playback experience I can get on it. I also want the lower CPU-usage so I can playback video while my system is left to do other processor-intensive tasks in the background.

Once again, nVidia has really disappointed me in this area. In comparison, ATI seems to be much better at making sure their full range of cards supports their best video technologies. This (along with nVidia's driver development) continues to make the G80 seem like a "rushed-out-the-door" product.