ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

Beyond the Shader: Coloring Pixels

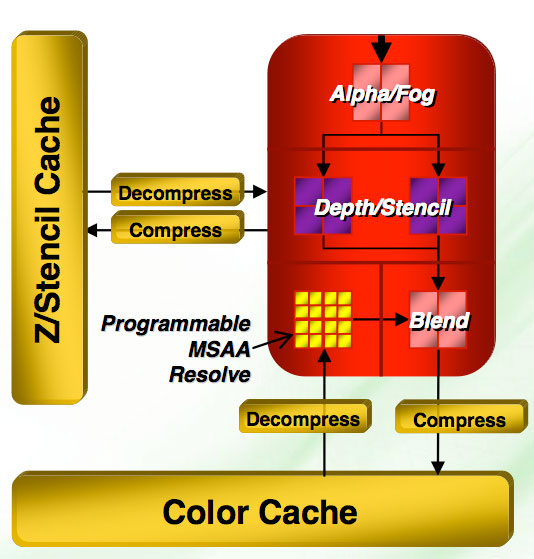

We can't ignore the last few steps in the rendering pipeline, as AMD has also updated their render back ends (analogous to NVIDIA's ROPs) which are responsible for determining the visibility of each fragment and the final color of each pixel on the screen. Beyond this, the render back ends handle compression and decompression, render to texture functionality, MRTs, framebuffer formats, and usually AA.

Once again, one of the important things to note is that R600 only has four render back ends. This means we will only see 16 pixels complete per clock at maximum, just like the R580. However, AMD has included double the Z/stencil hardware so that we can get up to 32 total Z/stencil ops per clock out of the render back ends to improve stencil shadow operations, among other things. Pure fill rate hasn't really mattered for some time, but Z/stencil capability remains important. But will only four render back ends be enough?
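To make the per-clock figures above concrete, here is a minimal arithmetic sketch in Python. The four render back ends, the 16 pixels per clock, and the doubled Z/stencil rate come from the text; the per-back-end figure is simply derived from them, and the constant and function names are our own, chosen for illustration.

```python
# Per-clock throughput implied by the paragraph above (illustrative arithmetic only).
RENDER_BACK_ENDS = 4       # stated in the article
PIXELS_PER_CLOCK = 16      # stated in the article (chip-wide maximum)
Z_STENCIL_MULTIPLIER = 2   # "double the Z/stencil hardware"


def pixels_per_back_end(total_pixels: int, back_ends: int) -> int:
    """Derived figure: pixels each render back end completes per clock."""
    return total_pixels // back_ends


def z_stencil_ops_per_clock(total_pixels: int, multiplier: int) -> int:
    """Peak Z/stencil operations per clock across all render back ends."""
    return total_pixels * multiplier


if __name__ == "__main__":
    print(pixels_per_back_end(PIXELS_PER_CLOCK, RENDER_BACK_ENDS))          # 4
    print(z_stencil_ops_per_clock(PIXELS_PER_CLOCK, Z_STENCIL_MULTIPLIER))  # 32
```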

Efficiency has been improved on the render back ends, but with the potential of completing 64 threads per clock from the shader hardware, they will need to really work to keep up. R600 has the ability to display floating point formats from 11:11:10 up to 128-bit fp. DX10 requires eight MRTs now, and we've got them. We also get more efficient render to texture features which should help enable more complex effects to process faster.

Z/Stencil Hardware

As far as Z/stencil hardware is concerned, compression has been boosted to as much as 16:1, up from 8:1 on the X1k series. Depth tests can be limited to a specific range programmatically, which can speed up stencil shadows. Our Z-buffer is now 32-bit floating point rather than 24-bit. Hierarchical Z has been enhanced to handle some situations where it was previously unable to assist in rendering, and AMD has added a hierarchical stencil buffer as well.
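As a rough illustration of what the compression ratios mean for Z-buffer data, the sketch below computes the best-case compressed size of a depth buffer. The 16:1 and 8:1 ratios are the peak figures quoted above; the 1600x1200 resolution and the 4 bytes per sample are assumptions picked for the example, and real compression depends entirely on scene content.

```python
# Best-case Z-buffer size under the peak compression ratios quoted above.
# ASSUMPTIONS (not from the article): 1600x1200 resolution, 4 bytes per depth
# sample for both generations, and that the peak ratio is actually achieved.

def z_buffer_bytes(width: int, height: int, bytes_per_sample: int = 4) -> int:
    """Uncompressed size of a depth buffer with one sample per pixel."""
    return width * height * bytes_per_sample


def best_case_compressed_bytes(uncompressed_bytes: int, peak_ratio: int) -> int:
    """Size if every block compressed at the peak ratio (an upper bound on savings)."""
    return uncompressed_bytes // peak_ratio


if __name__ == "__main__":
    raw = z_buffer_bytes(1600, 1200)
    print(raw)                                  # 7680000
    print(best_case_compressed_bytes(raw, 8))   # 960000 (X1k-series peak ratio)
    print(best_case_compressed_bytes(raw, 16))  # 480000 (R600 peak ratio)
```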

AMD is introducing something called Re-Z, which is designed to address Early-Z's inability to handle shaders that update Z data. R600 is able to check Z values before a shader runs as well as after the Z value has been changed in the shader. This allows AMD to throw out pixels that are updated to be out of view without sending them to the render back ends for evaluation.
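The two-stage check described above can be modeled with a small software sketch. This is a toy model, not AMD's implementation: the "less than" depth comparison, the function names, and the example shader that pushes depth farther away are all assumptions made for illustration, and the article does not say under which conditions the hardware applies the first check.

```python
from typing import Callable

# Toy model of a depth check before the shader and a second one after the
# shader has rewritten Z. ASSUMPTION: smaller Z is closer, test is "less than".


def passes_depth_test(fragment_z: float, stored_z: float) -> bool:
    """A fragment is visible if it is closer than what the Z-buffer holds."""
    return fragment_z < stored_z


def process_fragment(
    interpolated_z: float,
    stored_z: float,
    shader: Callable[[float], float],
) -> str:
    """Return where the fragment ends up in this simplified pipeline."""
    # First check: reject before spending any shader work.
    if not passes_depth_test(interpolated_z, stored_z):
        return "rejected_before_shader"
    # The shader runs and may output a new depth value.
    shader_z = shader(interpolated_z)
    # Second check: reject here instead of sending the pixel on.
    if not passes_depth_test(shader_z, stored_z):
        return "rejected_after_shader"
    return "sent_to_render_back_end"


def push_back(z: float) -> float:
    """Hypothetical shader that moves the fragment farther from the viewer."""
    return z + 0.3


if __name__ == "__main__":
    stored = 0.5
    print(process_fragment(0.7, stored, push_back))  # rejected_before_shader
    print(process_fragment(0.3, stored, push_back))  # rejected_after_shader
    print(process_fragment(0.1, stored, push_back))  # sent_to_render_back_end
```

In this model only the third fragment reaches the render back ends; the second one is the case the paragraph describes, where a pixel is updated to be out of view by the shader itself.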

If we compare this setup with G80, we're not as worried as we are about texture capability. G80 can complete 24 pixels per clock (4 pixels per ROP with six ROPs). Like R600, G80 is capable of 2x Z-only performance with 48 Z/stencil operations per clock with AA enabled. When AA is disabled, the hardware is capable of 192 Z-only samples per clock. The ratio of running threads to ROPs is actually worse on G80 than on R600. At the same time, G80 does offer a higher overall fill rate based on potential pixels per clock and clock speed.
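The fill rate comparison comes down to pixels per clock multiplied by clock speed, which the short sketch below works through. The per-clock figures are the ones quoted in this section; the core clocks (742 MHz for the HD 2900 XT, 575 MHz for the 8800 GTX) are assumed reference values that are not stated on this page, so substitute the clocks of the boards you are actually comparing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    pixels_per_clock: int   # from the article
    core_clock_mhz: float   # ASSUMPTION: reference clock, not stated on this page

    def peak_fill_rate_mpixels(self) -> float:
        """Theoretical peak pixel fill rate in megapixels per second."""
        return self.pixels_per_clock * self.core_clock_mhz


# G80: 4 pixels per ROP with six ROPs, as described in the text.
G80_PIXELS_PER_CLOCK = 4 * 6

R600 = Gpu("R600 (HD 2900 XT)", pixels_per_clock=16, core_clock_mhz=742.0)
G80 = Gpu("G80 (8800 GTX)", pixels_per_clock=G80_PIXELS_PER_CLOCK, core_clock_mhz=575.0)

if __name__ == "__main__":
    for gpu in (R600, G80):
        print(f"{gpu.name}: {gpu.peak_fill_rate_mpixels():.0f} Mpixels/s")
```

With these assumed clocks, G80's wider output more than offsets R600's higher clock speed, which matches the conclusion in the paragraph above. These are theoretical peaks only and say nothing about sustained performance.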

86 Comments

dragonsqrrl - Thursday, August 25, 2011

You forgot c) if you're an ATI fanboy

vijay333 - Monday, May 14, 2007

http://www.randomhouse.com/wotd/index.pperl?date=1...

"the expression to call a spade a spade is thousands of years old and etymologically has nothing whatsoever to do with any racial sentiment."

strikeback03 - Wednesday, May 16, 2007

What about in Euchre, where a spade can be a club (and vice versa)?

johnsonx - Monday, May 14, 2007

Just wait until AT refers to AMD's marketing budget as 'niggardly'...

bldckstark - Monday, May 14, 2007

What do shovels have to do with race?

Stan11003 - Monday, May 14, 2007

My big hope out of all of this is that the ATI part forces the Nvidia parts lower so I can use my upgrade option from EVGA to get a nice 8800 GTX instead of my 8800 GTS ACS3 320. However, with a quad core and a decent 2GB I have no gaming issues at all. I play at 1600x1200 (when did that become a low rez?) and everything is butter smooth. Without newer titles all this hardware is a waste anyways.

Gul Westfale - Monday, May 14, 2007

the article says that the part is not a failure, but i disagree. i switched from a radeon 1950pro to an nvidia geforce 8800GTS 320MB about a month ago, and i paid only $350US for it. now i see that it still outperforms the new 2900...one of my friends wanted to wait to buy a new card, he said he hoped that the ATI part was going to be faster. now he says he will just buy the 8800GTS 320, since ATI have failed.

if they can bring out a part that competes well with the 8800GTS and price it similarly or lower then it would be worth buying, but until then i will stick with nvidia. better performance, better price, and better drivers... why would anyone buy the ATI card now?

ncage - Monday, May 14, 2007

My conclusion is to wait. All of the recent GPUs do great with dx9...the question is how will they do with dx10? I think it's best to wait for dx10 titles to come out. I think crysis would be a PERFECT test.

wingless - Monday, May 14, 2007

I agree with you. Crysis is going to be the benchmark for these DX10 cards. It's hard to tell both Nvidia and AMD's DX10 performance with these current, first generation DX10 titles (most of which have a DX9 version) because they don't fully take advantage of all the power of either the G80 or R600 yet. It's true that Crysis will have a DX9 version as well but the developer stated there are some big differences in code. I'm an Nvidia fanboy but I'm disappointed with the Pure Video and HDMI support on the 8800 series cards. ATI got this worked out with their great AVIVO and their nice HDMI implementation but for now Nvidia is still the performance champ with "simpler" hardware. The G80 and R600 continue the traditions of their manufacturers. Nvidia has always been about raw power and all out speed with few bells and whistles. ATI is all about refinement, bells and whistles, innovations, and unproven new methods which may make or break them.

All I really want to wait for is to see how developers embrace CUDA or ATI's setup for PHYSICS PROCESSING! Both companies seem to have well thought out methods to do physics and I can't wait to see that showdown. AGEIA and HAVOK need to hop on-board and get some software support for all this good hardware potential they have to play with. Physics is the next big gimmick and you know how much we all love gimmicks (just like good 'ole 3D acceleration 10 years ago).

poohbear - Monday, May 14, 2007

they dont make a profit from high end parts that's why they're not bothering w/ it? that's AMD's story? so why bother having an FX line w/ their cpus?