The Radeon HD 4850 & 4870: AMD Wins at $199 and $299

by Anand Lal Shimpi & Derek Wilson on June 25, 2008 12:00 AM EST - Posted in GPUs

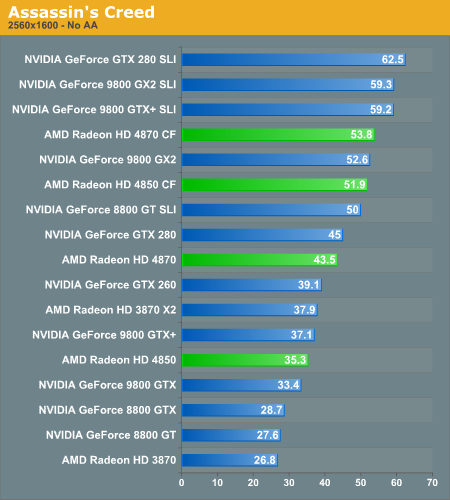

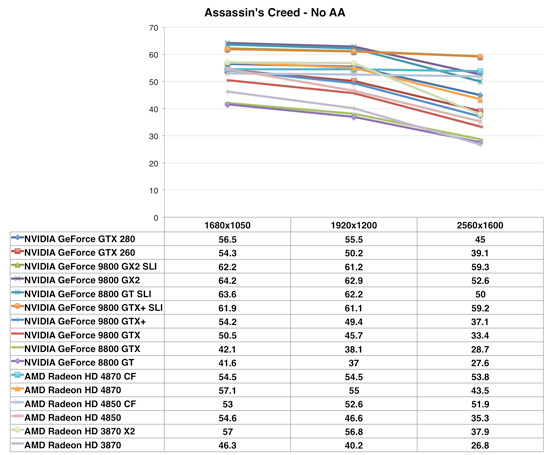

Assassin's Creed

Even at 2560 x 1600 the high end configurations are bumping into a frame rate limiter; any of the very high end setups is capable of running Assassin's Creed very well.

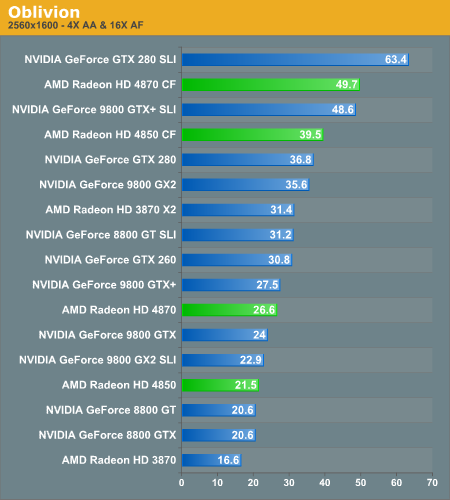

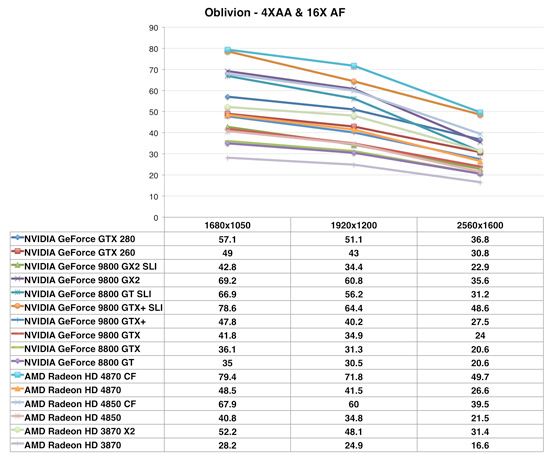

Oblivion

The GeForce 9800 GTX+ does very well in Oblivion and a pair of them actually gives the 4870 CF a run for its money, especially given that the GTX+ is a bit cheaper. While it's not the trend, it does illustrate that GPU performance can vary considerably from one application to the next. The Radeon HD 4870 is still faster overall, just not in this case, where it performs on par with a GTX+.

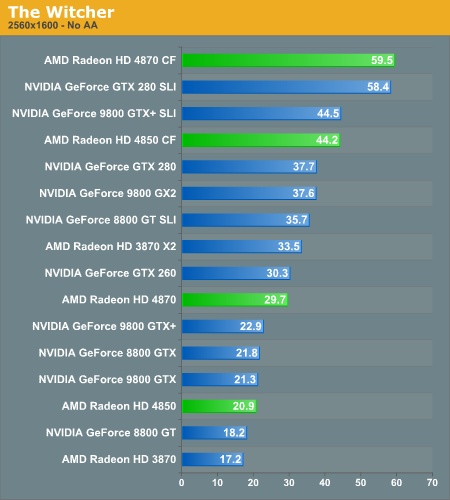

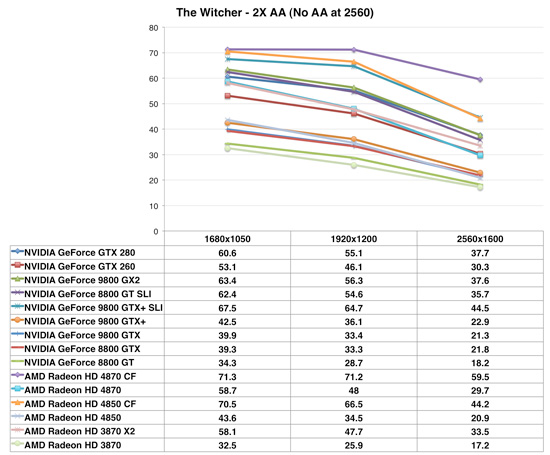

The Witcher

We've said it over and over again: while CrossFire doesn't scale as consistently as SLI, when it does, it has the potential to outscale SLI, and The Witcher is the perfect example of that. While the GeForce GTX 280 sees performance go up 55% from one to two cards, the Radeon HD 4870 sees a full 100% increase in performance.

It is worth noting that we are able to see these performance gains due to a late driver drop by AMD that enables CrossFire support in The Witcher. We do hope that AMD looks at enabling CrossFire in games other than those we test, but we do appreciate the quick turnaround in enabling support - at least once it was brought to their attention.
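For readers who want to work out multi-GPU scaling from their own benchmark runs, here is a minimal sketch of the arithmetic behind the percentages quoted above. The function name `scaling_percent` and the frame rates in the example are hypothetical, picked only so the output matches the 55% and 100% figures; they are not our measured results.

```python
def scaling_percent(single_fps: float, dual_fps: float) -> float:
    """Return the percentage performance gain going from one card to two.

    100.0 means the second card doubled the frame rate (perfect scaling);
    0.0 means it added nothing.
    """
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return (dual_fps - single_fps) / single_fps * 100.0


# Hypothetical frame rates, chosen only to illustrate the two cases in the text.
examples = {
    "55% scaling case": (40.0, 62.0),
    "100% scaling case": (40.0, 80.0),
}

for label, (one_card, two_cards) in examples.items():
    gain = scaling_percent(one_card, two_cards)
    print(f"{label}: {one_card:.0f} fps -> {two_cards:.0f} fps, +{gain:.0f}%")
```

A gain of 100% is the theoretical ceiling for a second identical card, which is why the CrossFire result in The Witcher stands out.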

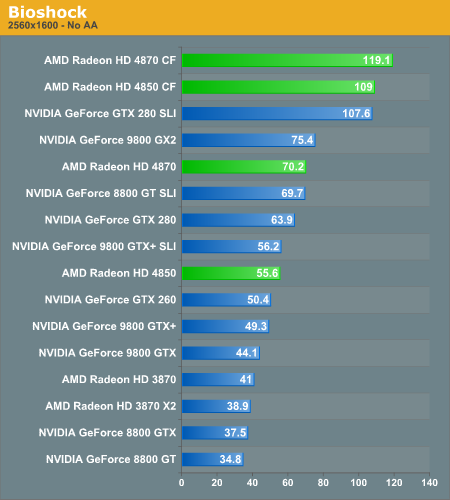

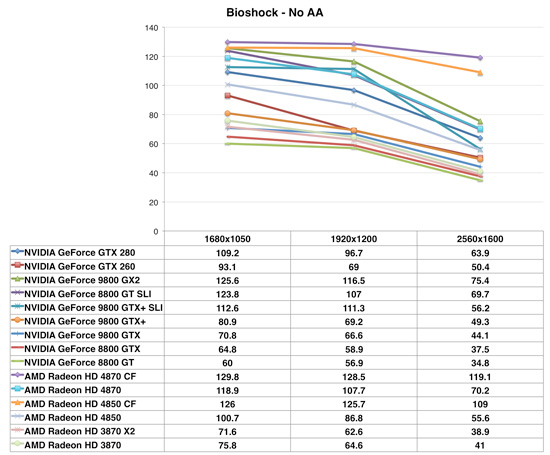

Bioshock

The Radeon HD 4000 series did very well in Bioshock in our single-GPU tests, but pair two of these things up and we're now setting performance records.

215 Comments

araczynski - Wednesday, June 25, 2008

...as more and more people are hooking up their graphics cards to big HDTVs instead of wasting time with little monitors, i keep hoping to find out whether the 9800gx2/4800 lines have proper 1080p scaling/synching with the tvs? for example the 8800 line from nvidia seems to butcher 1080p with tv's.

anyone care to speak from experience?

DerekWilson - Wednesday, June 25, 2008

i haven't had any problem with any modern graphics card (dvi or hdmi) and digital hdtvs

i haven't really played with analog for a long time and i'm not sure how either amd or nvidia handle analog issues like overscan and timing.

araczynski - Wednesday, June 25, 2008

interesting, what cards have you worked with? i have the 8800gts512 right now and have the same problem as with the 7900gtx previously. when i select 1080p for the resolution (which the drivers recognize the tv being capable of as it lists it as the native resolution) i get a washed out messy result where the contrast/brightness is completely maxed (sliders do little to help) as well as the whole overscan thing that forces me to shrink the displayed image down to fit the actual tv (with the nvidia driver utility). 1600x900 can usually be tolerable in XP (not in vista for some reason) and 1080p is just downright painful.

i suppose it could be my dvi to hdmi cable? it's a short run, but who knows... i just remember reading a bit on the nvidia forums that this is a known issue with the 8800 line, so was curious as to how the 9800 line or even the 4800 line handle it.

but as the previous guy mentioned, ATI does tend to do the TV stuff much better than nvidia ever did... maybe 4850 crossfire will be in my rig soon... unless i hear more about the 4870x2 soon...

ChronoReverse - Wednesday, June 25, 2008

ATI cards tend to do the TV stuff properly

FXi - Wednesday, June 25, 2008

If Nvidia doesn't release SLI to Intel chipsets (and on a $/perf ratio it might not even help if it does), the 4870 in CF is going to stomp sales of the 260's into the ground.

Releasing SLI on Intel and easing the price might help ease that problem, but of course they won't do it. Looks like ATI hasn't just come back, they've got a very, very good chip on their hands.

Powervano - Wednesday, June 25, 2008

Anand and Derek

What about temperatures of HD4870 under IDLE and LOAD? page 21 only shows power consumption.

iwodo - Wednesday, June 25, 2008

Given how ATI's architecture relies greatly on maximizing its shader use, wouldn't driver optimization be much more important than for Nvidia in this regard?

And what is ATI doing about Nvidia's CUDA? CUDA now has much bigger exposure than whatever ATI is offering.. CAL or CTM.. i dont even know now.

DerekWilson - Wednesday, June 25, 2008

getting exposure for AMD's own GPGPU solutions and tools is going to be tough, especially in light of Tesla and the momentum NVIDIA is building in the higher performance areas.

they've just got to keep at it.

but i think their best hope is in Apple right now with OpenCL (as has been mentioned above) ...

certainly AMD need to keep pushing their GPU compute solutions, and trying to get people to build real apps that they can point to (like folding) and say "hey look we do this well too" ...

but in the long term i think NVIDIA's got the better marketing there (both to consumers and developers) and it's not likely going to be until a single compute language emerges as the dominant one that we see level competition.

Amiga500 - Wednesday, June 25, 2008

AMD are going to continue to use the open source alternative - OpenCL.

In a relatively fledgling program environment, it makes all the sense in the world for developers to use the open source option, as compatibility and interoperability can be assured, unlike older environments like graphics APIs.

OSX v10.6 (Snow Leopard) will use OpenCL.

DerekWilson - Wednesday, June 25, 2008

OpenCL isn't "open source" ...

Apple is trying to create an industry standard heterogeneous compute language.

What we need is a compute language that isn't "owned" by a specific hardware maker. The problem is that NVIDIA has the power to redefine the CUDA language as it moves forward to better fit their architecture. Whether they would do this or not is irrelevant in light of the fact that it makes no sense for a competitor to adopt the solution if the possibility exists.

If NVIDIA wants to advance the industry, eventually they'll try and get CUDA ANSI / ISO certified or try to form an industry working group to refine and standardize it. While they have the exposure and power in CUDA and Tesla they won't really be interested in doing this (at least that's our prediction).

Apple is starting from a standards centric view and I hope they will help build a heterogeneous computing language that combines the high points of all the different solutions out there now into something that's easy to develop for and that can generate code to run well on all architectures.

but we'll have to wait and see.