NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

by Derek Wilson on March 3, 2009 3:00 AM EST - Posted in GPUs

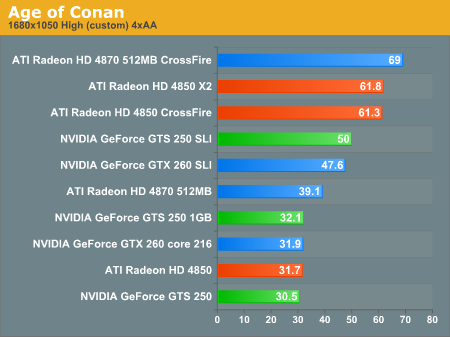

Age of Conan Performance

There is one caveat with this test -- the GTS 250 1GB was run with the latest update to Age of Conan, which may or may not have affected performance. The settings were adjusted to match what they were before the update (high settings with bloom, 4xAA, SM3.0, and advanced transparency).

Age of Conan comes out as basically a tie between the 4850 and the GTS 250 at 1680x1050. If the performance tests we ran on the 1GB GTS 250 are on par with the previous tests, then the 1GB GTS 250 and the 512MB 4850 are also effectively tied at playable resolutions, and the extra memory on the GTS 250 does help it scale better as resolution increases.

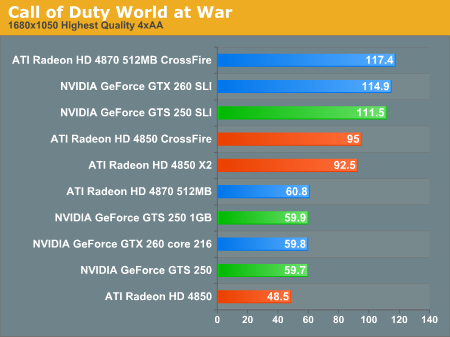

Call of Duty World at War Performance

NVIDIA hardware sweeps this benchmark, with the GTS 250 remaining playable all the way up to 2560x1600 while the 4850 can't hold on past 1920x1200. As there is very little difference between both the GTS 250 / 1GB and the 4850 CrossFire / X2, it doesn't seem like a 1GB 4850 would help very much here either.

103 Comments

sbuckler - Wednesday, March 4, 2009 - link

I don't understand the hate. They rebranded but more importantly dropped the price too. This forced ati to drop the price of the 4850 and 4870. That's a straight win for the consumer - whether you want ati or nvidia in your machine.

SiliconDoc - Wednesday, March 18, 2009 - link

Oh, now stop that silliness ! Everyone worthy knows only ati drops prices and causes the evil green beast to careen from another fatal blow. ( the evil beast has more than one life, of course - the death blow has been delivered by the sainted ati many times, there's even a shrine erected as proof ).

Besides, increasing memory, creating a better core rollout, redoing the pcb for better efficiency and pricing, THAT ALL SUCKS - because the evil green beast sucks, ok ?

Now follow the pack over the edge of the cliff into total and permanent darkness, please. You know when it's dark red looks black, yes, isn't that cool ? Ha ! ati wins again ! /sarc

Hrel - Wednesday, March 4, 2009 - link

I can't wait to read your articles on the new mobile GPU's and I'm REALLY looking forward to a comparison between 1GB 4850 and GTS250 cards; as well as a comparison between the new design for the GTS250 512MB and the HD4850 512MB.

It seems to me, if Nvidia wanted to do right by their customers, that they'd just scrap the 1GB GTS250 and offer the GTX260 Core216 at the $150 price point, it has a little less RAM so there's a little savings for them there. But then, that's if they wanted to do the right thing for their customers.

It's about time they introduced some new mobile GPU's, I hope power consumption and price is down as performance goes up!

I look forward to AMD releasing a new GPU architecture that uses significantly less power, like the GT200 series cards do. 40nm should help with that a bit though.

Finally, a small rant: When you think about it, we really haven't seen a new GPU architecture from Nvidia since the G80. I mean, the G90 and G92 are just derivatives of that and they only offer marginally better performance on their own; if you disregard the smaller manufacturing process the prices should even be similar at release. Then even the GT200 series cards, while making great gains in power efficiency, are still based on G92 and STILL only offer marginally better performance than the G92 parts; and worse, they cost a lot to make so they're overpriced for what they offer in performance. I sincerely hope that by the end of this year there has been an official press release and at least review samples sent out of completely new architectures from both AMD and Nvidia. Of course it'd be even better if those parts were released to market some time around November. Those are my thoughts anyway; congrats to you if you actually read through all of this:)

SiliconDoc - Wednesday, March 18, 2009 - link

"It seems to me, if Nvidia wanted to do right by their customers, that they'd just scrap the 1GB GTS250 and offer the GTX260 Core216 at the $150 price point, it has a little less RAM so there's a little savings for them there. But then, that's if they wanted to do the right thing for their customers."

_________________

So, they should just price their cards the way you want them to, with their stock in the tank, to satisfy your need to destroy them ?

Have fun, it would be the LAST nvidia card you could ever purchase. "the right thing for you" - WHAT EVER YOU WANT.

Man, it's just amazing.

Get on the governing board and protect the shareholders with your scheme, would you fella ?

Hrel - Saturday, March 21, 2009 - link

Hey, I know they can't do that. But that's their fault too; they made the GT200 die TOO BIG. I'm just saying, in order for them to compete in the market place well that's what they'd have to do. I DO want them to still make a profit; cause I wanna keep buying their GPU's. It's just that compared to the next card down, that's what the GTX260 is worth, cause it's just BARELY faster; maybe 160. But that's their fault too. The GT200 DIE is probably the WORST Nvidia GPU die EVER made, from a business AND performance standpoint.

SiliconDoc - Saturday, March 21, 2009 - link

PS - you do know you're insane, don't you ? The " GT200 is the probably the worst die from a performance standpoint."

Yes, you're a red loon rooster freak wacko.

Hrel - Thursday, April 9, 2009 - link

you left out Business standpoint, so I guess you at least concede that GT200 die is bad for business.

SiliconDoc - Saturday, March 21, 2009 - link

Now you claim you know, and now you ADMIT there is no place for it if they did, anyhow. Imagine that, but "you know" - even after all your BLABBERING to the contrary.

Now, be aware - Derek has already stated - the 40nm is coming with the GT200 shrunk and INSERTED into the lower bracket.

Maybe he was shooting off his mouth ? I'm sure "you know" -

( Like heck I am )

Six months from now, or more, and 40nm, will be a different picture.

Hrel - Wednesday, April 1, 2009 - link

seriously, what are you talking about?

pretty sure I'm gonna just ignore you from now on; pretty certain you are medically insane!

I'd respond to what you said, I honestly have no idea what you were TRYING to say though.

SiliconDoc - Wednesday, April 8, 2009 - link

You don't need to respond, friend. You blabber out idiocies of your twisted opinion that no one in their right mind could agree with, so it's clear you wouldn't know what anyone else is talking about.

You whine nvidia made the gt200 core too big, which is merely your stupid opinion.

The g92 core(ddr3) with ddr5 would match the 4870(ddr5), which is a 4850(ddr3) core.

So nvidia ALREADY HAS a 4850 killer, already has EVERYTHING the ati team has in that region - AND MORE BECAUSE OF THE ENDLESS "REBRANDING".

But you're just too crewed up to notice it. You want a GT200 that is PATHETIC like the 4830 - a hacked down top core. Well, only ATI can do that, because only their core SUCKS THAT BADLY without ddr5.

NVidia ALREADY HAS DDR3 ON IT.

SHOULD THEY GO TO DDR2 TO MOVE THEIR GT200 CORE DOWN TO YOUR DESIRED LEVEL ?

Now, you probably cannot understand ALL of that either, and being stupid enough to miss it, or so emotionally petrified, isn't MY problem, it's YOURS, and by the way, it CERTAINLY is not NVidia's - they are way ahead of your tinny, sourpussed whine, with a

JUST SOME VERY BASIC ELEMENTARY FACTS THAT SHOULD BE CLEAR TO A SIXTH GRADER.

Good lord.

the GT200 chips already have just ddr3 on them mr fuddy duddy, they CANNOT cut em down off ddr5 to make them as crappy as the 4850 or 4830, which BTW is matched by the two years old g80 revised core- right mr rebrand ?

Wow.

Whine whine whine whine.

I bet nvidia people look at that crap and wonder how STUPID you people are. How can you be so stupid ? How is it even possible ? Do the red roosters completely brainwash you ?

I know, you don't understand a word, I have to spell it out explicitly, just the very simple base drooling idiot facts need to be spelled out. Amazing.