ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST

Posted in: GPUs

PhysX in Warmonger: Fail

Cryostasis is a title due out this year; unfortunately, there is no playable demo, just a tech demo. Next.

Metal Knight Zero, MKZ for short, was another game on NVIDIA’s list. Once more, no playable demo, just a tech demo. We need real games here, people, real titles, if you’re trying to convince someone to buy NVIDIA on the merits of PhysX.

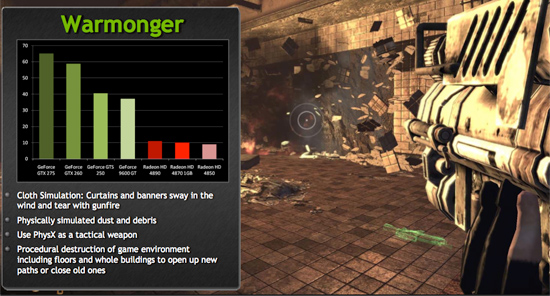

Warmonger, ah yes, now we have a playable game. Warmonger is a first person shooter that uses GPU accelerated PhysX to enable destructible environments. Allow me to quote NVIDIA:

The first thing about Warmonger is that it runs horribly slow on ATI hardware, even with GPU accelerated PhysX disabled. I’m guessing ATI’s developer relations team hasn’t done much to optimize the shaders for Radeon HD hardware. Go figure.

The verdict here (aside from “I don’t want to play Warmonger”) was that the GPU accelerated PhysX effects were not very, well, impressive. You could destroy walls, but the game itself wasn’t exactly fun so it didn’t matter. The realistic cloth that you could shoot holes through? Yeah, not terribly realistic looking.

Look at the hyper realistic cloth! Yeah, it looks like a highly advanced game from 6 years ago.

Warmonger itself wasn’t a triple-A first person shooter, and the GPU accelerated PhysX effects on top of it weren’t going to make the game any better. Sorry guys, none of us liked this one. PC Gamer gave it a 55/100. Looks like we weren’t alone. Next.

294 Comments

SiliconDoc - Monday, April 6, 2009

Well thanks for stomping the red rooster into the ground, definitively, after proving, once again, that what an idiot blabbering pussbag red spews about without a clue should not be swallowed with lust like a loose girl. I mean it's about time the reds just shut their stupid traps - 6 months of bs and lies will piss any decent human being off. Heck, it pissed off NVidia, and they're paid to not get angry. lol

tamalero - Sunday, April 5, 2009

arggh, lots of typos. "Mirrors Edge's PhysX in other hand does show indeed add a lot of graphical feel. " should have been: Mirrors Edge's physx in other hand, does indeed show a lot of new details.

lk7600 - Friday, April 3, 2009

Can you please die? Prefearbly by getting crushed to death, or by getting your face cut to shreds with a

pocketknife.

I hope that you get curb-stomped, f ucking retard

Shut the *beep* up f aggot, before you get your face bashed in and cut

to ribbons, and your throat slit.

papapapapapapapababy - Saturday, April 4, 2009

Yes, I love you too, silly girl.

lk7600 - Friday, April 3, 2009

Can you please remove yourself from the gene pool? Preferably in the most painful and agonizing way possible? Retard

magnetar68 - Thursday, April 2, 2009

Firstly, I agree with the article's basic premise that lack of convincing titles for PhysX/CUDA means this is not a weighted factor for most people. I am not most people, however, and I enjoy running NVIDIA's PhysX and CUDA SDK samples and learning how they work, so I would sacrifice some performance/quality to have access to these features (even spend a little more for them).

The main point I would like to make, however, is that I like the fact that NVIDIA is out there pushing these capabilities. Yes, until we have cross-platform OpenCL, physics and GPGPU apps will not be ubiquitous; but NVIDIA is working with developers to push these capabilities (and 3D Stereo with 3D VISION) and this is pulling the broader market to head in this direction. I think that vision/leadership is a great thing and therefore I buy NVIDIA GPUs.

I realize that ATI was pushing physics with Havok and GPGPU programming early (I think before NVIDIA), but NVIDIA has done a better job of executing on these technologies (you don't get credit for thinking about it, you get credit for doing it).

The reality is that games will be way cooler when you extrapolate from Mirror's Edge to what will be around down the road. Without companies like NVIDIA out there making solid progress on delivering these capabilities, we will never get there. That has value to me that I am willing to pay a little for. Having said that, performance has to be reasonably close for this to be true.

JarredWalton - Thursday, April 2, 2009

Games will be better when we get better effects, and PhysX has some potential to do that. However, the past is a clear indication that developers aren't going to fully support PhysX until it works on every mainstream card out there. Pretty much it means NVIDIA pays people to add PhysX support (either in hardware or other ways), and OpenCL is what will really create an installed user base for that sort of functionality.

If you're a dev, what would you rather do: work on separate code paths for CUDA and PhysX and forget about all other GPUs, or wait for OpenCL and support all GPUs with one code path? Look at the number of "DX10.1" titles for a good indication.

josh6079 - Thursday, April 2, 2009

Agreed. NVidia has certainly received credit for getting accelerated physics moving, but its momentum stops when they couple it to CUDA when offering it to discrete graphics cards outside of the GeForce family.

Hrel - Thursday, April 2, 2009

Still no 3D Mark scores, STILL no low-med resolutions. Thanks for including the HD4850, where's the GTS250??? Or do you guys still not have one? Well, you could always use a 9800GTX+ instead, and actually label it correctly this time. Anyway, thanks for the review and all the info on CUDA and PhysX; pretty much just confirmed what I already knew; none of it matters until it's cross-platform.

7Enigma - Friday, April 3, 2009

3DMark can be found in just about every other review. I personally don't care, but realize people compete on the Orb, and since it's just a simple benchmark to run it probably could be included without much work. The only problem I see (and agree with) is the highly optimized nature both Nvidia and ATI put on the PCVantage/3DMark benchmarks. They don't really tell you much about anything IMO. I'd argue they don't tell you about future games (since to my knowledge no (one?) games have ever used an engine from the benchmarks), nor do they tell you much between cards from different brands, since both vendors look for every opportunity to tweak them for the highest score, regardless of whether it has any effect on real-world performance.

What low-med resolution are you asking for? 1280X1024 is the only one I'd like to see (as that's what I and probably 25-50% of all gamers are still using), but I can see why in most cases they don't test it (you have to go to low end cards to have an issue with playable framerates on anything 4850 and above at that resolution). Xbitlabs' review did include 1280X1024, but as you'll see, unless you are playing Crysis: Warhead, and to a lesser extent Far Cry 2, with max graphics settings and high levels of AA, you are normally in the high double to triple digits in terms of framerate. Any resolution lower than that, you've got to be on integrated video to care about!