ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Latest CUDA App: MotionDSP’s vReveal

NVIDIA had more slides in its GTX 275 presentation about non-gaming applications than it did about how the 275 performs in games. One such application is MotionDSP's vReveal, a CUDA-enabled video post-processing application that can clean up poorly recorded video.

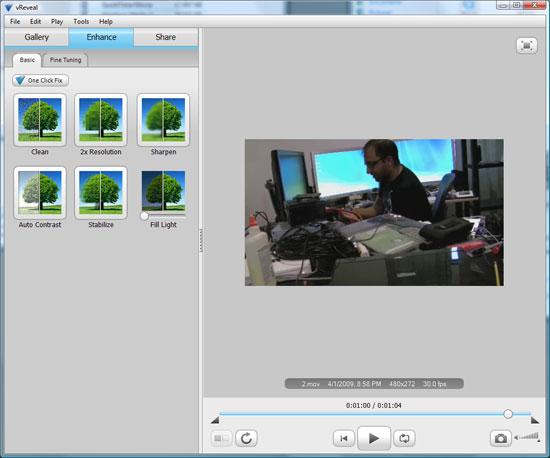

The application’s interface is simple:

Import your videos (pretty much anything with a supported codec on your system) and then select Enhance.

You can auto-enhance with a single click (super useful) or even go in and tweak individual sliders and settings on your own in the advanced mode.

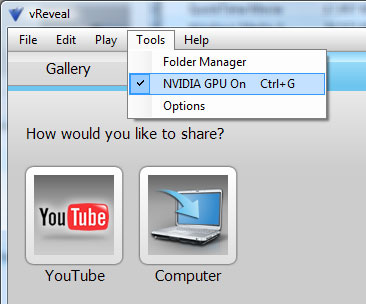

The changes you make to the video are visible on the fly, and the real-time preview is faster on an NVIDIA GPU than if you rely on the CPU alone.

When you're all done, simply hit save to disk and the video will be re-encoded with your changes. The encoding process runs entirely on the GPU, though it can also run on a CPU.

First, let's look at the end results. We took three videos: one recorded on Derek's wife's BlackBerry and two that I shot in my office on a Canon HD camcorder (but at low resolution).

I relied on vReveal's auto-enhance to fix the videos and posted both the originals and the vReveal versions on YouTube. The videos are below:

In every single instance, the resulting video looks better. While it's not quite the technology you see in shows like 24, it does improve your videos, and it does so pretty quickly. There's no real support for video editing here, and I'm not familiar enough with the post-processing software market to say whether there are better alternatives, but vReveal does what it says it does. And it uses the GPU.

Performance is also very good even on a reasonably priced GPU. It took the GeForce GTX 260 51 seconds to save the first test video; it took the Core i7 920 in my Dell Studio XPS 435 just over 3 minutes to do the same task.

It's a neat application. It works as advertised, but it only works on NVIDIA hardware. Will it make me want to buy an NVIDIA GPU over an ATI one? Nope. If all things are equal (price, power and gaming performance), then perhaps. But if ATI provides a better gaming experience, I don't believe it's compelling enough.

First, the software isn’t free - it’s an added expense. Badaboom costs $30, vReveal costs $50. It’s not the most expensive software in the world, but it’s not free.

And second, what happens if your next GPU isn't from NVIDIA? vReveal will continue to work, but you no longer get GPU acceleration. A vReveal-like app written in OpenCL would work on hardware from all three vendors, as long as each of them supports OpenCL.

If NVIDIA really wants to take care of its customers, it can start by giving away vReveal (and Badaboom) to people who purchase these high-end graphics cards. If you want to add value, don't tell users that they should want these things; give the software to them. The burden of proof is on NVIDIA to show that these CUDA-enabled applications are worth supporting rather than waiting for cross-vendor OpenCL versions.

Do you feel any differently?

294 Comments


7Enigma - Thursday, April 2, 2009

And just go and disregard everything I typed (minus the different driver versions). Xbit apparently underclocked the 4890 to stock speeds. So I have no clue how the heck their numbers are so significantly different, except that they list these system settings:

ATI Catalyst:

Smoothvision HD: Anti-Aliasing: Use application settings/Box Filter

Catalyst A.I.: Standard

Mipmap Detail Level: High Quality

Wait for vertical refresh: Always Off

Enable Adaptive Anti-Aliasing: On/Quality

Other settings: default

Nvidia GeForce:

Texture filtering – Quality: High quality

Texture filtering – Trilinear optimization: Off

Texture filtering – Anisotropic sample optimization: Off

Vertical sync: Force off

Antialiasing - Gamma correction: On

Antialiasing - Transparency: Multisampling

Multi-display mixed-GPU acceleration: Multiple display performance mode

Set PhysX GPU acceleration: Enabled

Other settings: default

If those were set differently in Anand's review, I'm sure you could get some weird results.

SiliconDoc - Monday, April 6, 2009

LOL - set PhysX GPU acceleration enabled. roflmao

Yeah man, I'm gonna get me that red card... (if you didn't detect the sarcasm, forget it)

tamalero - Thursday, April 9, 2009

Good to know you blame everyone for "bad reading understanding". Let's see:

ATI Catalyst:

Smoothvision HD: Anti-Aliasing: Use application settings/Box Filter

Catalyst A.I.: Standard

Mipmap Detail Level: High Quality

Wait for vertical refresh: Always Off

Enable Adaptive Anti-Aliasing: On/Quality

Other settings: default

Nvidia GeForce:

Texture filtering – Quality: High quality

Texture filtering – Trilinear optimization: Off

You see the big "NVIDIA GEFORCE:" right below "Other settings"?

That means PhysX was ENABLED on the GEFORCE CARD.

You, sir, are an NVIDIA fanboy and a big douché.

SiliconDoc - Thursday, April 23, 2009

More personal attacks, when YOU are the one who can't read, you IDIOT. Here are my first two lines:

LOL - set PhysX GPU acceleration enabled.

roflmao

_____

Then you tell me it says PhysX is enabled, which is what I pointed out. You probably didn't go look at the linked test results on the other site and put two and two together.

Look in the mirror and see who can't read, YOU FOOL.

Better luck next time, crowing barnyard animal.

"Cluckle red 'el doo ! Cluckle red 'ell doo !"

Let's see: I say PhysX is enabled, and you scream at me to point out that it says PhysX is enabled, and call me an nvidia fan because of it. That would make you an nvidia fan as well, according to you, IF you knew what the heck you were doing, which YOU DON'T.

That makes you - likely a red rooster... I may check on that - hopefully you're not a noob poster, too, as that would reduce my probabilities in the discovery phase. Good luck, you'll likely need it after what I've seen so far.

7Enigma - Thursday, April 2, 2009

Looked even closer, and the drivers used were different.

ATI drivers:

Anand-9.4 beta

Xbit-9.3

Nvidia:

Anand-185

Xbit-182.08

ancient46 - Thursday, April 2, 2009

I don't see the fun in shooting cloth and unrealistic, non-impact-resistant windows in high-rise buildings. The video with the cloth was distracting; it made me wonder why it was there. What was its purpose? My senior eyes did not see much of an improvement in the videos in the CUDA application.

SiliconDoc - Monday, April 6, 2009

Maybe someday you'll lose your raging red fanboy bias, break down entirely, toss out your life religion, and buy an nvidia card. At that point perhaps Mirror's Edge will come with it, and after digging it out of the trash can (second thoughts you had), you'll try it and, like anand, really like it: turn it off, notice what you've been missing, turn it back on, and enjoy. Then after all that, you can crow "meh". I suppose after that you can revert to red rooster raging fanboy. You'll have to have your best red bud rip you from your Mirror's Edge addiction, but that's ok, he's a red and will probably smack you for trying it out, and have a clean shot with how absorbed you'll be.

Well, that should wrap it up.

poohbear - Thursday, April 2, 2009

Are the driver issues for AMD significant enough that they need to be mentioned in a review article? I'm asking in all honesty, as I don't know. Also, about this close relationship nvidia has with developers: does it show up in any games as a significant performance edge for nvidia cards? Is there an example game out there for this? Thanks.

7Enigma - Thursday, April 2, 2009

Look no further than this article. :) Here's the quote: "The first thing about Warmonger is that it runs horribly slow on ATI hardware, even with GPU accelerated PhysX disabled. I'm guessing ATI's developer relations team hasn't done much to optimize the shaders for Radeon HD hardware. Go figure."

But ATI also has developer relationships that show an unusually large advantage (Race Driver G.R.I.D., for example). All in all, as long as no one is cheating by disabling effects or cutting draw distances, having games optimized only benefits the consumer. The more one side pushes for optimizations, the more the other side is forced to follow or risk losing the benchmark wars (which ultimately decide purchases for most people).

SkullOne - Thursday, April 2, 2009

The conclusion mentions Nvidia's partners releasing OC boards but says nothing about AMD. There are already two versions of the XFX HD4890 on Newegg: one with an 850MHz core and the other with an 875MHz core.

The HD4890 is geared to open up that "OC" SKU for AMD. People with stock cooling and stock voltage can already push the card to 950+MHz. The ASUS card lets you boost the GPU voltage, which has allowed people to get their GPUs over 1GHz. As the card matures, 1GHz cores on stock cooling and voltage will become a reality.

It seems like these facts are being ignored.