ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

New Drivers From NVIDIA Change The Landscape

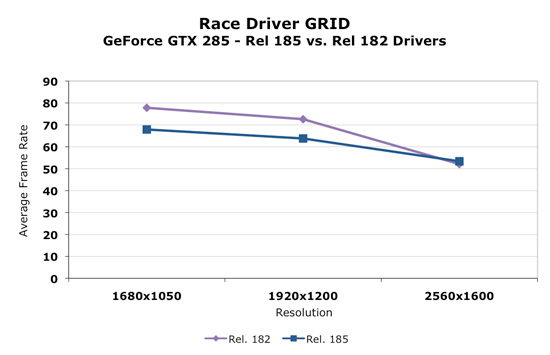

Today, NVIDIA will release its new 185 series driver. This driver not only enables support for the GTX 275, but also affects performance of parts across NVIDIA's lineup in a good number of games. We retested our NVIDIA cards with the 185 driver and saw some very interesting results. For example, take a look at before and after performance with Race Driver: GRID.

As we can clearly see, for the cards we tested, performance decreased at lower resolutions and increased at 2560x1600. This seemed to be the most extreme example, but we saw flatter resolution scaling in most of the games we tested. This could affect the competitiveness of the part depending on whether we are looking at low or high resolutions.

Some trade-off was made to improve performance at ultra-high resolutions at the expense of performance at lower resolutions. It could be something as simple as added driver overhead (and thus more CPU limitation) or something much more complex; we haven't been told exactly what causes this behavior. With higher-end hardware, this decision makes sense: resolutions below 2560x1600 already tend to perform well, while 2560x1600 is more GPU limited and could benefit from a boost in most games.
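NVIDIA hasn't told us what causes the trade-off, but the driver-overhead possibility can be illustrated with a toy model: each frame takes as long as the slower of the CPU side (game plus driver work) and the GPU side (which grows roughly with pixel count). Everything below is a hypothetical sketch; the names `fps` and `scaling` and all of the millisecond and per-pixel figures are made up for illustration, and they are neither our benchmark results nor a description of how the 185 driver actually works.

```python
# Hypothetical toy model: invented numbers, not measured data and not NVIDIA's driver.
# A frame is limited by whichever side is slower: CPU (game + driver) or GPU.

RESOLUTIONS = {
    "1680x1050": 1680 * 1050,
    "1920x1200": 1920 * 1200,
    "2560x1600": 2560 * 1600,
}

def fps(pixels, cpu_ms, driver_ms, gpu_ns_per_pixel):
    """Frames per second when CPU and GPU work overlap (frame time = slower side)."""
    cpu_side = cpu_ms + driver_ms                 # game logic plus driver overhead
    gpu_side = pixels * gpu_ns_per_pixel / 1e6    # ns -> ms; scales with pixel count
    return 1000.0 / max(cpu_side, gpu_side)

def scaling(driver_ms, gpu_ns_per_pixel, cpu_ms=10.0):
    """FPS at each resolution for one hypothetical driver configuration."""
    return {name: round(fps(px, cpu_ms, driver_ms, gpu_ns_per_pixel), 1)
            for name, px in RESOLUTIONS.items()}

# "Old" driver: little overhead, slower per-pixel GPU work.
old = scaling(driver_ms=1.0, gpu_ns_per_pixel=5.0)
# "New" driver: more CPU-side overhead, faster per-pixel GPU work.
new = scaling(driver_ms=3.0, gpu_ns_per_pixel=4.0)

for name in RESOLUTIONS:
    print(f"{name}: old {old[name]:6.1f} fps, new {new[name]:6.1f} fps")
```

In this model the extra overhead only matters while the CPU side is the bottleneck (the lower resolutions), and the faster GPU path only matters once the GPU side dominates (2560x1600), which produces the same kind of flattened scaling we measured.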

Significantly different resolution scaling characteristics can appeal to different users: an AMD card might look better at one resolution, while the NVIDIA card could come out on top at another. In general, we think these changes make sense, but it would be nicer if the driver automatically determined the best approach based on the hardware and the resolution in use (and thus didn't degrade performance at lower resolutions).

In addition to the performance changes, the driver adds a new feature. In the past we've seen filtering techniques, optimizations, and even dynamic manipulation of geometry added to the driver. Some features have stuck and some just faded away. One of the most popular additions to the driver was the ability to force full-screen antialiasing (FSAA), which produces smoother edges in games. This feature was more important at a time when most games had no in-game way to enable AA: the driver took over and implemented AA even in games that didn't offer an option to adjust it. Today the opposite is true, and most games allow us to enable and adjust AA.

Now we have the ability to enable a feature that isn't natively available in many games and that could be either loved or hated. You tell us which.

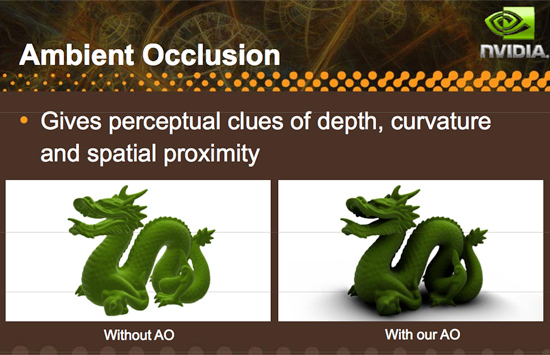

Introducing driver enabled Ambient Occlusion.

What is ambient occlusion, you ask? Look into a corner, around trim, or anywhere that is concave in general. These areas will be a bit darker than the surrounding areas (depending on the depth and other factors), and NVIDIA has included a way to simulate this effect in its 185 series driver. Here is an example of what AO can do:

Here's an example of what AO generally looks like in games:

As with other driver-enabled features, this one significantly impacts performance and may not work in every game or at every resolution. Whether gamers like ambient occlusion will depend on the visual impact it has in a specific game and on whether performance remains acceptable. There are already games that make use of ambient occlusion natively, and there are some games in which NVIDIA hasn't been able to implement AO.

There are different methods for rendering an ambient occlusion effect, and NVIDIA implements a technique called Horizon Based Ambient Occlusion (HBAO for short). The advantage is that this method is likely very highly optimized to run well on NVIDIA hardware; on the downside, developers give up control over the ultimate quality and technique used for AO if they leave it to NVIDIA to handle. On top of that, if a developer wants to guarantee that the feature works for everyone, they would need to implement it themselves, as AMD doesn't offer a parallel solution in their drivers (in spite of the fact that AMD hardware is easily capable of running AO shaders).
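To make the "horizon" idea concrete, here is a toy sketch in one dimension: for each point on a height profile, we march left and right, record the highest elevation angle that nearby geometry reaches (the horizon), and treat a higher horizon as more blocked ambient light, so points near the base of a wall come out darker, just like the corners described above. This is not NVIDIA's HBAO, which runs as a pixel shader over the depth buffer. It assumes a 1D profile, a surface normal pointing straight up, and no distance falloff; as we understand the published technique, it also subtracts a tangent angle and weights samples by distance, both omitted here. The function `horizon_ao` and its parameters are hypothetical.

```python
import math

def horizon_ao(heights, spacing=1.0, radius=4):
    """Ambient visibility in [0, 1] for each sample of a 1D height profile.

    Simplified horizon-based AO (hypothetical sketch): assumes an upward normal,
    a flat tangent plane, and no distance falloff.
    """
    n = len(heights)
    visibility = []
    for i in range(n):
        occlusion = 0.0
        for direction in (-1, 1):  # the only two marching directions in 1D
            horizon = 0.0  # geometry below the horizontal never occludes
            for k in range(1, radius + 1):
                j = i + direction * k
                if not 0 <= j < n:
                    break  # ran off the edge of the profile
                # Elevation angle from point i up to the sample k steps away.
                angle = math.atan2(heights[j] - heights[i], k * spacing)
                horizon = max(horizon, angle)
            occlusion += math.sin(horizon)  # higher horizon blocks more light
        visibility.append(1.0 - occlusion / 2)  # average over both directions
    return visibility

# A flat floor meeting a wall of height 3: visibility drops toward the corner.
profile = [0, 0, 0, 0, 0, 0, 3, 3]
print([round(v, 2) for v in horizon_ao(profile)])
```

A driver can apply this kind of effect without game support because a screen-space version only needs the depth buffer, which is also why it can't always be made to work correctly in every game.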

We haven't done extensive testing of this feature yet, for either quality or performance. Only time will tell whether this addition ends up being a gimmick or really hits home with gamers. And if more developers create games that natively support the effect, we wouldn't even need the option. But it is always nice to have something new and unique to play around with, and we are happy to see NVIDIA pushing effects in games forward by all means possible, even to the point of including effects like this in their driver.

In our opinion, lighting effects like this belong in engine and game code rather than the driver, but until that happens it's always great to have an alternative. We wouldn't think it a bad idea if AMD picked up on this and did it too, but whether it is more worthwhile to do this or to spend that energy encouraging developers to adopt this and comparable techniques for more complex writing is totally up to AMD. And we wouldn't fault them either way.

294 Comments


SiliconDoc - Monday, April 6, 2009

So he just told you why he's getting NVidia, and the little red fanboy in you couldn't stand it. You recommend taking steps that void the warranty, but why should you care - then you blabber about a 4850, but he already noted the 260 - and finally you let the last word in you could barely bring your red rooster self to say GTS250 - as in you might as well get it.... if you have to - 'cept he was already looking above that. You red ragers just have to spew about your crap card to people who already seemingly decided they don't want it. Why is that?

You gonna offer him red cuda, red physx, red vreveal, red badaboom, red forced game profiles, red forced dual gpu ? ANY OF THAT ?

NO -

you tell him to HACK a red piece of crap to make it reasonable. LOL

What a shame.

Hey, maybe he can hack the buzzing fan on it, too?

helldrell666 - Monday, April 6, 2009

What the hell are you talking about? I'm not a fanboy of any of those big companies that don't give a shit about me.

I have used both nvidia and ATI, and both produce great graphics cards.

I had an 8800 GTX before my current 4870, and I had an Athlon X2 6400+ before my current Core i7 920. I go with whoever has the product with the best price.

SiliconDoc - Monday, April 6, 2009

No, you are a fanboy and you are exposed. Deal with it.

helldrell666 - Monday, April 6, 2009

It's you who is the fanboy. You're trying hard to show the advantages of nvidia cards over the ATI cards, and guess what, you fail. And I don't give a rat's ass about CUDA. I'm a gamer, and what matters to me is the card's gaming performance and the features that enhance my gaming experience (which is what 99.9% of those who buy these cards care about), like PhysX, which is a true con for nvidia cards. But PhysX isn't supported in most games, and the PhysX effects that nvidia is trying to promote could easily be processed on a decent sub-$300 CPU by improving on-CPU PhysX performance via a driver/software that could utilize all CPU cores and use the CPU's processing power in a better way.

Ohh wait. ATI has DX10.1 and tessellation, which nvidia doesn't have, and thanks to your nvidia, we didn't get many games that support DX10.1, and we didn't get any game that supports tessellation, which is a geometry accelerating technique that can accelerate geometry processing by up to 4 times using the same amount of floating point (aka processing) power. If tessellation, which has been included in ATI cards' APIs since the HD 2000 series days, was used in those demanding games like Crysis and STALKER, we would've been able to play them using sub-$300 graphics solutions. Put aside the DX10.1 features like the AA enhancement..... and the GRS color detector that allows the GPU to use a more accurate color degree for a texel using a more advanced texturing algorithm compared to the tri/bi-linear buffering technique used in nvidia's illegal uncompleted DX10 API.

SiliconDoc - Monday, April 6, 2009

rofl - the long list of your imaginary hatreds against nvidia - you FREAK fanboy. The problem being, that just like some lying retard, you blame ati's epic failure with tessellation on who? ROFL NVIDIA.

Sorry bub, you're another one who is INSANE.

You won't face what IS - you want something that isn't - wasn't - or won't be - so keep on WHINING forever, looney tuner.

In the meantime, nvidia users outnumber you, enjoy the large amount of added benefits, and don't have a 2 billion dollar loss hanging over their heads - with a company that might collapse in bankruptcy - and lose support of the already problematic drivers.

You bought the wrong thing, and now you have a hundred could-bes, would-bes, ifs and ands, and garbage can complaints that have nothing to do with reality and how it actually is.

Fantasy red rooster fanboy.

LOL - it's amazing.

helldrell666 - Tuesday, April 7, 2009

Freak fanboy......? Hatred....? Are you serious... or....? I don't hate any company because I have no reason to. I blame nvidia for not allowing those few game development companies to include those great features in these very few modern demanding games.

Accelerating physx processing using the gpu is a great idea, but is it worth it?

Is the CPU really unable to keep up with the GPU in games due to its slow PhysX processing ability?

Are those primitive PhysX effects really that heavy on a modern quad-core CPU?

These are questions that you should ask yourself before trolling for the idea.

And I have to remind you that ATI has coded Havok physics effects in the OpenCL programming language, which, in case you don't know, is a standard language, compared to nvidia CUDA, which is based on some kind of C programming code.

Talking about the drivers, I haven't had problems with my 4870 on MY Vista 64-bit OS, compared to my old 8800 GTX that almost gave me a heart attack.

As for your beloved nvidia, we need nvidia as much as we need AMD and INTEL to keep the competition alive, which in turn will keep innovations going and adjust the prices.

Ohh... and thanx to the red camp, we can get a decent graphics card for less than $300. So have some respect for them.

As for you, I wonder how old you are, cuz you don't seem to have mature logic.

tamalero - Monday, April 20, 2009

Don't worry, this guy clearly has mental issues, an nvidia paid troll.

SiliconDoc - Tuesday, April 7, 2009

Yeah, sure. Just like any red rooster, you never had a problem with ati drivers, but nvidia drove you nearly to a heart attack, but I have problems with logic or detecting a red rooster fanboy blabberer! ROFL

Dude, you keep digging your own hole deeper.

Then you try the immature assault - another losing proposition.

YOU'RE WHINING about nvidia and yeah, you do blame them, and after going on like a lunatic about wanting offload to the cpu, you admit it might not work - yes I read your asinine quadcore offload blabberings, too, and your bloody ragging about nvidia "not letting" your insane fantasy occur - purportedly to advantage ati (not like you're banking on intel graphics).

So red rooster, keep crowing, and never face the reality that is, and carry that chip for all your imaginary grievances of what should be, or what you say could have been.

In the meantime, know you are marked, and I know who and what you are, and I'm sure you'll have further whining and wailing about what nvidia did or didn't do for ati. LOL

roflmao

Logic ? ROFLMAO

" I, almost had a heart attack " said the sissy. lol " But I'm an objective person with logic ". roflmao

Jamahl - Monday, April 6, 2009

Wow, what a stunning pile of crap I've just read. ATI's can fold; guess what, it's downloaded via CCC.

ATI has open source cloth physx, Stream and Avivo, which piss all over that trash nvidia calls 'PureVideo' or whatever.

But best of all, you can ACTUALLY BUY A 4890, whereas the 275 only exists in Nvidia fanbois' tiny little green-with-envy minds.

The0ne - Tuesday, April 7, 2009

If you haven't noticed, SiliconDoc is basically ignored in all his responses. Be wise and do the same. He'll eventually kill himself :)