ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia; they were the guys who wanted to sell you another card to put in your system to accelerate game physics: the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. Secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If a developer wants support there are fees, of course, but it’s still a great way of cutting down development costs. The physics engine in a game is responsible for modeling all Newtonian forces within the game; it determines how objects collide, how gravity works, etc.
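To make "modeling Newtonian forces" a bit more concrete, here is a toy sketch of the kind of per-frame work a physics engine does: apply gravity, integrate velocity and position, and resolve a collision. This is not the PhysX API and not how PhysX is implemented; the `Body` class, the `step` and `simulate` functions, and the `restitution` parameter are hypothetical, and a real engine handles 3D rigid bodies, many-object collision detection and constraint solving. GPU-accelerated effects essentially repeat updates like this for thousands of particles or cloth vertices at once.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the y axis (toy value, hypothetical setup)


@dataclass
class Body:
    """Hypothetical 1D body: height above a ground plane and vertical speed."""
    y: float                   # height above the ground plane (m)
    vy: float                  # vertical velocity (m/s)
    restitution: float = 0.5   # fraction of speed kept after a bounce


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds using semi-implicit Euler integration."""
    # Gravity updates the velocity first; the new velocity then moves the body.
    body.vy += GRAVITY * dt
    body.y += body.vy * dt
    # Collision with the ground plane at y = 0: clamp to the surface and bounce.
    if body.y < 0.0:
        body.y = 0.0
        body.vy = -body.vy * body.restitution


def simulate(body: Body, dt: float, steps: int) -> Body:
    """Run a fixed number of fixed-size time steps, as a game loop would."""
    for _ in range(steps):
        step(body, dt)
    return body


if __name__ == "__main__":
    ball = Body(y=10.0, vy=0.0)
    simulate(ball, dt=1 / 60, steps=600)  # ten seconds at 60 steps per second
    print(f"height after 10 s: {ball.y:.3f} m")
```

Semi-implicit Euler is used here only because it is about the simplest integrator that stays stable at game-style fixed time steps; production engines use more elaborate solvers.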

If developers wanted to, they could enable PPU-accelerated physics in their games and do some cool effects. Very few wanted to, because there was no real installed base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before still existed: developers didn’t have to include GPU-accelerated physics, and most don’t because they don’t like alienating their non-NVIDIA users. It’s all about reaching the largest audience, and not everyone can run GPU-accelerated PhysX, so most developers don’t use that aspect of the engine.

Then we have NVIDIA publishing slides like this:

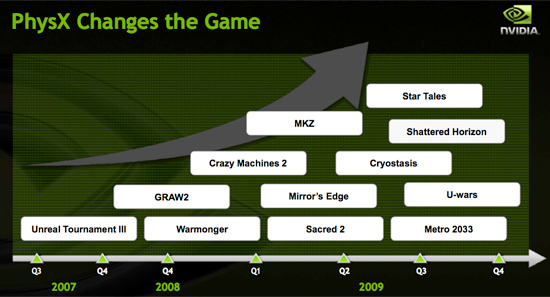

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU-accelerated PhysX support today. That’s 12 titles, three of which are big ones; as for most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU-accelerated PhysX is used in these titles and how poorly ATI performs when GPU-accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims; it was about time we did the same for these other claims. Shall we?

Our goal was simple: we wanted to know if the GPU-accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally, I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice; I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments


SiliconDoc - Tuesday, April 7, 2009

Another red rooster who cannot argue with the facts and the truth, and doesn't want them known. Perhaps you'd notice, I didn't comment right away when the STORIED review came out, you FOOL.

I came days later, and made my comments after you had your bs fest of lies, so I don't expect a lot of responders, you DUMMY.

But you're here, and your response is calling for DEATH.

Now, if anyone needs to be banned, YOU DO.

Furthermore, I really don't care if you're here, and have enjoyed some of your posts, but the fact remains: where I have absolutely FACTUALLY refuted your BS in some of your posts, you have no response other than your own personal rage.

I'll be glad to see how you can defend yourself, but you obviously cannot.

Go ahead, there are 22 pages, and I've pointed out your lies several times. Have at it. Good luck; just calling for DEATH and spewing "ban him!" while carrying your torch of lies is just what I expect from someone who doesn't care what bs they spew.

You already claimed you can't understand - LOL - of course you can't, you'd have to straighten out yourself and your lies then.

Good luck doing that.

SiliconDoc - Monday, April 6, 2009

LOL - the folding was crap forever on ati, and now it's slower. We know the release date for both cards, and the nvidia is already listed on the egg, dude.

When you're a raging red rooster, nothing matters to you but lying for the 2 billion dollar loser - ati.

sidk47 - Friday, April 3, 2009

You cannot argue with facts, and the fact of the matter is that you can't help the world find a cure for cancer or Alzheimer's by buying an ATI! So those of you with an Internet connection should buy an NVidia and fold@home all the time to help make the world a better place!

Take that ATI and your associated fanboys!

x86 64 - Sunday, April 5, 2009

Folding at home is a total waste and is just an excuse to be smug and think you're special, so there to both of you. "Oh I'm going to save the world by buying overpriced hardware and letting some university use it for studying the human genome. I'm such a humanitarian."

Please, you can justify your over indulgence any way you want but it still doesn't cover up the fact that you're trying to justify sitting on your asses instead of doing some real community work to help change the world.

Folding@home = Too fat and too lazy to really make an effort.

SiliconDoc - Monday, April 6, 2009

Uhh, dude, they're doing it at college, on like triple TESLA machines with the "supercomputer" motherboards - so you know, go get an education and start whining about unbelievable game framerates - that's what's really going on.

Professor CUDA machine checker: "What happened?"

Gamer students: "Oh, uhh, well it crashed again, it was a Crysis, I mean uh, no crisis, last night and it took us about 5 hours to reset the awesome TESLA cards. We'll come in tonight to keep an eye on it, and clean up the pizza boxes and lock up again, professor."

"Very well."

WHO LOVES THE EDUCATION OF AMERICA? !!!

hahahahaha

LeonRa - Saturday, April 4, 2009

Well, since you cannot argue with facts, it's a fact you are a stupid fanboy who doesn't know anything! Check your facts before you post something like that. It is a fact that you can do f@h with an ATI card, as I have been doing it for some time now. So STFU and go spill your hatred somewhere else!

SiliconDoc - Tuesday, April 7, 2009

You're not being honest there. A while back ati either couldn't do it at all (no port) or it was so pathetic they had to make a new port - I know they did the latter - and as far as having a long stretch where it wasn't available, or just not used much since it was so pathetically slow in comparison, the fella has the right idea. Furthermore, unless something has recently changed significantly, the ati port is still WAY slower than the Nvidia for folding.

So anyway, nice try, but telling the truth might actually be something the red rooster crew should start practicing... or perhaps not, considering lying a whole heckuva lot might turn those 2 billion dollar ati losses into "sales" that make "overall a profit" a reality...

On the other hand, if people continuously notice the lying by the red fans, they might gravitate to the competition, for obvious reasons.

So, honesty, or more bs ? I think I know what you'll choose.

marraco - Friday, April 3, 2009

I hope to see benchmarks with ATI in charge of graphics and a GeForce in charge of PhysX... kind of SLI/CrossFire between ATIs and GeForces :)

A value-add of the GeForces is that, once you buy a new card, the old one can offload physics from the new card. Nice. I hate wasting old hardware.

On the other side, most of the games on NVIDIA's PhysX list don't really work with GPGPU PhysX, only with the old AGEIA cards.

Sadly, Crysis and Far Cry don't use PhysX, only Havok. And AMD still doesn't support it in hardware.

spinportal - Friday, April 3, 2009

No mention of the death of the HD 4850 X2, as the HD 4890 trashes it on power consumption, price, availability, speed and OC-ability. No mention of the advantage of DX10.1 and the games available. Hey, even bad news is good news sometimes by spotlighting. What is really missing is the bang-for-buck angle (bucks spent per performance increase), and talk about price depression for the HD 4870 1GB model by $10 to $15 with $50 step increments.

4850 (125)[20.9] 4870 (185)[27.9] 4890 (235)[31.7]

4870X2 (400)[35.0]

Nvidia is cramping its own style:

250 (150)[21.8] 260-216-55 (180)[27] 275 (250?)[31.3]

280 (290)[30.9] 285 (340)[32.8]

The GTX 280 is dead now, overpriced for those trying to sneak into SLI. The GTX 260 line overlaps itself: the 55nm Core 216 is the one you'd want, but Joe Consumer might mistakenly get one of the two earlier versions as vendors clean out old inventory. The GTX 285's price is not justified, but more power to nVidia if they get the consumer's buck.

Happily, judging by the low temps, the dual-slot blowback cooler is venting hot air properly, so the vendors are finally manufacturing cards with common sense.

Too bad we have gone the way of power-hungry beastly cards needing two 6-pin connectors.

Also, too bad the effects of AF and the 0xAA, 2xAA, 4xAA and 8xMSAA modes are not investigated. It would be interesting to see how saturated the units get as AF and AA get bumped, and what the best modes are for nVidia and AMD.

Oh, nice blurb for nVidia's shadow enhancement, but ATi/AMD's tessellation enhancement is just as much a hit-or-miss feature. Will AMD have a tech edge when DX11 tessellation cometh?

SiliconDoc - Monday, April 6, 2009

Hmm, that said, Derek might be crying, since he couldn't stop crowing about that 4850 X2 in the last review - oh boy, you know - I guess he had the heads up and ati told him what card he needed to help push... You know how things are.

Anyway, good observation.