What are Double Buffering, vsync and Triple Buffering?

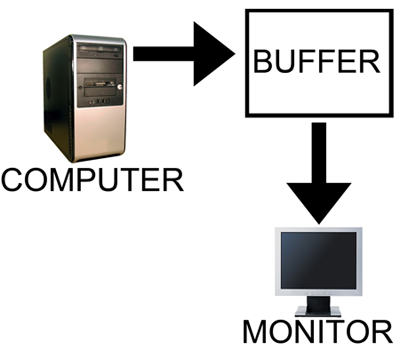

When a computer needs to display something on a monitor, it draws a picture of what the screen is supposed to look like and sends this picture (which we will call a buffer) out to the monitor. In the old days there was only one buffer and it was continually being both drawn to and sent to the monitor. There are some advantages to this approach, but there are also very large drawbacks. Most notably, when objects on the display were updated, they would often flicker.

The computer draws in as the contents are sent out.

All illustrations courtesy Laura Wilson.

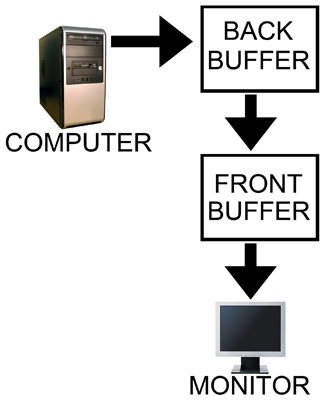

To combat the problems that come from reading a buffer while drawing to it, double buffering, at a minimum, is employed. The idea behind double buffering is that the computer only draws to one buffer (called the "back" buffer) and sends the other buffer (called the "front" buffer) to the screen. After the computer finishes drawing the back buffer, the program doing the drawing does something called a buffer "swap." This swap doesn't move anything; it only changes the names of the two buffers: the front buffer becomes the back buffer and the back buffer becomes the front buffer.

Computer draws to the back, monitor is sent the front.

After a buffer swap, the software can start drawing to the new back buffer and the computer sends the new front buffer to the monitor until the next buffer swap happens. And all is well. Well, almost all anyway.
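To make the "swap only changes the names" point concrete, here is a minimal Python sketch. The `DoubleBuffer` class and its fields are hypothetical names chosen for illustration, and a `bytearray` stands in for real video memory; actual graphics drivers do this in hardware or through an API, not like this.

```python
class DoubleBuffer:
    """Two pixel buffers; swap() exchanges their roles without copying pixels."""

    def __init__(self, width, height):
        self.front = bytearray(width * height)  # sent to the monitor
        self.back = bytearray(width * height)   # drawn to by the software

    def swap(self):
        # Only the references are exchanged; no pixel data moves.
        self.front, self.back = self.back, self.front


buffers = DoubleBuffer(4, 2)
old_front, old_back = buffers.front, buffers.back

buffers.back[0] = 255   # draw one pixel into the back buffer
buffers.swap()

# The buffer we drew into is now the front buffer, and vice versa.
print(buffers.front is old_back, buffers.back is old_front)  # True True
print(buffers.front[0])  # 255
```

Because the swap is just an exchange of references, its cost does not depend on the resolution of the buffers.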

In this form of double buffering, a swap can happen anytime. That means that while the computer is sending data to the monitor, the swap can occur. When this happens, the rest of the screen is drawn according to what the new front buffer contains. If the new front buffer is different enough from the old front buffer, a visual artifact known as "tearing" can be seen. This type of problem can be seen often in high framerate FPS games when whipping around a corner as fast as possible. Because of the quick motion, every frame is very different, so when a swap happens during drawing the discrepancy is large and can be distracting.
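As a rough illustration of where a tear lands, the sketch below estimates which row the monitor has reached when a swap occurs. It assumes the image is sent top to bottom at a uniform rate over the whole refresh interval and ignores the vertical blanking period, which real displays have; `tear_row` and its parameters are hypothetical names.

```python
def tear_row(swap_time_ms, refresh_hz=60.0, rows=1080):
    """Estimate the row at which a tear appears for a swap at swap_time_ms.

    Assumes scanout proceeds top to bottom at a constant rate and that
    a refresh starts at time 0 (a simplification: blanking is ignored).
    """
    interval_ms = 1000.0 / refresh_hz
    # Fraction of the current refresh that has already been sent.
    phase = (swap_time_ms % interval_ms) / interval_ms
    return int(phase * rows)


# A swap exactly at the start of a refresh does not tear at all.
print(tear_row(0.0))  # 0

# A swap 25 ms in lands about halfway through the second refresh,
# so the tear shows up near the middle of a 1080-row screen.
print(tear_row(25.0))
```

Everything above the returned row comes from the old front buffer and everything below it from the new one.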

The most common approach to combat tearing is to wait to swap buffers until the monitor is ready for another image. The monitor is ready after it has fully drawn what was sent to it and the next vertical refresh cycle is about to start. Synchronizing buffer swaps with the vertical refresh is called vsync.

While enabling vsync does fix tearing, it also sets the internal framerate of the game to, at most, the refresh rate of the monitor (typically 60Hz for most LCD panels). This can hurt performance even if the game doesn't run at 60 frames per second, as there will still be artificial delays added to effect synchronization. Performance can be cut nearly in half in cases where every frame takes just a little longer than 16.67 ms (1/60th of a second). In such a case, frame rate would drop to 30 FPS despite the fact that the game should run at just under 60 FPS. The elimination of tearing and consistency of framerate, however, do contribute to an added smoothness that double buffering without vsync just can't deliver.
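The drop from just under 60 FPS to 30 FPS follows from simple arithmetic: with double buffering and vsync, a frame that misses one refresh has to wait for the next. The sketch below works this out under the simplifying assumption that every frame takes the same time to render and that rendering stalls until the swap; `vsync_fps` is a hypothetical helper name.

```python
import math


def vsync_fps(render_ms, refresh_hz=60.0):
    """Effective frame rate with double buffering and vsync.

    Assumes a constant render time and that the software cannot start
    the next frame until the swap at the next vertical refresh.
    """
    interval_ms = 1000.0 / refresh_hz
    # Each frame occupies a whole number of refresh intervals.
    refreshes_per_frame = math.ceil(render_ms / interval_ms)
    return refresh_hz / refreshes_per_frame


print(vsync_fps(16.0))  # 60.0  (fits inside one 16.67 ms refresh)
print(vsync_fps(17.0))  # 30.0  (just misses, so it waits for the second)
print(vsync_fps(34.0))  # 20.0  (just misses the second, waits for the third)
```

Without vsync, the 17 ms case would run at roughly 58.8 FPS (1000 / 17), which is the "just under 60 FPS" the game could otherwise reach.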

Input lag also becomes more of an issue with vsync enabled. This is because the artificial delay introduced increases the difference between when something actually happened (when the frame was drawn) and when it gets displayed on screen. Input lag always exists (it is impossible to instantaneously draw what is currently happening to the screen), but the trick is to minimize it.

Our options with double buffering are a choice between possible visual problems like tearing without vsync and, with vsync enabled, an artificial delay that can negatively affect performance and increase input lag. But not to worry, there is an option that combines the best of both worlds with no sacrifice in quality or actual performance. That option is triple buffering.

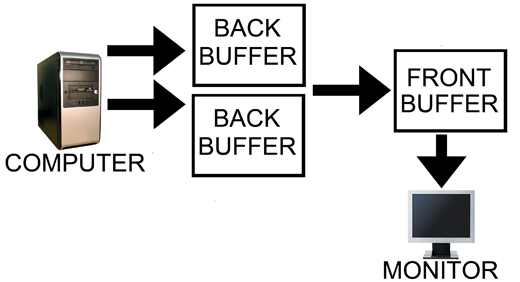

Computer has two back buffers to bounce between while the monitor is sent the front buffer.

The name gives a lot away: triple buffering uses three buffers instead of two. This additional buffer gives the computer enough space to keep a buffer locked while it is being sent to the monitor (to avoid tearing) while also not preventing the software from drawing as fast as it possibly can (even with one locked buffer there are still two that the software can bounce back and forth between). The software draws back and forth between the two back buffers and (at best) once every refresh the front buffer is swapped for the back buffer containing the most recently completed fully rendered frame. This does take up some extra space in memory on the graphics card (about 15 to 25MB), but with modern graphics cards carrying at least 512MB on board this extra space is no longer a real issue.
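The bookkeeping described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any driver implements it: `TripleBuffer`, `finish_frame` and `vertical_refresh` are hypothetical names, and short strings stand in for rendered frames.

```python
class TripleBuffer:
    """One front buffer and two back buffers, tracked by index (0, 1, 2)."""

    def __init__(self):
        self.buffers = [None, None, None]
        self.front = 0      # buffer being sent to the monitor (locked)
        self.drawing = 1    # back buffer the software is rendering into
        self.ready = None   # back buffer holding the newest completed frame

    def finish_frame(self, frame):
        """The software finished a frame; keep drawing in the other back buffer."""
        self.buffers[self.drawing] = frame
        self.ready = self.drawing
        # The three indices sum to 3, so this picks the buffer that is
        # neither the locked front buffer nor the one just completed.
        self.drawing = 3 - self.front - self.ready

    def vertical_refresh(self):
        """Once per refresh: show the newest completed frame, if there is one."""
        if self.ready is not None:
            self.front, self.ready = self.ready, None
        return self.buffers[self.front]


tb = TripleBuffer()
for frame in ["A", "B", "C"]:   # three frames finish before the first refresh
    tb.finish_frame(frame)
print(tb.vertical_refresh())    # C  (A and B were never displayed)

for frame in ["D", "E"]:
    tb.finish_frame(frame)
print(tb.vertical_refresh())    # E
print(tb.vertical_refresh())    # E  (nothing new, the front buffer is kept)
```

Note that `finish_frame` never has to wait: there is always a free back buffer, which is what lets the software keep rendering at full speed while the front buffer stays locked.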

In other words, with triple buffering we get the same high actual performance and similarly low input lag of a vsync disabled setup while achieving the visual quality and smoothness of leaving vsync enabled.

Now, it is important to note that when you look at the "frame rate" of a triple buffered game, you will not see the actual "performance." This is because frame counters like FRAPS only count the number of times the front buffer (the one currently being sent to the monitor) is swapped out. In double buffering, this happens with every frame, even if the next frame is done after the monitor has finished receiving and drawing the current frame (meaning that it might not be displayed at all if another frame is completed before the next refresh). With triple buffering, front buffer swaps happen at most once per vsync.
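A small simulation shows the gap between what is rendered and what a swap-counting frame counter would report. It assumes a constant, whole-millisecond render time and a counter that counts front buffer swaps as described above; `count_frames` is a hypothetical helper, and integer arithmetic is used to avoid rounding surprises.

```python
def count_frames(render_ms, refresh_hz=60, duration_ms=1000):
    """Return (frames rendered, front buffer swaps) under triple buffering.

    Assumes every frame takes exactly render_ms (an integer) to render
    and that rendering never stalls.
    """
    rendered = duration_ms // render_ms
    swaps = 0
    last_shown = 0
    refreshes = refresh_hz * duration_ms // 1000
    for n in range(1, refreshes + 1):
        # Frames completed by the n-th refresh, at time n * 1000 / refresh_hz ms.
        completed = (n * 1000) // (refresh_hz * render_ms)
        if completed > last_shown:  # a newer frame is waiting, so swap it in
            swaps += 1
            last_shown = completed
    return rendered, swaps


# A game rendering a frame every 5 ms on a 60 Hz display:
print(count_frames(5))  # (200, 60)
```

In this example the software renders 200 frames in a second, but a counter that only sees front buffer swaps would report 60.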

The software is still drawing the entire time behind the scenes on the two back buffers when triple buffering. This means that when the front buffer swap happens, unlike with double buffering and vsync, we don't have artificial delay. And unlike with double buffering without vsync, once we start sending a fully rendered frame to the monitor, we don't switch to another frame in the middle.

This last point does bring up the one issue with triple buffering. When double buffering without vsync, a frame that completes just a tiny bit after the refresh will tear near the top, and the rest of the screen will carry a bit less lag for most of that refresh than with triple buffering, which would have to finish drawing the frame it had already started. Even in this case, though, at least part of the frame will be exactly the same between the double buffered and triple buffered output, and the delay won't be significant, nor will it have any carryover impact on future frames like enabling vsync on double buffering does. And even if you count this as an advantage of double buffering without vsync, the advantage only appears below a potential tear.

Let's help bring the idea home with an example comparison of rendering using each of these three methods.

184 Comments

rna - Sunday, June 28, 2009

From my own fiddling around, Left 4 Dead, "V-sync with Triple Buffering" = Unbearable input lag.

Doom 3 with Triple Buffering forced on in the nVidia control panel and v-sync turned on feels as responsive as with v-sync disabled.

DerekWilson - Wednesday, July 1, 2009

I still haven't confirmed with the developer, but I now think the "triple buffering" that L4D uses is actually a flip queue with 1 frame render ahead (two back buffers; three total buffers).

Doom 3 with triple buffering forced in the nvidia control panel with vsync will work exactly as described in this article ...

To double check, I asked NVIDIA for specifics -- triple buffering as forced in their control panel (which only works for OpenGL games) performs exactly the way this article describes that it should.

DerekWilson - Sunday, June 28, 2009

I will do my best to develop a quantitative input lag test. If I can achieve that goal then I will test this and other reported issues.

Dospac - Sunday, June 28, 2009

It may be due to Crossfire or ATI's drivers, but enabling vsync and forcing triple buffering with D3Doverrider wrecks the input responsiveness on my system (Vista64 and 3870X2).

I used to always play with Vsync and triple buffering when I was on a 120Hz CRT. With a 60Hz LCD, shooters are unplayable. This article is giving inaccurate advice when it states that input lag is not increased.

DerekWilson - Sunday, June 28, 2009

multiGPU options and triple buffering do not play nice together at this point in time.

bobjones32 - Sunday, June 28, 2009

I just fired up Left 4 Dead and tested the various vsync options:

-vsync disabled

-vsync enabled, double buffering

-vsync enabled, triple buffering

-vsync disabled in game, forced through D3DOverrider with triple buffering

My observations (note - I can retain a perfect 60fps on my 60Hz monitor):

1) triple-buffered vsync still had a noticeable amount of mouse lag

2) double-buffered vsync seemed to have *less* lag, oddly enough

3) There was some odd hitching that took place every second with vsync on, regardless of triple buffering settings.

Oddly enough, mouse lag in Half-Life 2: Episode Two (with either double buffering or triple buffering) was much less noticeable, but that hitching every second was still there.

Derek - any idea why this might be the case?

Scalarscience - Sunday, June 28, 2009

Are you using Crossfire, SLI or a dual gpu card?

bobjones32 - Sunday, June 28, 2009

No, single-card 4870 setup.

DerekWilson - Wednesday, July 1, 2009

I have no idea why you would see the hitching issue.

I do believe my guess about how L4D does it was wrong though: I now think they use a flip queue with three total buffers rather than the technique described in this article.

Ruud van Gaal - Friday, May 25, 2012

One thing I had in my own game with a 1 second hitch was exposure calculation. Mipmapping (through the gfxcard) a single frame down to 1 pixel actually took quite a bit of time and was noticeable by a dip in the framerate. Turning off this auto-exposure mipmapping solved it (for me).