Reflexes and Input Generation

Human Reaction Time

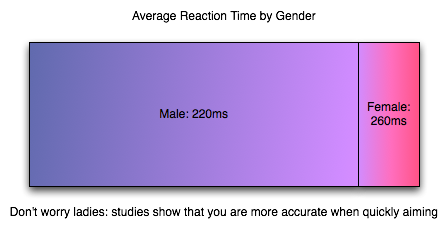

The impact of input lag is compounded by what goes on before we even react. As soon as an image requiring a response hits your eyes, it will take somewhere between 150ms and 300ms to translate that into action. Average human response time to visual stimulus is about 200ms (0.2 seconds) for young adults, which is a long time compared to how quickly games can respond to input. But with this built-in handicap, when fast response to what's happening on screen is required, it is helpful to claim every advantage possible (especially for relative geezers like us).

Human response time is mitigated by the fact that we are also capable of learning, anticipation and extrapolation. In "practicing," a.k.a. playing a game, we can learn to predict future frames from current state for very small time slices to compensate for our response time. Our previous responses to input and the results that followed can also factor in to our future responses. This is part of the learning curve, especially for FPS games. When input lag is below a reasonable threshold, we are able to compensate without issue (and, in fact, do not perceive the input lag at all).

The larger input lag gets, the harder it gets to do something like aim at a moving target. Our expectation of the effect our input should have is different from what we see. This gets into something that combines reaction time and proprioception (reception of self produced stimulus). I'm not a psychologist, but I would love to see some studies done on how much input lag people can compensate for, where it starts to be uncomfortable (where it just "feels" wrong) and when it becomes an obviously visible phenomenon. In digging around the net, I've seen a few game developers conjecture that the threshold is about 100 milliseconds, but I haven't found any actual data on the subject. At the same time, 100 milliseconds (or maybe something like 1/2 reaction time?) seems a pretty reasonable hypothesis to me.

The Input Pipeline

As it is key in most games, we'll examine the case of the mouse when it comes to input. As soon as a mouse is moved, we have a delay. The mouse must begin by detecting this movement. Sorting out how responsive a mouse is these days is incredibly clouded by horrendous terminology. As understanding how a mouse works is important in grokking its impact on input lag, we'll dissect Logitech's specs and try to get some good information on exactly what's going on.

There are four key numbers in the reported specifications of Logitech mice we'll look at: megapixels/second, maximum speed, DPI, and reports/second. For the Logitech G9x high end gaming mouse, these are: 9 MP/s, 150 inches/second, 5000 DPI, and 1000 reports/second. Other gaming and good quality mice can do 500 to 1000 reports/second and have lower DPI and MP/s stats.

The first stat, megapixels/second, is important in how fast the mouse sensor itself can collect movement data. Optical and laser mice detect movement by taking pictures of the surface they are on and comparing the difference in images many times every second. To really understand how fast the mouse takes pictures (and thus how fast it can detect and calculate movement in units called "counts"), we would need to know how many pixels per frame the image is. Our guess is that it can't be larger than 17x17 based on its maximum speed rating (though it might be more like 12x12 if it needs to generate two frames for every count rather than reusing frames from the previous calculation). It'd be great if they listed this data anywhere, but we are left guessing based on other stats at this point.

Next up is DPI, or dots per inch: 5000 for the G9x. DPI is sort of a misrepresentation, as the real specification should be in CPI (counts per inch). As it is, the number can be considered maximum DPI if each count moves the cursor one pixel (or dot). Under MS Windows, with no ballistics applied at the default pointer speed, DPI = CPI. Decreasing pointer speed means moving one dot for more than one count, and increasing pointer speed means moving more than one dot for every count. Of course with ballistics, talking about DPI as related to the mouse doesn't make any sense: moving the mouse faster or slower changes the number of dots moved per count dynamically. Because of this, we'll talk about CPI for accuracy's sake, and consider that mouse manufacturers intend to use the terms interchangeably (despite the fact that they are not).

CPI is the number of steps the mouse can count within one inch; 1 / CPI inches is the smallest distance the mouse is able to measure as a movement. The full benefit of a high definition mouse is realized when one count is less than or equal to one "dot," which is possible in games (with sensitivity sliders) and in Windows if you decrease your mouse speed (though going to something with an odd cadence could cause problems).

Thus, when you tell your 5000 "DPI" mouse to run at 200 "DPI", it would be nice if it still reported 5000 CPI and allowed the driver to handle scaling the data down (or performing ballistics on raw data). For this example, we would only move the cursor one dot (one unit on the screen) every 25 counts. But the easy way out is to maintain a 1:1 ratio of counts to dots and drop your actual counts per inch down to 200. This provides no accuracy advantage (though with a fixed sensor speed it does increase maximum velocity and acceleration tolerance). And again, it would be helpful if mouse makers could actually tell us what they are doing.
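To make the "one dot every 25 counts" idea concrete, here is a minimal sketch of what driver-side scaling could look like. It is not how any real driver is known to work: the class name `CountScaler` and its parameters are hypothetical, and the sketch assumes the CPI divides evenly by the target DPI. It accumulates raw counts and carries the remainder forward so that no movement is thrown away.

```python
class CountScaler:
    """Hypothetical driver-side scaler: converts raw mouse counts to screen dots."""

    def __init__(self, sensor_cpi: int, target_dpi: int):
        if sensor_cpi % target_dpi != 0:
            raise ValueError("this sketch assumes an integer counts-per-dot ratio")
        self.counts_per_dot = sensor_cpi // target_dpi
        self.remainder = 0  # counts received but not yet converted into a dot

    def feed(self, counts: int) -> int:
        """Accept signed raw counts from one report; return whole dots to move."""
        total = self.remainder + counts
        # int() truncates toward zero, so leftward motion mirrors rightward motion.
        dots = int(total / self.counts_per_dot)
        self.remainder = total - dots * self.counts_per_dot
        return dots


scaler = CountScaler(sensor_cpi=5000, target_dpi=200)
print(scaler.counts_per_dot)                 # 25
print([scaler.feed(10) for _ in range(5)])   # [0, 0, 1, 0, 1]
```

The point of keeping the remainder is that slow, fine movements still add up to cursor motion eventually, which is the accuracy advantage lost when the mouse itself drops to 200 CPI.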

Since the Logitech G9x can do 150 inches/second maximum movement speed at 200 CPI, we know how many counts it must generate per second (though Logitech doesn't make it clear that the maximum speed and acceleration can only happen at the lowest CPI, it only makes sense with the math). The reported specifications indicate that the G9x can do about 30000 counts per second (150 inches in one second at 200 counts per inch). This is consistent with a 9 megapixel/second speed in that such a sensor could collect about 30000 17x17 frames every second based on this data.
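The arithmetic behind these estimates is short enough to check directly. The sketch below uses only the spec-sheet numbers quoted above; the assumptions are ours: that frames are square, and that the sensor needs either one new frame per count (reusing the previous frame) or two fresh frames per count. The function name `max_square_frame_side` is just for illustration.

```python
import math

MAX_SPEED_IN_PER_S = 150          # G9x maximum tracking speed (spec sheet)
MIN_CPI = 200                     # lowest CPI setting, where max speed applies
SENSOR_PIXELS_PER_S = 9_000_000   # 9 megapixels/second (spec sheet)

counts_per_second = MAX_SPEED_IN_PER_S * MIN_CPI


def max_square_frame_side(frames_per_count: int) -> int:
    """Largest N such that N x N frames fit within the sensor's pixel budget."""
    frames_per_second = counts_per_second * frames_per_count
    pixels_per_frame = SENSOR_PIXELS_PER_S // frames_per_second
    return math.isqrt(pixels_per_frame)


print(counts_per_second)          # 30000
print(max_square_frame_side(1))   # 17 (one new frame per count)
print(max_square_frame_side(2))   # 12 (two new frames per count)
```

A budget of 300 pixels per frame gives at most 17x17 (289 pixels), and 150 pixels per frame gives at most 12x12 (144 pixels), which is where the two guesses come from.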

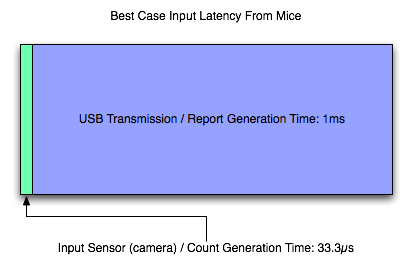

After looking at all that, we can say that our Logitech G9x mouse is capable of detecting movement of between 1/5000th and 1/200th of an inch (depending on the selected CPI) about every 33.3 microseconds (a microsecond is 1/1000th of a millisecond) after the movement happens. That's pretty freaking fast. Other mice can be much slower, but even cutting the speed in half won't hugely affect latency (though it will affect the maximum speed at which the mouse can be moved without problems).
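As a quick sanity check on the 33.3 microsecond figure, and on the claim that halving sensor speed barely matters, here is a small sketch. The helper name `count_interval_us` is hypothetical, and the 15,000 counts/second "half speed" sensor is an invented comparison point, not a real product spec.

```python
def count_interval_us(counts_per_second: float) -> float:
    """Time between consecutive counts, in microseconds."""
    return 1_000_000 / counts_per_second


full_speed = count_interval_us(30_000)   # G9x estimate from the text
half_speed = count_interval_us(15_000)   # hypothetical sensor at half the rate

print(f"{full_speed:.1f} us vs {half_speed:.1f} us")
print(f"extra delay: {(half_speed - full_speed) / 1000:.3f} ms")
```

The extra delay from the slower sensor works out to roughly a thirtieth of a millisecond, far smaller than anything else in the input chain.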

Once the mouse has generated a count (or several) we need to send that data to the computer over USB. Counts are aggregated into groups called reports. USB is limited to 1000 Hz polling, so the 1000 reports/second maximum of the G9x makes sense: USB limits the transmission rate here. For those interested, to actually achieve 150 inches per second at 200 CPI, the mouse would need to be able to send about 30 counts per report at 1000 reports per second. This seems reasonable, but it'd be great if someone with USB engineering experience could give us some feedback and let us know for sure.
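The counts-per-report figure follows from the same numbers. This sketch just does the division; the function name is hypothetical, and the lower report rates are included only for comparison (they are not G9x settings we have verified).

```python
def counts_per_report(speed_in_per_s: float, cpi: int, reports_per_s: int) -> float:
    """Counts that must be packed into each report to keep up with the motion."""
    return speed_in_per_s * cpi / reports_per_s


print(counts_per_report(150, 200, 1000))  # 30.0
print(counts_per_report(150, 200, 500))   # 60.0
print(counts_per_report(150, 200, 125))   # 240.0
```

The slower the report rate, the more counts each report has to carry to avoid losing movement at top speed.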

So, let's say that we've moved our mouse about a couple dozen microseconds before a report is sent. In this case, we've actually got to wait the whole millisecond for that data to be sent to the PC (because the count can't be generated fast enough to be included in the current report). So despite the very fast sensor in the mouse, we are transmission bound and our first "large" delay is on the order of single digit milliseconds. Other mice (like the Logitech G5 I'm using right now) may generate 500 reports per second, while the slowest speed we can expect is 125 reports/second. This can mean a 1ms - 8ms delay in input getting from the motion of the mouse to the computer.
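The delay range can be worked out from the report rate alone. In this sketch the worst case is a count that just misses a report and waits a full interval. The average figure rests on our own assumption that movement starts at a uniformly random moment within the interval, and 125 reports/second is used as the slow case because it corresponds to the 8ms upper bound.

```python
def report_interval_ms(reports_per_second: float) -> float:
    """Time between consecutive reports: the worst-case wait for a new count."""
    return 1000.0 / reports_per_second


for rate in (1000, 500, 125):
    worst = report_interval_ms(rate)
    # Assumption: motion begins at a uniformly random point in the interval,
    # so on average a count waits half an interval before it is reported.
    print(f"{rate} reports/s: worst {worst:.0f} ms, average {worst / 2:.1f} ms")
```

At 1000 reports/second the worst case is 1ms, at 500 it is 2ms, and at 125 it is 8ms.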

Most gamers use halfway decent mice these days, so we can expect that latency is more like 2ms to 4ms for most wired USB mouse users and 1ms for gamers with higher end mice. This delay can't be cut down to anything less than 1ms until USB 2.0 is replaced by something faster. We'll ignore any cable (or any other wire) delay, as this will only add something on the order of nanoseconds to transmission time.

The input lag from a good mouse, on its own, is not perceivable to humans, but remember that this is all part of a larger picture. And now it's on to the software.

85 Comments

aguilpa1 - Thursday, July 16, 2009 - link

Lots of variables that we never consider when trying to do fast gaming. I would be curious how much lag is in a racing sim like GRID or Colin McCrae DIRT. Those are intense graphics games and demand the fastest everything to keep you from going in the ditch. I have noticed I compensate at times by estimating when to start turning before the turn arrives.

crimson117 - Thursday, July 16, 2009 - link

Isn't part of that just intentional skidding / drift in racing games, to mimic the "lag" of rubber catching asphalt at high speeds?

hechacker1 - Thursday, July 16, 2009 - link

You say TF2 is GPU limited, but with my 4850 I find the first core is pegged at 100%. The same applies to my older 3850.

With core i7 920 @ 166x20 = 3320MHz and +166 for Turbo mode, hyper threading on, I see TF2 using 6 cores, The first is pegged out at 100%, the second and third vary from 50-100% depending on the action (32 player server). The other three hover around 25%.

1920x1080. Benq 2400G (bought for its low input-lag)

All highest settings, 4xMSAA, Aniso 8x, Disable vsync, FOV 90

My framerate hovers around 100FPS for most Valve maps.

I use this autoexec to get more threading and higher quality textures:

r_lod 0 mat_picmip -10 cl_new_impact_effects 1 mp_usehwmmodels 1 mp_usehwmvcds 1 cl_burninggibs 1 mat_specular 1 mat_parallaxmap 1 r_threaded_particles 1 r_threaded_renderables 1 cl_ragdoll_collide "1" jpeg_quality 100 r_threaded_client_shadow_manager 1

Most people say TF2 is a CPU limited game. Perhaps that only applies to ATI?

Even without the autoexec.cfg, I see the game use 100% on the first core.

Very good article though. I hope this shuts up the false info that 60fps is too fast for humans to notice.

DerekWilson - Thursday, July 16, 2009 - link

even if a core is pegged at 100% that doesn't mean the game's performance is CPU limited.

at 2560x1600 we were hovering around 110fps but at 1152x864 we were constantly well over 200 fps. As lowering resolution doesn't change the load on the CPU, this clearly indicates that we were GPU bound -- at least at 25x16.

For our 1152x864@120Hz test, we might have been CPU bound, but I don't have the data to know for sure here (I didn't test any near resolutions).

hechacker1 - Thursday, July 16, 2009 - link

Oh yeah.

Flip queue to 0.

ATI A.I. at Low or "standard" (I've read "high" mode can use more CPU?)

Latest driver. Windows 7 x64 7201.

Qiasfah - Thursday, July 16, 2009 - link

In the article you stated that TF2 was GPU limited (and it was in the situations you were testing), however you should find that in battle situations with other active characters present it becomes heavily CPU limited. It would be interesting to see if there was a difference in input lag due to this in the midst of battles rather than sitting idle.

I run an i7 920, and even with multicore enabled (an option which will very commonly double a persons TF2 framerate) i get the same dips in FPS regardless of graphical settings. It would be interesting to see how overclocking affects the performance of this game.

DerekWilson - Thursday, July 16, 2009 - link

More than likely, in TF2, you'll be bottlenecked at the network when it comes to performance ...

But the way Valve does things is with local prediction (running code on the client) and then checking predictions on the server. This should mean that our test shows what you can expect to actually /see/ whether or not what actually /happens/ is the same (if you are very laggy on the network or if there are lots of players or whatever).

codestrong - Thursday, July 16, 2009 - link

"Beyond that, GPU is the next most important faster (factor?), and a mouse that can do at least 500 reports per second is a good idea." Nice work by the way. I've been interested in this since Carmack mentioned input lag during his work on quake live.

DerekWilson - Thursday, July 16, 2009 - link

yeah, i meant factor. thanks.

SiliconDoc - Tuesday, July 21, 2009 - link

Yes, nice article and nice work on getting the job done without a super expensive camera, on an interesting subject for gamers.