Of the GPU and Shading

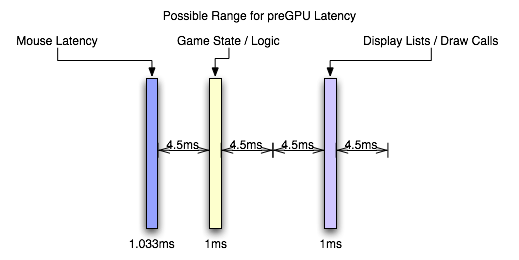

This is my favorite part, really. After the CPU has started sending draw calls to the graphics card, the GPU can begin work on actually rendering the frame containing the input that was generated somewhere in the vicinity of 3ms to 21ms ago depending on the software (and it would be an additional 1ms to 7ms for a slower mouse). Modern, complex games will tend to push up toward the long end of that spectrum, while older games (or games that aren't designed to do a lot of realistic simulation, like twitch shooters) will have a lower latency.

Again, the actual latency during this stage depends greatly on the complexity of the scene and the techniques used in the game.

These days, geometry processing and vertex shading tend to be pretty fast (geometry shading is slower but less frequently used), thanks to features like instancing and the fact that the majority of detail is introduced via the pixel shader (which is really a fragment shader, but we'll dispense with the nitpicking for now). If the use of tessellation catches on after the introduction of DX11, we could see even less actual time spent on geometry, as the current level of detail could be achieved with fewer triangles (or we could improve quality with the same load). This step could still take a millisecond or two with modern techniques.

When it comes to actual fragment generation from the geometry data (called rasterization), the fixed function hardware and early z / z culling techniques used make this step pretty fast (yet this can be the limiting factor in how much geometry a GPU can realistically handle per frame).

Most of our time will likely be spent processing pixel shader programs. This is the step where every pinpoint spot on every triangle that falls behind the area of a screen-space pixel (these pinpoint spots are called fragments) is processed and its color determined. During this step, texture maps are filtered and applied, and work is done on those textures based on things like the fragment's location, the angle of the underlying triangle to the screen, and constants set for the fragment. Lighting is also part of the pixel shading process.

Lighting tends to be one of the heaviest loads in a heavily loaded portion of the pipeline. Realistic lighting can be very GPU intensive. Getting into the specifics is beyond the scope of this article, but this lighting alone can take a good handful of milliseconds for an entire frame. The rest of the pixel shading process will likely also take multiple milliseconds.

After it's all said and done, with the pixel shader as the bottleneck in modern games, we're looking at something like 6ms to 25ms. In fact, the latency of the pixel shaders can hide a lot of the processing time of other parts of the GPU. For instance, pixel shaders can start executing before all the geometry is processed (pixel shaders are kicked off as fragments start coming out of the rasterizer). The color/z hardware (render outputs, render backends or ROPs depending on what you want to call them) can start processing final pixels in the framebuffer while the pixel shader hardware is still working on the majority of the scene. The only real latency that is added by the geometry/vertex processing portion of the pipeline is the latency that happens before the first pixels begin processing (which isn't huge). The only real latency added by the ROPs is the processing time for the last batch of pixels coming out of the pixel shaders (which is usually not huge unless really complicated blending and stencil techniques are used).

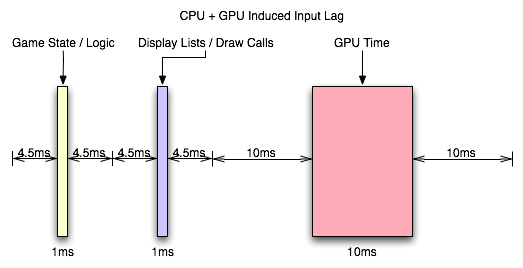

With the pixel shader as the bottleneck, we can expect that the entire GPU pipeline will add somewhere between 10ms and 30ms. This is if we consider that most modern games, at the resolutions people run them, produce something between 33 FPS and 100 FPS.
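The 10ms to 30ms range follows directly from the framerates quoted above: if the GPU is the bottleneck, the time it spends on one frame is roughly the reciprocal of the framerate. A minimal sketch of that conversion (the function names are just illustrative, not from any library):

```python
def frame_time_ms(fps: float) -> float:
    """Time spent per frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps


def fps_from_frame_time(ms: float) -> float:
    """Framerate that corresponds to a given per-frame time in milliseconds."""
    return 1000.0 / ms


# The two ends of the range quoted in the text.
for fps in (33, 100):
    print(f"{fps} FPS -> {frame_time_ms(fps):.1f} ms per frame")
# 33 FPS -> 30.3 ms per frame
# 100 FPS -> 10.0 ms per frame
```

This assumes the GPU really is the limiting stage; in a CPU-limited game the GPU would finish its work in less time than the framerate suggests.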

But wait, you might say, how can our framerate be 33 to 100 FPS if our graphics card latency is between 10ms and 30ms: don't the input and CPU time latencies add to the GPU time to lower framerate?

The answer is no. When we are talking about the total input lag, then yes we do have to add these latencies together to find out how long it has been since our input was gathered. After the GPU, we are up to something between 13ms and 58ms of input lag. But the cool thing is that human response happens in parallel to input gathering which happens in parallel to CPU time spent processing game logic and draw calls (which can happen in parallel to each other on multicore CPUs) which happens in parallel to the GPU rendering frames. There is a sequential path from input to the screen, but we can almost look at this like a heavily pipelined path where each stage operates in parallel on a different upcoming frame.

So we have the GPU rendering the previous frame while simulation and game logic are executing and input is being gathered for the next frame. In this way, the CPU can be ready to send more draw calls to the GPU as soon as the GPU is ready (provided only that we are not CPU limited).
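One rough way to picture this is to treat the path from input to finished frame as a simple pipeline: input lag is the sum of the stage times, while framerate is set only by the slowest stage. The sketch below uses the figures from this page and lumps input gathering and CPU work into a single stage, which is a simplification for illustration rather than a model of any particular game.

```python
def input_lag_ms(stage_ms: dict) -> float:
    """Latency adds up: a given input has to pass through every stage in turn."""
    return sum(stage_ms.values())


def max_fps(stage_ms: dict) -> float:
    """Throughput is limited only by the slowest stage, since stages overlap."""
    return 1000.0 / max(stage_ms.values())


# Stage times in milliseconds, taken from the ranges quoted in the article.
best_case = {"input_and_cpu": 3, "gpu": 10}
worst_case = {"input_and_cpu": 21, "slower_mouse": 7, "gpu": 30}

for name, stages in (("best", best_case), ("worst", worst_case)):
    print(f"{name}: {input_lag_ms(stages)} ms of lag, "
          f"up to {max_fps(stages):.1f} FPS")
# best: 13 ms of lag, up to 100.0 FPS
# worst: 58 ms of lag, up to 33.3 FPS
```

Both numbers come from the same stage times, which is why 58ms of accumulated lag and a 33 FPS framerate can coexist: the stages are each busy with a different frame at any given moment.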

So what happens after the frame is finished? The easy answer is a buffer swap and scanout. The subtle answer is mounds of potential input lag.

85 Comments

mathew7 - Thursday, September 8, 2011 - link

What I realized (i)racing in the past year is that a consistent lag can still offer playable experience when everything is under control (like no tire grip losing in racing). I think this is why consoles seem to get away with laggy TVs. What I mean is that the brain will train itself to anticipate any movement. For instance, there was a comment about turning in earlier. But if the lag is inconsistent (let's say +/- 1 frame, that would mean 32ms between lowest and highest lag), the brain cannot adapt to it, as this is unnatural. FPS stutter is such an inconsistency, as is loading something during play (and game controls freezing for much less than 1s). But even consistent lag will not offer the best performance (I'm talking about lap times, not FPS), because anticipation is just 1 part, reflex is another, and your reflexes are what input lag affects most. During my racing, I found that I can actually recover from most slides with my LCD TV as opposed to my (much newer) LCD monitor. Some tests with a low-res high-speed camcorder (192x108x240fps), cloned displays and fast steering wheel movement showed 2-4 frames lag between TV and monitor. That is 32-64ms. The TV itself had around 1-2 frame lag compared to the wheel, but this included the complete chain of wheel sampling->USB->game CPU->GPU->TV.

junior262133 - Monday, March 4, 2013 - link

Oh sweet baby Jesus thank you for this article. It saddens me knowing that Counter strike Global offensive has horrendous input lag in comparison to counter strike source. This issue has been plaguing me since it was in beta. I would mostly rank first in css when playing, but going over to global offensive was a living nightmare. What baffles me is almost no one seems to notice, considering most of us come from a background of CS:s or 1.6 on a Crt. On top of that nvidia has completely removed the option to set pre-rendered frames to zero.

i have tried submitting tickets in to patch cs:go but they seem to not be getting any attention. Maybe i'm talking to the wrong people. If you could please do so, visit these 2 pages in which i try to address the issues at hand. It wouldn't hurt if you could explain in one comment that the reason there is input lag in Cs:go is because of the game code and not some random pc glitch of mine. Most people seem to be oblivious to the problem.

http://64bitvps.com/csgo/ticket/mouse-laginput-mis...

http://64bitvps.com/csgo/ticket/let-us-have-the-op...

THANK YOU SO F*****G MUCH!!!!!

jigglywiggly - Saturday, October 5, 2013 - link

does afr in sli add one frame of input lag?

ibaldo - Sunday, October 6, 2013 - link

I am looking for information about possible difference in lag between monitor connections. I mean, comparing VGA vs DVI.

Thanks for this excellent article!

willCho - Tuesday, January 15, 2019 - link

I would like to watch the video, but I can't find it anywhere and can't find it on youtube. Can someone post a link?