AMD’s Radeon HD 5850: The Other Shoe Drops

by Ryan Smith on September 30, 2009 12:00 AM EST - Posted in GPUs

Battleforge: The First DX11 Game

As we mentioned in our 5870 review, Electronic Arts pushed out the DX11 update for Battleforge the day before the 5870 launched. As we had already left for Intel’s Fall IDF we were unable to take a look at it at the time, so now we finally have the chance.

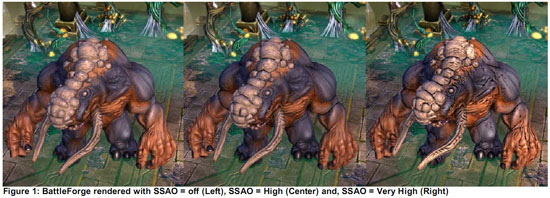

As the first DX11 title, Battleforge makes very limited use of DX11’s features, given that the hardware and the software are still brand-new. The only DX11 feature Battleforge uses is Compute Shader 5.0, which replaces pixel shaders for calculating ambient occlusion. Notably, this does not improve the game’s image quality; pixel shaders already produce this effect in Battleforge and other games. EA is using the compute shader as a faster way to calculate ambient occlusion than a pixel shader allows.

Using DX11 features to improve performance is something we’re going to see in more games than just Battleforge as additional titles pick up DX11, so this isn’t in any way an unusual use of the API. Effectively anything can be done with existing pixel, vertex, and geometry shaders (we’ll skip the discussion of Turing completeness), just not at an appropriate speed. The fixed-function tessellator is faster than the geometry shader for tessellating objects, and in certain situations like ambient occlusion the compute shader is going to be faster than the pixel shader.

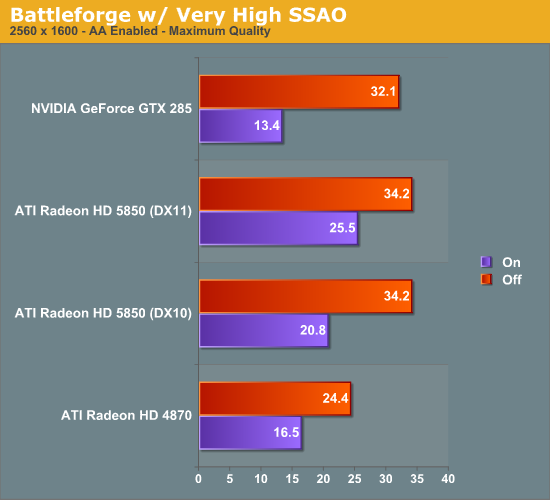

We ran Battleforge both with DX10/10.1 (pixel shader SSAO) and DX11 (compute shader SSAO) and with and without SSAO to look at the performance difference.

Update: We've finally identified the issue with our results. We've re-run the 5850, and now things make much more sense.

As Battleforge only uses the compute shader for SSAO, there is no difference in performance between DX11 and DX10.1 when we leave SSAO off. The real magic happens when we enable SSAO. In this case we crank it up to Very High, which clobbers all the cards when it runs as a pixel shader.

The difference in using a compute shader is that the performance hit of SSAO is significantly reduced. As a DX10.1 pixel shader it lops off 35% of the performance of our 5850. Calculated using a compute shader, that hit becomes 25%. Or to put it another way, switching from a DX10.1 pixel shader to a DX11 compute shader improved performance by 23% when using SSAO. This is what the DX11 compute shader will initially be making possible: allowing developers to go ahead and use effects that would be too slow on earlier hardware.
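As a rough check on how two performance-hit percentages translate into a relative speedup, here is a minimal sketch. It assumes both hits are measured against the same no-SSAO frame rate, which matches the observation that DX10.1 and DX11 perform identically with SSAO off. The function name is ours, not anything from the benchmark. Note that the rounded 35% and 25% figures on their own work out to roughly 15%, so the 23% quoted above presumably comes from the measured frame rates in the chart rather than from these rounded percentages.

```python
def speedup_from_hits(old_hit: float, new_hit: float) -> float:
    """Relative frame-rate gain when a performance hit shrinks.

    Both hits are fractions (0.35 means a 35% drop) of the same
    baseline frame rate. This is an illustrative helper only.
    """
    if not (0 <= old_hit < 1 and 0 <= new_hit < 1):
        raise ValueError("hits must be fractions in [0, 1)")
    # Remaining performance after each hit, relative to the baseline.
    old_remaining = 1 - old_hit
    new_remaining = 1 - new_hit
    return new_remaining / old_remaining - 1


# Rounded figures from the text: 35% hit (pixel shader), 25% hit (compute shader).
gain = speedup_from_hits(0.35, 0.25)
print(f"{gain:.1%}")  # 15.4%
```

Working backwards under the same assumption, a 23% gain over a 35% hit would correspond to a compute shader hit of about 20%, which gives a sense of how sensitive the result is to rounding.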

Our only big question at this point is whether a DX11 compute shader is really necessary here, or if a DX10/10.1 compute shader could do the job. We know there are some significant additional features available in the DX11 compute shader, but it's not at all clear when they're necessary. In this case Battleforge is an AMD-sponsored showcase title, so take an appropriate quantity of salt when it comes to this matter; other titles may not produce similar results.

At any rate, even with the lighter performance penalty from using the compute shader, 25% for SSAO is nothing to sneeze at. AMD’s press shot is one of the best case scenarios for the use of SSAO in Battleforge, and in the game it’s very hard to notice. For the 25% drop in performance, it’s hard to justify the slightly improved visuals.

95 Comments


MadMan007 - Wednesday, September 30, 2009 - link

Should have done HAWX in DX 10.1 mode then the HD5850 > GTX 285 sweep would have been complete. Or to flip it around, enable PhysX (lol) on some games.

MadMan007 - Wednesday, September 30, 2009 - link

I have to point this out because it's something I've now seen on two websites and it irks me a little bit just like 'solid state capacitors' does. In the last sentence on page one the plural of die in this case is dies not dice. Someone didn't edit this carefully!

Ryan Smith - Wednesday, September 30, 2009 - link

If you search our archives, Anand has discussed this in depth. It's dice.

papapapapapapapababy - Wednesday, September 30, 2009 - link

btw, this card is powerless against "the way is meant to be played" nvidia keeps bribing developers left and right, ATI does nothing

(except boring sideshow penis wars), meanwhile the poor ATI users cant seem to play NFS SHIFT 640 x 480, all set to low, ( my -rebranded- 9800 does it great btw) + there is no in-game selective AA available to any ATI Radeon user in Batman, (another TWIMTBP game) + it looks like empty crap with all the shit nvidia - removed ( yes, really no smoke? no papers? no flags? not even static flags? what about GRAW? it used to work fine on reg cpus...)

papapapapapapapababy - Wednesday, September 30, 2009 - link

my "prototype" RV740 @ AC-S1 is better...

Core Clock: 875

Idle temp: 29C

Noise: 0db

TDP: 80W(+)

+ it doesn't look like the 60's Batmobile

AnnonymousCoward - Thursday, October 1, 2009 - link

What, like this one? http://student.dcu.ie/~lawlesc4/Batmobile%20%28TV%...

ipay - Wednesday, September 30, 2009 - link

Except it doesn't hav DX11, so it's not better. Go troll somewhere else.

strikeback03 - Wednesday, September 30, 2009 - link

I suppose we probably have to wait for the consumer driver release to know for sure, but how is the stability of these? The only two AMD cards I have direct experience with have both had driver issues, so that is the one factor that would keep me from considering one of the lower-powered versions of this architecture once they are released.

Jamahl - Wednesday, September 30, 2009 - link

It is you who had the issue not the drivers. That is why millions of others have ati's without driver issues.

strikeback03 - Wednesday, September 30, 2009 - link

Yeah, I definitely think closing my laptop lid and expecting it to have the same resolution set when I reopen is my fault *rolls eyes*.