NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand-new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the effort required to build any multi-billion-transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different from a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one-op-per-clock-per-core philosophy comes from a basic desire to execute single-threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

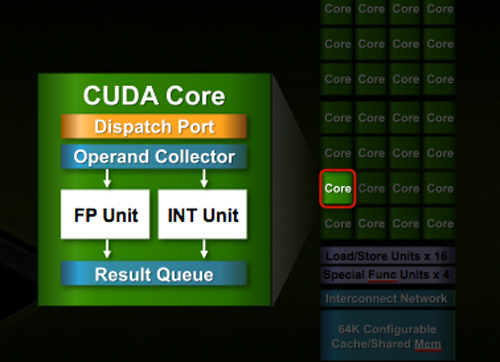

Despite the similarities, large parts of the architecture have evolved. The redesign went as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I'm going to call them cores.

All of the processing done at the core level is now to IEEE spec. That's IEEE 754-2008 for floating-point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused multiply-add (FMA) is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be compliant with industry standards and give you the results you'd expect.
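To make the two "cheesy tricks" concrete, here is a minimal Python sketch, not NVIDIA's actual instruction sequences. `umul24` is a hypothetical model of a 24-bit multiply (only the low 24 bits of each operand participate, the low 32 bits of the product are kept), and `mul32_emulated` shows one way a full 32-bit multiply could be assembled from such 24-bit multiplies by splitting operands into 16-bit halves. The second half contrasts a separate multiply-then-add (two roundings) with a fused multiply-add (one rounding). Python floats are 64-bit, so this demonstrates the FMA principle in FP64 rather than FP32; `fma_emulated` is a hypothetical helper that gets single rounding by doing the arithmetic exactly with `fractions.Fraction`.

```python
from fractions import Fraction

MASK32 = 0xFFFFFFFF
MASK24 = 0xFFFFFF


def umul24(a: int, b: int) -> int:
    """Hypothetical model of a 24-bit unsigned multiply: only the low 24 bits
    of each operand are used, and the low 32 bits of the product are returned."""
    return ((a & MASK24) * (b & MASK24)) & MASK32


def mul32_emulated(a: int, b: int) -> int:
    """Low 32 bits of a*b built only from 24-bit multiplies.

    Each operand is split into 16-bit halves, so every partial product has
    inputs narrower than 24 bits and a result that fits in 32 bits (exact).
    """
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    low = umul24(a_lo, b_lo)
    # a_hi*b_hi would be shifted left by 32 bits, so it never reaches the low word.
    cross = (umul24(a_hi, b_lo) + umul24(a_lo, b_hi)) & MASK32
    return (low + (cross << 16)) & MASK32


def fma_emulated(a: float, b: float, c: float) -> float:
    """a*b + c computed exactly, then rounded once (the FMA contract)."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


# Operands chosen so the exact product 1 - 2**-54 sits exactly halfway between
# two doubles and rounds (ties-to-even) to 1.0 before the add.
a, b, c = 1 + 2**-27, 1 - 2**-27, -1.0
separate = a * b + c           # two roundings: the tiny difference is lost
fused = fma_emulated(a, b, c)  # one rounding: the exact result survives
```

A single `umul24` call gives the wrong low word whenever an operand uses its top 8 bits, which is why 32-bit integer multiplies on earlier parts needed a multi-instruction sequence of some kind.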

Double-precision floating-point (FP64) performance has improved tremendously. The peak FP64 execution rate is now 1/2 of the FP32 rate; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn't disclosing clock speeds yet, so we don't know exactly what that rate is.
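Since the clock is unknown, the most we can do is show how the ratio translates into peak throughput. The sketch below is a back-of-the-envelope calculation under two stated assumptions: each core retires one FMA per clock, counted as two floating-point operations, and the clock is a placeholder (`HYPOTHETICAL_CLOCK_GHZ`), not an NVIDIA figure. `peak_gflops` is a hypothetical helper.

```python
def peak_gflops(cores: int, clock_ghz: float, fp64_ratio: float) -> tuple[float, float]:
    """Return (FP32, FP64) peak GFLOPS.

    Assumes one FMA per core per clock, counted as 2 floating-point ops,
    and FP64 throughput equal to the FP32 rate times fp64_ratio.
    """
    fp32 = cores * 2 * clock_ghz
    return fp32, fp32 * fp64_ratio


HYPOTHETICAL_CLOCK_GHZ = 1.5  # placeholder only; NVIDIA has not disclosed clocks

# Same 512 cores, new 1/2 ratio versus the old 1/8 ratio.
fermi = peak_gflops(512, HYPOTHETICAL_CLOCK_GHZ, 1 / 2)
old_ratio = peak_gflops(512, HYPOTHETICAL_CLOCK_GHZ, 1 / 8)
```

Whatever the real clock turns out to be, the FP64 peak scales linearly with it, and moving from 1/8 to 1/2 quadruples FP64 throughput for a given core count and clock.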

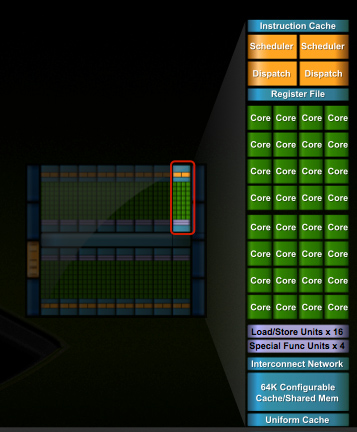

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

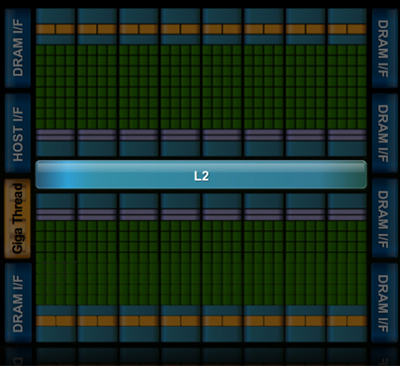

The high-end single-GPU Fermi configuration will have 16 SMs. That's fewer SMs than GT200, but more cores: 512, to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
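The core counts in the table and the SFU trade-off both fall out of the per-SM configuration. In this sketch the SM counts for GT200 (30) and G80 (16) are figures from NVIDIA's earlier disclosures rather than from this article, and the helper names are hypothetical.

```python
# chip: (SM count, cores per SM); GT200 and G80 SM counts are from earlier disclosures
SM_CONFIG = {
    "Fermi": (16, 32),
    "GT200": (30, 8),
    "G80": (16, 8),
}

# SFU pipelines per SM, as described above
SFU_PIPES_PER_SM = {"Fermi": 4, "GT200": 2}


def total_cores(chip: str) -> int:
    """Cores on the full chip = SMs x cores per SM."""
    sms, cores_per_sm = SM_CONFIG[chip]
    return sms * cores_per_sm


def cores_per_sfu_pipe(chip: str) -> float:
    """How many cores share each SFU pipeline within one SM."""
    return SM_CONFIG[chip][1] / SFU_PIPES_PER_SM[chip]


fermi_vs_gt200 = total_cores("Fermi") / total_cores("GT200")
```

Per SM, the cores-to-SFU-pipeline ratio doubles from 4:1 on GT200 to 8:1 on Fermi, which is another way of saying general math grew 4x while SFU resources only grew 2x.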

The infamous missing MUL has been pulled out of the SFU, so we shouldn't have to quote separate single- and dual-issue peak arithmetic rates for NVIDIA GPUs any longer.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn't being disclosed today. With the launch's Tesla focus, we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of its cores. This wasn't a cache, but rather software-managed memory: the application had to knowingly move data in and out of it. The benefit here is predictability; you always know whether something is in shared memory because you put it there. The downside is that it doesn't work as well when the application's memory accesses aren't predictable.
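The difference between software-managed memory and a cache can be modeled in a few lines. This is a toy sketch, not a model of NVIDIA hardware: it tracks individual words instead of cache lines, and the LRU replacement policy in `LRUCache` is an assumption chosen for simplicity (Fermi's actual L1 policy isn't disclosed). Both class names are hypothetical.

```python
from collections import OrderedDict


class Scratchpad:
    """Software-managed memory (like shared memory): nothing arrives unless the
    program copies it in, and nothing leaves unless the program evicts it."""

    def __init__(self, capacity_words: int):
        self.capacity = capacity_words
        self.data: dict[int, int] = {}

    def load(self, addr: int, backing: list[int]) -> None:
        if addr not in self.data and len(self.data) >= self.capacity:
            raise MemoryError("scratchpad full: the program must evict first")
        self.data[addr] = backing[addr]

    def evict(self, addr: int) -> None:
        del self.data[addr]

    def read(self, addr: int) -> int:
        # No automatic fill: reading an address the program never loaded is a bug.
        return self.data[addr]


class LRUCache:
    """Hardware-managed cache: misses are filled automatically and the
    least recently used word is evicted when the cache is full."""

    def __init__(self, capacity_words: int, backing: list[int]):
        self.capacity = capacity_words
        self.backing = backing
        self.lines: OrderedDict[int, int] = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, addr: int) -> int:
        if addr in self.lines:
            self.hits += 1
            self.lines.move_to_end(addr)  # mark as most recently used
        else:
            self.misses += 1
            if len(self.lines) >= self.capacity:
                self.lines.popitem(last=False)  # drop least recently used
            self.lines[addr] = self.backing[addr]
        return self.lines[addr]


backing = list(range(100))

sp = Scratchpad(2)
sp.load(0, backing)  # the program must know in advance that it needs address 0

cache = LRUCache(4, backing)
for addr in [0, 1, 0, 1, 50, 0]:  # irregular pattern, no explicit loads needed
    cache.read(addr)
```

With the scratchpad, the program always knows exactly what is resident; with the cache, an irregular access pattern still gets reuse without the program planning for it, but whether a given read hits depends on the access history.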

Branch-heavy applications and many of the general-purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
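The partitioning rule can be expressed as a small selection function. `choose_partition` is a hypothetical helper written for illustration: given how much shared memory a kernel needs and whether it would rather have more L1, it picks one of the two 64KB splits described above.

```python
TOTAL_KB = 64

# preference: (shared memory KB, L1 cache KB)
CONFIGS = {
    "prefer_shared": (48, 16),
    "prefer_l1": (16, 48),
}


def choose_partition(shared_kb_needed: int, prefer_l1: bool) -> tuple[int, int]:
    """Return (shared_kb, l1_kb) for a kernel, trying the preferred split first
    and falling back to the other one if the shared-memory need doesn't fit."""
    order = ["prefer_l1", "prefer_shared"] if prefer_l1 else ["prefer_shared", "prefer_l1"]
    for name in order:
        shared_kb, l1_kb = CONFIGS[name]
        if shared_kb >= shared_kb_needed:
            return shared_kb, l1_kb
    raise ValueError(f"{shared_kb_needed}KB of shared memory exceeds the 48KB maximum")


# A GT200-era kernel using 16KB of shared memory still fits, even when L1 is preferred.
gt200_kernel = choose_partition(16, prefer_l1=True)
# A kernel needing 32KB of shared memory must take the 48KB/16KB split.
big_shared_kernel = choose_partition(32, prefer_l1=True)
```

Because the smaller partition is 16KB, every GT200 kernel fits in either split; the 48KB option is where the 3x shared-memory figure comes from.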

The entire chip shares a 768KB L2 cache. One result is a reduced penalty for atomic memory operations: Fermi is 5 to 20x faster here than GT200.
