NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

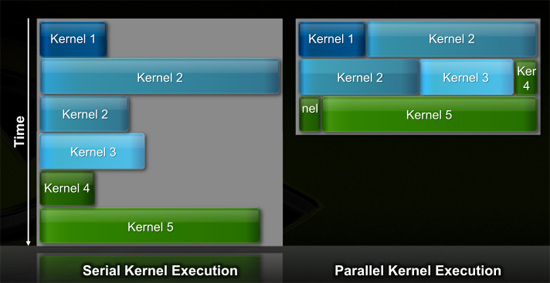

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
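Here is a minimal CPU-only sketch in Python of what "one small program run across a very large dataset" means. SAXPY (`out[i] = a * x[i] + y[i]`) is a stand-in workload chosen for illustration. The `saxpy_kernel` and `launch` helpers are hypothetical names, not part of CUDA or any NVIDIA API. On real hardware every thread index would run concurrently across the SMs rather than in a Python loop.

```python
# Hypothetical illustration of the GPU "kernel" idea: one small function
# that every thread runs, each on its own element of a large dataset.
# saxpy_kernel and launch are made-up names, not a real GPU API.

def saxpy_kernel(thread_id, a, x, y, out):
    """Body executed by every thread: out[i] = a * x[i] + y[i]."""
    i = thread_id
    if i < len(x):  # guard: the last block may contain spare threads
        out[i] = a * x[i] + y[i]

def launch(kernel, num_blocks, threads_per_block, *args):
    """Emulate a grid launch by running the kernel once per thread index.

    On a GPU these calls would execute in parallel across the SMs;
    here they simply run one after another.
    """
    for block in range(num_blocks):
        for t in range(threads_per_block):
            kernel(block * threads_per_block + t, *args)

n = 1000
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n
threads_per_block = 256
num_blocks = (n + threads_per_block - 1) // threads_per_block  # ceil(1000 / 256) = 4
launch(saxpy_kernel, num_blocks, threads_per_block, 2.0, x, y, out)
print(out[:3])  # [1.0, 3.0, 5.0]
```

Every thread runs the same code. Only the index it is handed differs, which is why a kernel over millions of pixels has no trouble filling a wide chip.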

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could work on only one kernel at a time.

When dealing with graphics this isn't usually a problem: there are millions of pixels to render, so the problem is wider than the machine. But as you start to do more general-purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, the leftover SMs simply sat idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs goes up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
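The idle-SM problem and Fermi's fix can be sketched with a toy occupancy model in Python. For illustration only, it assumes that every thread block occupies one whole SM for one equal-length time step (a "wave") and that the kernels are independent. Real scheduling, with multiple blocks per SM, varying block durations and dependencies, is far messier. The 16-SM machine and the kernel sizes in the usage lines are hypothetical.

```python
import math

def serial_waves(kernels, num_sms):
    """GT200/G80-style dispatch: one kernel at a time.

    Each kernel needs ceil(blocks / num_sms) waves, and any SMs left over
    in a kernel's last wave sit idle until that kernel finishes.
    """
    return sum(math.ceil(blocks / num_sms) for blocks in kernels)

def concurrent_waves(kernels, num_sms):
    """Idealized concurrent dispatch: blocks from independent kernels
    are packed onto whichever SMs are free."""
    return math.ceil(sum(kernels) / num_sms)

def utilization(kernels, num_sms, waves):
    """Fraction of SM time slots doing useful work."""
    return sum(kernels) / (num_sms * waves)

NUM_SMS = 16              # hypothetical machine size
kernels = [4, 6, 10, 12]  # thread blocks per kernel; each narrower than the chip

s = serial_waves(kernels, NUM_SMS)      # 1 + 1 + 1 + 1 = 4 waves
c = concurrent_waves(kernels, NUM_SMS)  # ceil(32 / 16) = 2 waves
print(s, utilization(kernels, NUM_SMS, s))  # 4 0.5
print(c, utilization(kernels, NUM_SMS, c))  # 2 1.0
```

In this model, the wider the chip is relative to each kernel, the further the serial wave count drifts from the concurrent one. That is the same pressure the article describes as Fermi grows past GT200.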

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than on GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU-accelerated physics (or PhysX, great ;)…).
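Some frame-budget arithmetic in Python shows why a 10x faster switch matters. NVIDIA's figure is only relative, so every number here is hypothetical and chosen for illustration, not measured. That includes the 1.0 ms baseline switch cost, the 60 fps target, and the four switches per frame (graphics to CUDA and back, twice).

```python
def frame_budget_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps

def switch_overhead_fraction(fps, switches_per_frame, switch_cost_ms):
    """Share of a frame's budget spent only on graphics<->CUDA mode switches."""
    return switches_per_frame * switch_cost_ms / frame_budget_ms(fps)

OLD_SWITCH_MS = 1.0                 # hypothetical GT200-class switch cost
NEW_SWITCH_MS = OLD_SWITCH_MS / 10  # applying NVIDIA's "10x faster" claim

old = switch_overhead_fraction(60, 4, OLD_SWITCH_MS)
new = switch_overhead_fraction(60, 4, NEW_SWITCH_MS)
print(f"{old:.1%} vs {new:.1%} of a 60 fps frame")  # 24.0% vs 2.4% of a 60 fps frame
```

Whatever the real baseline is, the overhead fraction scales linearly with switch cost. A 10x cut is what turns several switches per frame from a large slice of the budget into a small one.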

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
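A simple Python model quantifies the gain from bidirectional transfers. Done serially, an upload and a download take the sum of their times. Done in parallel, they take roughly the longer of the two. The model assumes the link delivers full bandwidth in each direction at once (PCI Express is full-duplex) and ignores setup latency. The 8 GB/s per-direction figure and the buffer sizes are hypothetical.

```python
def transfer_ms(num_bytes, gb_per_s):
    """Time to move num_bytes at gb_per_s (1 GB/s = 1e6 bytes per ms)."""
    return num_bytes / (gb_per_s * 1e6)

def serial_ms(upload_ms, download_ms):
    # Pre-Fermi: CPU->GPU and GPU->CPU transfers take turns on the link.
    return upload_ms + download_ms

def overlapped_ms(upload_ms, download_ms):
    # Fermi: both directions in flight at once, so the longer one dominates.
    return max(upload_ms, download_ms)

BANDWIDTH = 8.0                      # GB/s per direction (hypothetical)
up = transfer_ms(64e6, BANDWIDTH)    # 64 MB of input to the GPU
down = transfer_ms(32e6, BANDWIDTH)  # 32 MB of results back to the CPU
print(serial_ms(up, down), overlapped_ms(up, down))  # 12.0 8.0
```

The saving is largest when the two directions carry similar amounts of data. If one direction dominates, overlapping hides only the smaller transfer.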

415 Comments


rennya - Thursday, October 1, 2009

I have already countered your suggestion that the ATI 5870 is just a paper launch, somewhere in this same discussion. Plus, if nVidia really has working silicon as you showed in the fudzilla link, then where can I buy it? Even at IDF, Intel showed working silicon for Larrabee (although an older version), but not even the die-hard Intel fanboys will claim that Larrabee will be available soon.

SiliconDoc - Thursday, October 1, 2009

Gee, we have several phrases: hard launch and paper launch. Would you prefer something in between, like soft launch? The last time nvidia had a paper launch, that's what everyone called it and no one had a problem, even if cards trickled out.

So now, we need changed definitions and new wordings, for the raging little lying red roosters.

I won't be agreeing with you, nor have you done anything but lie, and attack, and act like a 3 year old.

It's a paper launch: cards were not in the channels on the day they claimed they were available. 99 out of 100 people were left bone dry, hanging.

Early today the 5850 was listed, but not available.

Now, since you people and this very site taught me your standards, the definition, and how to use it, we're sticking to it when it's a godforsaken red rooster card, whether you like it or not.

rennya - Friday, October 2, 2009

You defined hard launch as having cards on retail shelves. That's what happened here in the first couple of days where I live. So, according to your standard, the 5870 is a hard launch, not a paper launch or soft launch. I can easily get one if I want to (but my case is just a crappy TECOM mid-tower; the card will not fit).

As far as I am concerned, the 5870 had a successful hard launch. You tried to tell people otherwise; that's why I called you a liar.

Want to know where I live? Open up the Lynnfield review at http://www.anandtech.com/cpuchipsets/showdoc.aspx?... and look at the first picture on the first page. It shows the country I am posting this from. The same info can also be seen in the AMD Athlon X4 620 review at http://www.anandtech.com/cpuchipsets/showdoc.aspx?... . The markup over MSRP can be ridiculous sometimes, but availability is not a problem.

Zingam - Thursday, October 1, 2009

This CPU is for what? Oh, Tesla, the things that cost 2000 :) And consumers won't really get anything more than what ATI offers currently! Seems like it is time for ATI to do a paper launch.

Just to inform the fanboys: ATI has already finalized the specs for generations 900 and 1000. The current one is just 800.

So on paper, dudes, ATI has even more than what they are displaying now!

BTW who said: DX11 won't matter?? :)

cactusdog - Thursday, October 1, 2009

It's unbelievable that Nvidia won't have a DX11 chip in 2009. Massive fail.

strikeback03 - Thursday, October 1, 2009

Not if there are no worthwhile DX11 games in 2009.

yacoub - Wednesday, September 30, 2009

"Perhaps Fermi will be different and it'll scale down to $199 and $299 price points with little effort? It seems doubtful, but we'll find out next year."

Yeah okay, side with their marketing folks. God forbid they actually release reasonably priced versions of Fermi that people will actually care to buy.

SiliconDoc - Thursday, October 1, 2009

Derek did an article not long ago on the costs of a modern video card, and broke down the cost piece by piece. It was well done and appeared accurate, and the "margin" for the big two was quite small. Tiny, really.

So people have to realize fancy new tech costs money, and they aren't getting raked over the coals. It's just plain expensive to take a shot at the moon, or to have the latest, greatest GPUs.

Back in '96, when I sold a brand new computer, I doubled my investment. Those days are long gone, unless you sell to schools or the government; then the upside can be even higher.

yacoub - Thursday, October 1, 2009

And yet all of that is irrelevant if the product cannot be delivered at a price point where most of the potential customers will buy it. You're forgetting that R&D costs are not just "whatever they will be" but are based off what the market will support via purchasing the end result. It all starts with the consumer. You can argue all you want that Joe Gamer should buy a $400 GPU, but if he's only capable of buying a $300 GPU and only willing to buy a $250 GPU, then you're not going to get a sale until you cross the $300 threshold with amazing marketing and performance, or the $250 threshold with solid marketing and performance. Companies go bust because they overspend on R&D and never recoup the cost: they can't price the product properly for it to sell the quantities needed to pay back the initial investment, let alone turn a significant profit.

Arguing that gamers should just magically spend more is silly and shows a lack of understanding of economics.

SiliconDoc - Thursday, October 1, 2009

Well, I didn't argue that gamers should magically spend more.

--

I ARGUED THAT THE VIDEOCARDS ARE NOT SCALPING THE BUYERS.

---

Derek's article, if you had checked, outlined a card barely over $100.00.

But instead of thinking, or even comprehending, you made a giant leap of false assumption. So let me comment on your statements, and we'll see where we agree.

--

1. Ok, the irreverance (yes, that word) here is that TESLA commands a high price, and certainly has been stated to be the profit margin center (GT200) for NVIDIA - so... whatever...

- Your basic points are purely obvious common sense; one doesn't even need to state them - BUT - since Nvidia has a profit driver where ATI does not, if you're a joe rouge, admitting that shouldn't be the crushing blow it apparently is.

2. Since Nvidia has been making the MONEY, the PROFIT, and reinvesting, I think they have a handle on what to spend, not you, and their sales are much higher up to recoup costs, not sales, to you, your type.

----

Stating the very simpleton points of even having a lemonade stand work out doesn't impress me, nor should you have wasted your time doing it.

Now, let's recap my point: " So people have to realize fancy new tech costs money, and they aren't getting raked over the coals."

--

That's a direct quote.

I also will reobject your own stupidity: "You're forgetting that R&D costs are not just "whatever they will be" but are based off what the market will support via purchasing the end result."

PLEASE SEE TESLA PRICES.

--

Another jerkoff that is SOOOOOOOO stupid, finds it possible that someone would even argue that gamers should just spend more money on a card, and - after pointing out that's ridiculous, feels he has made a good point, and claims, an understanding of economics.

-

If you don't see the hilarity in that, I'm not sure you're alive.

-

Where do we get these brilliant analysts who state the obvious a high schooler has had under their belt for quite some time ?

--

I will say this much - YOU specifically (it seems) have encountered, in the help forums, the arrogant know-it-all blowhards who, upon say encountering a person with a P4 HT at 3.0GHz and a PCI-e 1.0 x16 slot, scream in horror as the fella asks about placing a 4890 in it. The first thing out of their pieholes is UPGRADE THE CPU, the board, the RAM, then get a card....

If that is the kind of thing you really meant to discuss, I'd have to say I'm likely much further down that bash road than you.

You might be the schmuck that shrieks "CPU limitation!" and recommends the full monty replacements.

--

Let's hope you're not after that simpleton quack you had at me.