NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

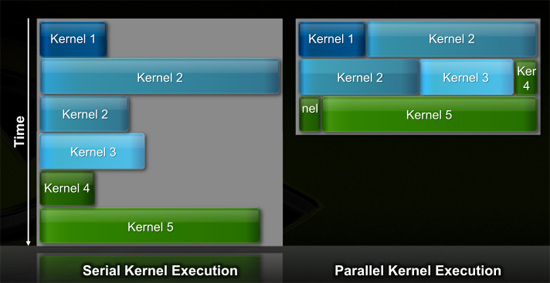

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs goes up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
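To put rough numbers on the idle-SM problem, here is a minimal toy model in Python. It is only a sketch under loud assumptions: every thread block takes one time step, each SM runs one block at a time, and the kernels are independent. The SM count of 16 and the four 4-block kernels are illustrative values, not measurements, and the function names are made up for this example.

```python
import math

def serial_time(kernel_blocks, num_sms):
    """Time steps when only one kernel may occupy the chip at a time."""
    # Each kernel runs alone; a narrow kernel leaves the other SMs idle.
    return sum(math.ceil(blocks / num_sms) for blocks in kernel_blocks)

def concurrent_time(kernel_blocks, num_sms):
    """Time steps when blocks from any kernel may fill any idle SM."""
    # All blocks share one pool, so idle SMs pick up work from other kernels.
    return math.ceil(sum(kernel_blocks) / num_sms)

def utilization(kernel_blocks, num_sms, steps):
    """Fraction of SM time slots that actually did work."""
    return sum(kernel_blocks) / (num_sms * steps)

NUM_SMS = 16            # assumed SM count, for illustration only
kernels = [4, 4, 4, 4]  # four hypothetical narrow kernels, 4 blocks each

serial_steps = serial_time(kernels, NUM_SMS)
concurrent_steps = concurrent_time(kernels, NUM_SMS)

print("one kernel at a time:", serial_steps, "steps,",
      utilization(kernels, NUM_SMS, serial_steps), "utilization")
print("parallel kernels:    ", concurrent_steps, "steps,",
      utilization(kernels, NUM_SMS, concurrent_steps), "utilization")
```

In this toy case the one-kernel-at-a-time machine spends three quarters of its SM time idle, while the machine that can mix kernels stays full. Real hardware scheduling is far more involved, but the direction of the effect is the same.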

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
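A back-of-the-envelope way to see why this matters is to model a stream of batches, each uploaded to the GPU and then read back. The Python sketch below is a toy model only: it ignores kernel execution time entirely, and the function names and the 2 ms / 3 ms transfer times are hypothetical, chosen for illustration.

```python
def serialized_time(num_batches, upload_ms, download_ms):
    """Total time when CPU->GPU and GPU->CPU transfers cannot overlap."""
    return num_batches * (upload_ms + download_ms)

def overlapped_time(num_batches, upload_ms, download_ms):
    """Total time when one upload and one download may be in flight at once."""
    upload_done = 0.0    # when the most recent upload finished
    download_done = 0.0  # when the most recent download finished
    for _ in range(num_batches):
        # Uploads run back to back in the CPU->GPU direction.
        upload_done += upload_ms
        # A batch's download waits for its own upload and the prior download.
        download_done = max(upload_done, download_done) + download_ms
    return download_done

# Hypothetical workload: 4 batches, 2 ms up and 3 ms down per batch.
print("serialized:", serialized_time(4, 2, 3), "ms")
print("overlapped:", overlapped_time(4, 2, 3), "ms")
```

With these made-up numbers the overlapped case finishes in 14 ms instead of 20 ms, because the slower direction becomes the only bottleneck once both directions can be busy at the same time.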

415 Comments


Zingam - Thursday, October 1, 2009

Perhaps next gen consoles would be C++ based and not API based (what a great terminology). So in that sense DirectX won't matter like it won't matter on Larrabee because it will be emulated in software. Larrabee would not have any silicon dedicated to OpenGL or DirectX I think.

Once GPUs get fast enough I guess they won't be called Graphics processors anymore and will support APIs as software implementations.

marc1000 - Thursday, October 1, 2009

well, perhaps... I was searching the web yesterday for info on the new consoles, it was kinda sad. if we do not get a new minimum standard (a powerful console), then the PC games will not be that hard to run on PCs... then my old Radeon 3850 is still capable of running almost ALL games with "good enough" performance (read: average 30-50fps on most of the console ports to PC).

and so: no reason to upgrade! :-(

Dobs - Wednesday, September 30, 2009

Personally I think Eyefinity will be remembered as the master stroke as well as first to implement DirectX 11. Nvidia may get 10 fps more in DirectX 11 in Q2 2010 but will still struggle until it has its own version of Eyefinity.

Current uber-cool for the cashed-up is Eyefinity and once you have 3 or 6 monitors you will only buy hardware that will support it. These 'cashed-up' PC gamers are usually Nvidia's favorite customers.

Nvidia needs flexible wrap-around OLED mega resolution monitors to come out yesterday, but I'm pretty sure that didn't happen... 5850 which supports 3 monitors came out yesterday. :P

SiliconDoc - Thursday, October 1, 2009

Nvidia has supported FOUR monitors on say for instance, the 570i sli, for like YEARS dude.

Just put in 2 nv cards, plug em up - and off you go, it's right in the motherboard manuals...

Heck you can plug in two ati cards for that matter.

---

Anyway on the triple monitor with this 5870/50, the drivers are a mess, some having found the patch won't be for a month, then the extra $100 cable is needed, too, as some have mentioned, that ati has not included.

They're pissed.

Dobs - Thursday, October 1, 2009

I'll be avoiding the extra $100 cable by getting DisplayPort monitors from the start. Also want to get an IPS monitor (I think) so that it will support the portrait mode.

If I already had 3 non-DisplayPort monitors, I wouldn't mind shelling out for the DisplayPort adapter if that was my only expense. But if the adapter was flaky I'd be upset as well. I know multi-monitors have been around for years, but they've never been this easy to set up... Even I could do it :P And the drivers will get better in time, and no doubt future games will look to include Eyefinity as well.

SiliconDoc - Thursday, October 1, 2009

Well I do hope you have good luck and that you and your son enjoy it (no doubt will if you manage to get it), and it would be nice if you can eventually link a pic (likely in some future article text area) just because.

I think eyefinity has an inherent advantage, cheaper motherboard possible, 3 on one card, and with 3 from the same card, you can have the concave wrap view going with easy setup.

I agree however with the comment that it won't be a widely used feature, and realize most don't even use both monitor hookups on their videocards that are already available as standard, since long ago, say the 9600se and before.

(I use two though, and I have to say it is a huge difference, and much, much better than one)

yacoub - Wednesday, September 30, 2009

Wake up: 99% of people don't give a crap about Eyefinity. Not only do the VAST, VAST majority of customers have just one display, but those who do have multiple ones (like myself) often have completely different displays, not multiple of the same model and size. And then, even when you find that 0.1% of the customer base that has two or more identical monitors side-by-side, you have to find the ones who game on them. Then of those people, find the ones who actually WANT to have their game screen split across two monitors with a thick line of two display borders right in the middle of their image.

Eyefinity is relevant to such an infinitesimally small number of people it is laughable every time someone mentions it like it's some sort of "killer app" feature.

Jamahl - Thursday, October 1, 2009

that's why eyefinity has millions of youtube views already right.

yacoub - Thursday, October 1, 2009

because purchases are measured in YouTube views. wow, just... wow.

ClownPuncher - Thursday, October 1, 2009

It clearly means people are interested enough to look. Wow, just...wow.

You don't like it, other people do. Get over it.