NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs.

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the efforts required to build any multi-billion transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different than a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one op per clock per core philosophy comes from a basic desire to execute single threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

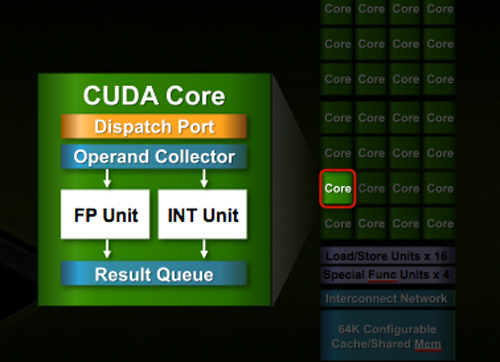

Despite the similarities, large parts of the architecture have evolved. The redesign happened as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect.
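To make those two points concrete, here is a minimal Python sketch. The first function shows one way a 32-bit product can be assembled from multiplies whose operands are only 16 bits wide (and so would fit a 24-bit multiplier); it illustrates the general idea of emulation and is not the instruction sequence G80 or GT200 actually used. The second pair contrasts a fused multiply-add, which rounds once, with a separate multiply and add, which round twice. All function names are made up for this example, and it uses Python's 64-bit floats rather than the GPU's 32-bit ones.

```python
from fractions import Fraction

MASK32 = 0xFFFFFFFF


def mul32_from_narrow_multiplies(a: int, b: int) -> int:
    """Low 32 bits of a*b, using only multiplies with 16-bit operands."""
    a &= MASK32
    b &= MASK32
    a_lo, a_hi = a & 0xFFFF, a >> 16
    b_lo, b_hi = b & 0xFFFF, b >> 16
    # a_hi * b_hi is shifted left by 32 bits, so it never reaches the low word.
    cross = (a_hi * b_lo + a_lo * b_hi) & 0xFFFF
    return (a_lo * b_lo + (cross << 16)) & MASK32


def separate_multiply_add(a: float, b: float, c: float) -> float:
    """a*b is rounded to a float first, then the sum is rounded again."""
    return a * b + c


def fused_multiply_add(a: float, b: float, c: float) -> float:
    """a*b + c is computed exactly, then rounded a single time."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


# Inputs chosen so the intermediate rounding throws away the whole answer.
a, b, c = 1.0 + 2.0**-30, 1.0 - 2.0**-30, -1.0
unfused = separate_multiply_add(a, b, c)  # product rounds to 1.0 before the add
fused = fused_multiply_add(a, b, c)       # keeps the tiny -2**-60 term
```

With these inputs the separate multiply and add lose the result entirely, while the fused version preserves it. That is the sort of difference the "results that you'd expect" line is about.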

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of 32-bit FP; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.
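Without clocks we can only express the peak rate as a formula. The sketch below assumes the usual convention of counting one fused multiply-add per core per clock as two floating point operations, and it plugs in a purely hypothetical 1.5GHz clock to show the arithmetic. That clock is a placeholder, not a leak or an estimate, and `peak_gflops` is a name invented for this example.

```python
def peak_gflops(cores: int, clock_ghz: float, fp64_ratio: float = 1.0) -> float:
    """Peak GFLOPS, counting one FMA per core per clock as two operations."""
    return cores * 2 * clock_ghz * fp64_ratio


ASSUMED_CLOCK_GHZ = 1.5  # placeholder only; NVIDIA has not disclosed clocks

# 512 cores is the full Fermi configuration described in this article.
fermi_fp32 = peak_gflops(512, ASSUMED_CLOCK_GHZ)
fermi_fp64 = peak_gflops(512, ASSUMED_CLOCK_GHZ, fp64_ratio=1 / 2)
# The same chip if FP64 still ran at the old 1/8 rate, for comparison.
fermi_fp64_old_ratio = peak_gflops(512, ASSUMED_CLOCK_GHZ, fp64_ratio=1 / 8)
```

Whatever the final clock turns out to be, moving from a 1/8 ratio to a 1/2 ratio is a 4x gain in peak FP64 rate before core count and clock speed are even considered.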

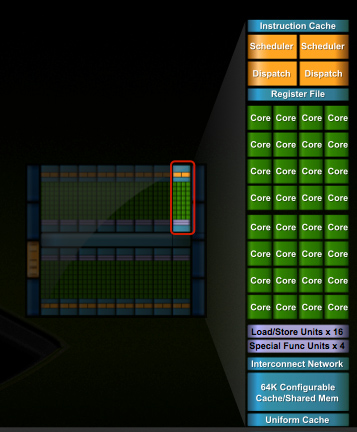

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

The high end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores. 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |
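The core counts fall straight out of the SM organization. As a quick check, the sketch below divides each chip's total core count by its cores per SM. The SM counts for GT200 and G80 are derived this way rather than quoted from NVIDIA, and the dictionary names are only for illustration.

```python
CORES_PER_SM = {"G80": 8, "GT200": 8, "Fermi": 32}
TOTAL_CORES = {"G80": 128, "GT200": 240, "Fermi": 512}

# Number of SMs implied by the two figures above.
sm_count = {chip: TOTAL_CORES[chip] // CORES_PER_SM[chip] for chip in TOTAL_CORES}

# How many times more cores Fermi has than GT200.
fermi_vs_gt200 = TOTAL_CORES["Fermi"] / TOTAL_CORES["GT200"]
```

That works out to 16 SMs for G80, 30 for GT200 and 16 for Fermi, with Fermi carrying a little over 2.1x the cores of GT200.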

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
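Put another way, the SFU-to-core ratio inside each SM was cut in half. A tiny sketch of that arithmetic, using only the per-SM figures above (the names are illustrative):

```python
GT200_SM = {"cores": 8, "sfu_pipelines": 2}
FERMI_SM = {"cores": 32, "sfu_pipelines": 4}


def sfu_per_core(sm: dict) -> float:
    """SFU pipelines available per core within one SM."""
    return sm["sfu_pipelines"] / sm["cores"]


gt200_ratio = sfu_per_core(GT200_SM)  # one SFU pipeline per 4 cores
fermi_ratio = sfu_per_core(FERMI_SM)  # one SFU pipeline per 8 cores
```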

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote peak single and dual-issue arithmetic rates any longer for NVIDIA GPUs.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software managed memory. The application would have to knowingly move data in and out of it. The benefit here is predictability: you always know if something is in shared memory because you put it there. The downside is it doesn’t work so well if the application isn’t very predictable.

Branch heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
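The choice between the two splits can be modeled in a few lines. The sketch below is a toy model of the decision, not NVIDIA's driver logic: `pick_partition` and its `prefer_l1` flag are hypothetical names. In CUDA itself the preference is expressed per kernel through `cudaFuncSetCacheConfig`.

```python
KB = 1024

# The two splits described above, as (shared memory bytes, L1 cache bytes).
PARTITIONS = ((16 * KB, 48 * KB), (48 * KB, 16 * KB))


def pick_partition(shared_bytes_needed: int, prefer_l1: bool = True):
    """Return a (shared, l1) split that satisfies the shared memory request."""
    fitting = [p for p in PARTITIONS if p[0] >= shared_bytes_needed]
    if not fitting:
        raise ValueError("request exceeds the 48KB maximum shared partition")
    # Among the splits that fit, maximize whichever side the caller prefers.
    side = 1 if prefer_l1 else 0
    return max(fitting, key=lambda p: p[side])


gt200_style_app = pick_partition(16 * KB)                     # keeps 48KB as L1
shared_hungry_app = pick_partition(16 * KB, prefer_l1=False)  # 48KB shared
```

An application written for GT200's 16KB of shared memory fits either split, so it can take the larger L1 for free. One that needs more than 16KB is forced into the 48KB/16KB arrangement.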

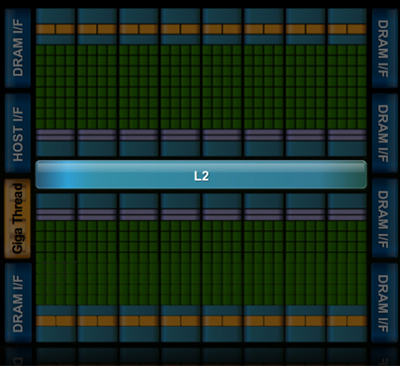

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op; Fermi is 5 - 20x faster here than GT200.

415 Comments


Griswold - Wednesday, September 30, 2009 - link

Well, you have to consider that nvidia is getting between a rock and a hard place. The PC gaming market is shrinking. Theres not much point in making desktop chipsets anymore... they have to shift focus (and I'm sure they will focus) on new things like GPGPU. I wont be surprised if GT300 wont be a the super awesome gamer GPU of choice so many people expect it to be. And perhaps, the one after GT300 will be even less impressive for gaming, regardless of what they just said about making humongous chips for the high-end segment.

SiliconDoc - Wednesday, September 30, 2009 - link

Gee nvidia is between a rock and a hard place, since they have an OUT, and ATI DOES NOT.

lol

That was a GREAT JOB focusing on the wrong player who is between a rock and a hard place, and that player would be RED ROOSTER ATI !

--

no chipsets

no chance at TESLA sales in the billions to coleges and government and schools and research centers all ove the world....

--

buh bye ATI ! < what you should have actually "speculated"

...

But then, we know who you are and what you're about -

TELLING THE EXACT OPPSITE OF THE TRUTH, ALL FOR YOUR RED GOD, ATI !

--

silverblue - Thursday, October 1, 2009 - link

When nVidia actually sends out Fermi samples for previews/reviews, only then will you know how good it is. We all want to see it because we want competition and lower prices (and maybe some of us will buy one or more, as well!).

Until then, keep your fanboy comments to yourself.

SiliconDoc - Thursday, October 1, 2009 - link

No silverblue, that is in fact your problem, not mine, as you won't know anything, till you're shown a lie or otherwise, and it's shoved into your tiny processor for your personal acceptance.

The fact remains, red fanboy raver Griswold blew it, and I pointed out exactly WHY.

The fact that you cry about it, because you group stupid dummies keep blowing nearly every statement you make, sure isn't my fault.

silverblue - Thursday, October 1, 2009 - link

I wonder if you do actually read posts before you reply to them.

SiliconDoc - Thursday, October 1, 2009 - link

Take your own advice, you pathetic hypocrit.

ClownPuncher - Thursday, October 1, 2009 - link

Its actually "hypocrite".

SiliconDoc - Friday, October 2, 2009 - link

It's "it's", you pathetic hypocrit.

silverblue - Friday, October 2, 2009 - link

It's "hypocrite", you pathetic hypocrite.

chizow - Wednesday, September 30, 2009 - link

Nvidia is simply hedging their bets and expanding their horizons. They've still managed to offer the fastest GPUs per product cycle/generation and they're clearly far more advanced than AMD when it comes to GPGPU in both theory and practice.

Jensen's keynote tipped his hat numerous times to Nvidia's roots as a GPU company that designed chips to run 3D video games, but the focus of his presentation was clearly to sell it as more than that, as a cGPU capable of incredible computational ability.