NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in: GPUs

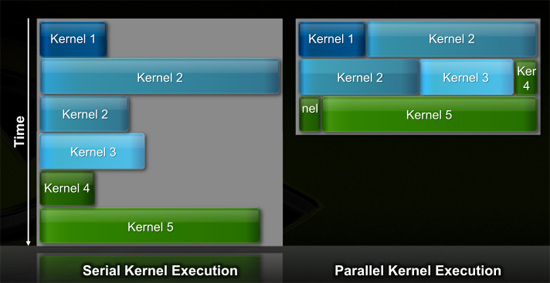

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs went up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep the chip fed.
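To put rough numbers on the idle SM problem, here is a toy model in Python. It is only a sketch under loud assumptions: the SM count, the kernel widths, and the idea that every kernel takes exactly one time step are all hypothetical, and the packing loop is a simple first-fit stand-in, not NVIDIA's actual dispatch logic.

```python
def serial_utilization(kernel_widths, num_sms):
    """One kernel owns the chip per time step (the GT200/G80 behavior)."""
    # A kernel can never occupy more SMs than the chip has.
    busy = sum(min(w, num_sms) for w in kernel_widths)
    steps = len(kernel_widths)
    return busy / (num_sms * steps)


def concurrent_utilization(kernel_widths, num_sms):
    """Several kernels share a time step while free SMs remain (first-fit)."""
    free_per_step = []  # SMs still free in each time step
    busy = 0
    for w in kernel_widths:
        w = min(w, num_sms)
        for i, free in enumerate(free_per_step):
            if free >= w:
                free_per_step[i] -= w  # kernel fits next to earlier ones
                break
        else:
            free_per_step.append(num_sms - w)  # open a new time step
        busy += w
    return busy / (num_sms * len(free_per_step))


# Hypothetical workload: kernels that can fill 4, 4, 8 and 16 SMs.
widths = [4, 4, 8, 16]
print(serial_utilization(widths, num_sms=16))
print(concurrent_utilization(widths, num_sms=16))
```

In this made-up workload the three narrow kernels fit side by side in a single time step, so the concurrent case finishes in two steps instead of four. Both functions expect a non-empty list of widths.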

Application switch time (moving between GPU and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
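The benefit of parallel transfers is easy to estimate with back-of-the-envelope arithmetic. The Python sketch below assumes a hypothetical link speed and buffer size (neither figure comes from NVIDIA) and assumes the two directions do not slow each other down when they overlap.

```python
def transfer_time_ms(bytes_moved, bandwidth_gb_s):
    """Time to move a buffer one way; 1 GB/s equals 1e6 bytes per millisecond."""
    return bytes_moved / (bandwidth_gb_s * 1e6)


def serial_total_ms(upload_ms, download_ms):
    """Transfers queued back to back: the pre-Fermi case described above."""
    return upload_ms + download_ms


def overlapped_total_ms(upload_ms, download_ms):
    """Both directions in flight at once: the longer transfer sets the time."""
    return max(upload_ms, download_ms)


# Hypothetical numbers: 64 MB each way over a 4 GB/s per-direction link.
up = transfer_time_ms(64_000_000, bandwidth_gb_s=4)
down = transfer_time_ms(64_000_000, bandwidth_gb_s=4)
print(serial_total_ms(up, down), overlapped_total_ms(up, down))
```

With equal traffic in both directions the overlapped case takes half the time of the serial one. When one direction dominates, the gain shrinks toward zero.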

415 Comments


LawRecordings - Thursday, October 1, 2009 - link

Buwahahaha!!!

What a sad, lonely little life this Silicon Doc must lead. I struggle to see how this guy can have any friends, not to mention a significant other. Or even people that can stand being in a room with him for long. Prolly the stereotypical fat boy in his mom's basement.

Careful SD, the "red roosters" are out to get you! It's all a conspiracy to overthrow the universe, and you're the only one that knows!

Great article Anand, as always.

Regards,

Law

Vendor agnostic buyer of the best price / performance GPU at the time

SiliconDoc - Thursday, October 1, 2009 - link

They can't get me, they've gotten themselves, and I've just mashed their face in it.

And you're stupid enough to only be able to repeat the more than thousandth time repeated internet insult cleche's, and by your ignorant post, it appears you are an audiophile who sits bouncing around like a retard with headphones on, before after and during getting cooked on some weird dope, a HouseHead, right ? And that of course does not mean a family, doper.

So you giggle like a little girl and repeat what you read since that's all the stoned gourd can muster, then you kiss that rear nice and tight, brown nose.

Don't forget your personal claim to utter innocence, either, mr unbiased.

LOL

Yep there we have it, a househead music doused doped up butt kisser with a lame cleche'd brain and a giggly girl tude.

Golly, what were you saying about wifeless and friendless ?

ClownPuncher - Thursday, October 1, 2009 - link

What exactly is a cleche?

Is it anything like a cliche?

Your spelling, grammar, and general lack of communication skill lead me to think that you are actually a double agent, it's an act if you will...an ATI guy posing as a really socially stunted Nvidia fan in an attempt to turn people off of Nvidia products solely by the ineptitude of your rhetoric.

UNCjigga - Wednesday, September 30, 2009 - link

I'd hate to have a political conversation with SiliconDoc, but I digress...

Some very interesting information came out in today's previews. Will Fermi be a bigger chip than Cypress? Certainly. Will it be more *powerful* than Cypress? Possibly. Will it be more expensive than Cypress? Probably. Will it have more memory bandwidth than Cypress? Yes.

Will it *play games* better than Cypress? Remains to be seen. Too many factors at play here. We don't know clock speeds. We have no idea if "midrange" Fermi cards will retain the 384-bit memory interface. We have

For all we know, all of Fermi's optimizations will mean great things for OpenCL and DirectCompute, but how many *games* make use of these APIs today? How can we compare DirectX 11 performance with few games and no Fermi silicon available for testing? Most of the people here will care about game performance, not Tesla or GPGPU. Hell, it's been years since CUDA and Stream arrived and I'm still waiting for a decent video encoding/transcoding solution.

Calin - Thursday, October 1, 2009 - link

Even between current cards (NVIDIA and AMD/ATI) the performance crown moves from one game to another - one card could do very well in one game and much worse in another (compared to the competition). As for not yet released cards, performance numbers in games can only be divined, not predicted.

Bull Dog - Wednesday, September 30, 2009 - link

So how much is NVIDIA's focus group partner paying you to post this stuff?

dzoni2k2 - Wednesday, September 30, 2009 - link

You seriously need to take your medicine. And call your shrink.

dragonsqrrl - Thursday, October 1, 2009 - link

I know it seems like SiliconDoc is going on a ranting rage, because he kinda is, but the fact remains that this was a fairly biased article on the part of Anandtech. I've been reading reviews and articles here for a long time, and recently there has been a certain level of prejudice against Nvidia and its products that I haven't noticed on other legitimate review sites. This seems to have been the result of Anandtech getting left out of the loop last year. Throughout the article there is a pretty obvious sarcastic undertone towards what the Nvidia representatives say, and their newly announced GPU. I can only hope that this stops, so that Anandtech can return to its former days of relatively balanced and fair reporting, which is all anyone can ask of any legitimate review site. Articles of this manner and tone serve no purpose but to enrage people like SiliconDoc, and hurt Anandtech's image and reputation as a balanced and legitimate tech site.

Keeir - Thursday, October 1, 2009 - link

Curious in where you see the Bias.

I see a little bit of the tone, but it seems warranted for a company that has for the last few years over-promised and under delivered. Very similar to how AMD/ATI was treated up to the release of the 4 series. Nvidia needs to prove (again) that it can deliver a real innovative product priced at an affordable level for the core audience of graphics cards.

Here we are, 7 days after 5870 launch and Egg has 5870s for ~375 to GTX 295s at 500. Yet again, ATI/AMD has made it a puzzling choice to buy any Nvidia product more than 200 dollars.... for months at a time.

SiliconDoc - Friday, October 2, 2009 - link

What's puzzling is you are so out of touch, you don't realize the GTX295's were $406 before ati launched its epic failure, then the gtx295 rose to $469 and the 5870 author explained in text the pre launch price, and now you say the GTX295 is at $500.

Clearly, the market has decided the 5870 is epic failure, and instead of bringing down the GTX295, it has increased its value !

ROFLMAO

Awwww, the poor ati failure card drove up the price of the GTX295.

Awww, poor little red roosters, sorry I had to explain it to you, it's better if you tell yourself some imaginary delusion and spew it everywhere.