Quad SLI with 9800 GX2: Pushing a System to its Limit

by Derek Wilson on March 25, 2008 9:00 AM EST - Posted in GPUs

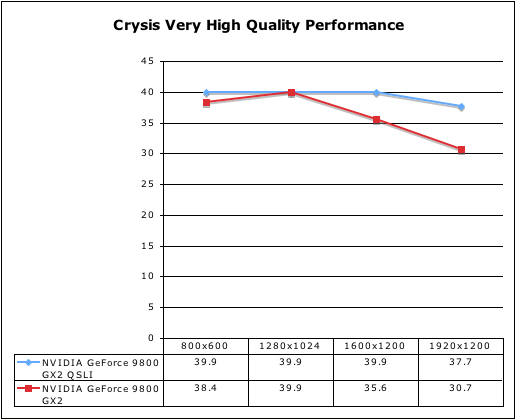

What about Crysis on Very High?

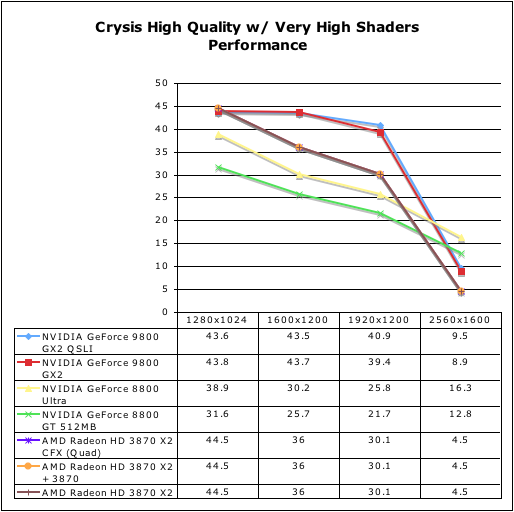

So we tested Crysis on Very High settings with our single 9800 GX2 and Quad setup. Here’s what we got:

As we can see, with very high quality, performance starts to diverge between the dual and quad GPU NVIDIA solutions. It’s also interesting to note that performance doesn’t drop a great deal when moving up in quality. This indicates that the 9800 GX2 is still system bound in some way. And oddly enough, it looks like it is more system bound at the higher quality setting.

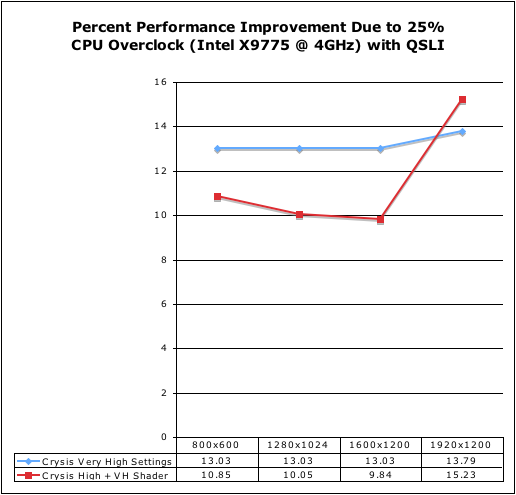

To test this absurd theory, we decided to see what happened when we overclocked our 8 CPU cores from 3.2 to 4GHz. The theory is that we are CPU bound to about 45-50 fps at high quality and 40 fps at very high quality. Here is what our 25% overclock netted us when we tested with both very high and high quality using the 9800 GX2 in Quad SLI.

I’ll give you all a second to pick your jaws up off the floor....

Ok, break’s over. We see more than half a percent of performance improvement per percent increase in CPU clock speed; a 25% clock speed increase netted us more than half that (over 12.5%) in real performance under Very High Quality settings. With High Quality plus Very High Shaders, the gain was less than half the clock speed increase at lower resolutions until we hit 1920x1200, which netted us a 15% gain on a 25% increase in clock speed.
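For anyone who wants to run the same arithmetic on their own numbers, here is a minimal sketch of the ratio we are talking about. The function names are ours and purely illustrative; only the 3.2GHz and 4GHz clocks and the 15% gain at 1920x1200 come from the text above. It assumes a simple reading of the ratio: close to 1.0 suggests a mostly CPU-bound test, close to 0 suggests the bottleneck is elsewhere.

```python
def clock_gain_pct(base_ghz: float, oc_ghz: float) -> float:
    """Percent increase in CPU clock speed from base to overclocked."""
    return (oc_ghz - base_ghz) / base_ghz * 100.0


def scaling_efficiency(perf_gain_pct: float, clock_pct: float) -> float:
    """Fraction of a clock speed increase that shows up as extra frame rate.

    Assumption (ours): ~1.0 means the test is almost entirely CPU bound,
    ~0.0 means the CPU is not the limiting factor.
    """
    return perf_gain_pct / clock_pct


if __name__ == "__main__":
    # Clocks from the article: 3.2GHz stock, 4GHz overclocked.
    clock_pct = clock_gain_pct(3.2, 4.0)

    # Gain from the article: 15% at 1920x1200, High Quality + Very High Shaders.
    efficiency = scaling_efficiency(15.0, clock_pct)

    print(f"Clock speed increase: {clock_pct:.0f}%")
    print(f"Scaling efficiency:   {efficiency:.2f}")
```

Anything above 0.5 here corresponds to the "more than half" result described above.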

This indicates that the higher the graphical quality, the MORE CPU bound we are. Crazy isn’t it? It's counter-intuitive, but pure fact. In speaking with NVIDIA about this (they have helped us a lot in understanding some of our issues here), the belief is that more accurate and higher quality physics at higher graphical quality settings is what causes this overhead. Also, keep in mind that we are testing in a timedemo with AI disabled.

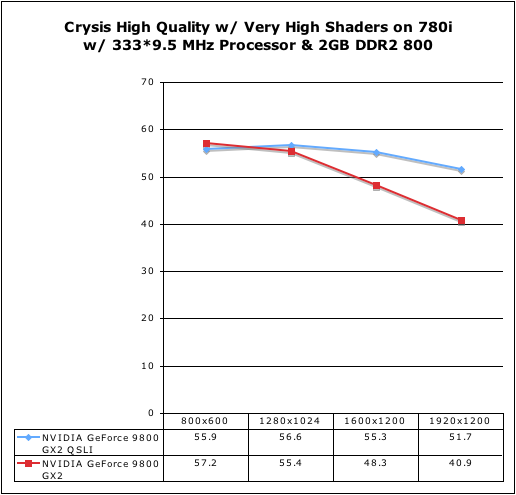

And that’s not where it ends. We are platform bound as well. Yes, I said platform bound. A quick check on 780i returned these results:

Note that we are still CPU bound here even though performance is about 25% higher than on Skulltrail (we benefit even more from a platform change than from an overclock). This is with the same speed CPU. It’s quite unfortunate that we stumbled across all of this last night with the help of NVIDIA while trying to troubleshoot our issues. Remember we said that NVIDIA expects a 60% improvement in Crysis at 1920x1200 with Very High Quality settings. The added number of cores, the fact that I’m only able to run two FB-DIMMs at the moment (for half of the system memory bandwidth I should have), the arrangement of the PCIe lanes on Skulltrail … All of this contributes to our system limitation here and our inability to see scaling from 9800 GX2.

Now, we have seen better performance on Skulltrail in the past, so it is unclear if there is something we can do to remove some of this system limitation at this point. We will certainly be exploring this further as we would still like to make a single platform work for all of our graphics testing. If we can’t then we’ll move on, but it is useful to discover if this is an Intel issue, an OS issue, a driver issue, or something else. If it’s fixable we need to find out how to fix it, as there are probably two or three people out there who’ve purchased a D5400XS board and will not be happy if it performs much worse than 780i boards in cases like this. My working theory right now is that I applied some hotfix or changed some seemingly benign OS setting that caused some problem somewhere. But like I said, we’ll track it down.

54 Comments


iceveiled - Tuesday, March 25, 2008 - link

I understand crysis is a good game to test the muscle power of video cards, but if anybody out there hasn't played the game yet and wants the best setup for it, please don't spend $1200 in video cards. I've played through half life 2 numerous times, call of duty 4 numerous times, and crysis only once. Once you get over the wow factor of the graphics, it's not that amazing of an experience....

mark3450 - Tuesday, March 25, 2008 - link

In this and the last article on the 9800GX2 the following benchmarking data on Crysis @2560x1600 has shown up in a chart.

9800GX2 - 8.9FPS

8800Ultra - 16.3FPS

8800GT - 12.3FPS

Now I look at this and I say the FPS scaling you get by adding a second card is generally around 50% to 60% in the best case scenario. If we assume that, then 2x 8800Ultra would be getting around 25FPS, which is getting into playable range especially with the motion blur that Crysis uses. Obviously this is assuming decent scaling, but this data just screams give it a try.

On a slightly related note, I also see that the same Crysis chart shows that two cards scale roughly linearly with resolution up to 2560x1600 (8800GT and 8800Ultra) while the others show a sharp drop at 2560x1600 (9800GX2, all AMD cards). This makes me ask the question what's different about these two groups of cards. One common feature I note is that the cards that scale linearly are all using PCIe 2.0, while the ones that have a sharp drop off @2560x1600 are using PCIe 1.x (the 9800GX2 is externally PCIe 2.0, but internally the two cards are connected via PCIe 1.x). Maybe it has nothing to do with the type of PCIe connection, but it certainly correlates.

Basically all this makes me think that for gaming at 2560x1600 I'm likely to be better off with two 8800Ultra's (or even 8800 GTX's) than I am with one or even two 9800GX2's (and since I and a lot of people interested in gaming on high end rigs at 2560x1600 likely have an 8800GTX/Ultra already it would be far cheaper as well). This is of course all speculation since there are no reported benchmarks for 8800GTX/Ultra in SLI mode in these comparisons, which is why I'd like to request them. :)

-Mark

mark3450 - Tuesday, March 25, 2008 - link

Turns out hardocp has a review up at http://enthusiast.hardocp.com/article.html?art=MTQ...

(can't insert a proper link for some reason)

that compares 2x 9800GX2 with 2x 8800GTX's. The short summary is 2x 8800GTX's are better than 2x 9800GX2 at hi-res gaming. The 9800GX2's often have higher average frame rates than the 8800GTX's, but the 8800GTX's have much more consistent frame rates (the 9800GX2's often had their frame rates crash to unacceptable levels for short periods of time, whereas the 8800GTX's were playable throughout).

Essentially it looks like I am better off getting a second 8800GTX rather than 1 or 2 9800GX2's for gaming at 2560x1600, and it's way cheaper to boot.

I will still wait till next week to see how the 9800GTX performs, but given the leaked info on it and recent history of anemic releases by NVIDIA I'm not holding out much hope for the 9800GTX.

-Mrk

zshift - Tuesday, March 25, 2008 - link

in the second paragraph you noted the skulltrail as having 2x lga775 sockets, but i'm pretty sure it has lga771 sockets only. if i'm mistaken, i apologize, if i'm right, please correct the error so other less knowledgeable readers don't receive false information.

Tilmitt - Tuesday, March 25, 2008 - link

You guys shouldn't be using Skulltrail to benchmark games. It's not a gaming platform. Most games run slower on it than on a single socket quad core system due to the FB DIMMs. It provides a sub-optimal environment for both SLI and crossfire which negates any value that it might have for levelling the playing field there. I think the author is letting his personal desire to use the Skulltrail system get in the way of doing a proper review. The fact of the matter is that Skulltrail is slow for games and doesn't reflect how the vast majority of people would run their SLI and crossfire setups.

As to the multi-GPUness, I think you'd have to be mad to buy them given the price and horrendous scaling. As always, the generation cards will mostly outperform multi-GPU systems at less cost, less power consumption and more consistent performance across all games.

tynopik - Tuesday, March 25, 2008 - link

faint of heart, not feint ;)

cactusjack - Tuesday, March 25, 2008 - link

This is my point. The testers here had "some problems" and these guys are very experienced and technically savvy. They also have access to a lot of PSUs, RAM, etc. to try if things don't work right. If it were a car or a television it would be sent back as what it is, a failure, and a lemon. Why do we accept it with PC parts?

Inkjammer - Tuesday, March 25, 2008 - link

Case in point, I have the 9800 GX2:

* I can not run multiple monitors with SLI enabled. So I have to swap between my 24" monitor and my Wacom Cintiq 21". When I change over, the drivers won't auto-detect the resolution, and uses resolutions and hertz the Wacom doesn't support, and I get an "out of signal" error. I have to disable SLI to use my $2,500 art tablet as a secondary monitor.

* I'm a graphic designer, and I can't take screenshots anymore without them coming out garbled like this:

http://www.inkjammer.com/broken_screencaps.jpg

I could find workarounds, get a screen cap program or just disable SLI, but this is all basic functionality gone bad.

There are a LOT of little problems that could impede testing without being visible. The fact that SLI breaks basic functions like multi-monitor setups and screen capture in Vista is puzzling. These drivers feel like betas lacking basic functionality. If I even try Crysis with 8X FSAA my entire system crashes.

dare2savefreedom - Tuesday, March 25, 2008 - link

Did you report this to nvidia: http://www.nvidia.com/object/vistaqualityassurance...

Inkjammer - Tuesday, March 25, 2008 - link

I moved from an 8800 GTX to a 9800 GX2, and I'm really frustrated with the drivers - there are a lot of issues with them running it in "SLI". The card has a lot of raw performance, but seeing it doubled up with two cards...

Costs aside, it really seems like anything beyond 2 GPUs at this point in time is rather useless. The technology is there, but the drivers are still too immature and the rest of the tech it requires to be useful hasn't caught up to speed.